Artificial intelligence is transforming healthcare by analyzing medical images in seconds, detecting diseases before symptoms appear, and helping doctors make treatment decisions backed by millions of data points. Machine learning algorithms now identify health risks earlier than traditional methods, while predictive analytics prevent medical errors and reduce administrative burdens on healthcare providers. These AI-powered technologies are revolutionizing medical diagnosis, treatment planning, drug discovery, and patient monitoring, delivering faster, more accurate, and more accessible care.

Beyond clinical applications, AI serves as an essential bridge between patients and healthcare systems through automated appointment scheduling, 24/7 patient support, medication reminders, and follow-up calls, keeping patients engaged with their treatment plans. This technology allows nurses and administrative staff to focus on complex care tasks while ensuring patients receive timely information and support. Healthcare facilities looking to streamline operations and improve patient engagement can explore Bland's conversational AI solutions.

Summary

- Nearly half of physicians report burnout symptoms, driven primarily by time spent on electronic health records, insurance authorizations, and administrative protocols rather than on patient care. The American Medical Association found that 46% of physicians experience burnout, a direct consequence of cognitive overload from systems that prioritize documentation over clinical thinking. This isn't a resilience issue. It's a structural problem where cardiologists answer 80 messages before seeing their first patient, leaving little capacity for the careful diagnostic work medicine requires.

- AI-powered imaging tools detect diseases like cancer with 95% accuracy according to SmartData Inc., but the real value lies in their role as first-pass filters rather than replacements for radiologist judgment. These systems flag abnormalities requiring human review, prioritize urgent cases, and reduce the cognitive burden of scanning hundreds of normal studies. The outcome extends beyond faster reads to earlier detection, when treatment windows remain wide and prognosis stays favorable.

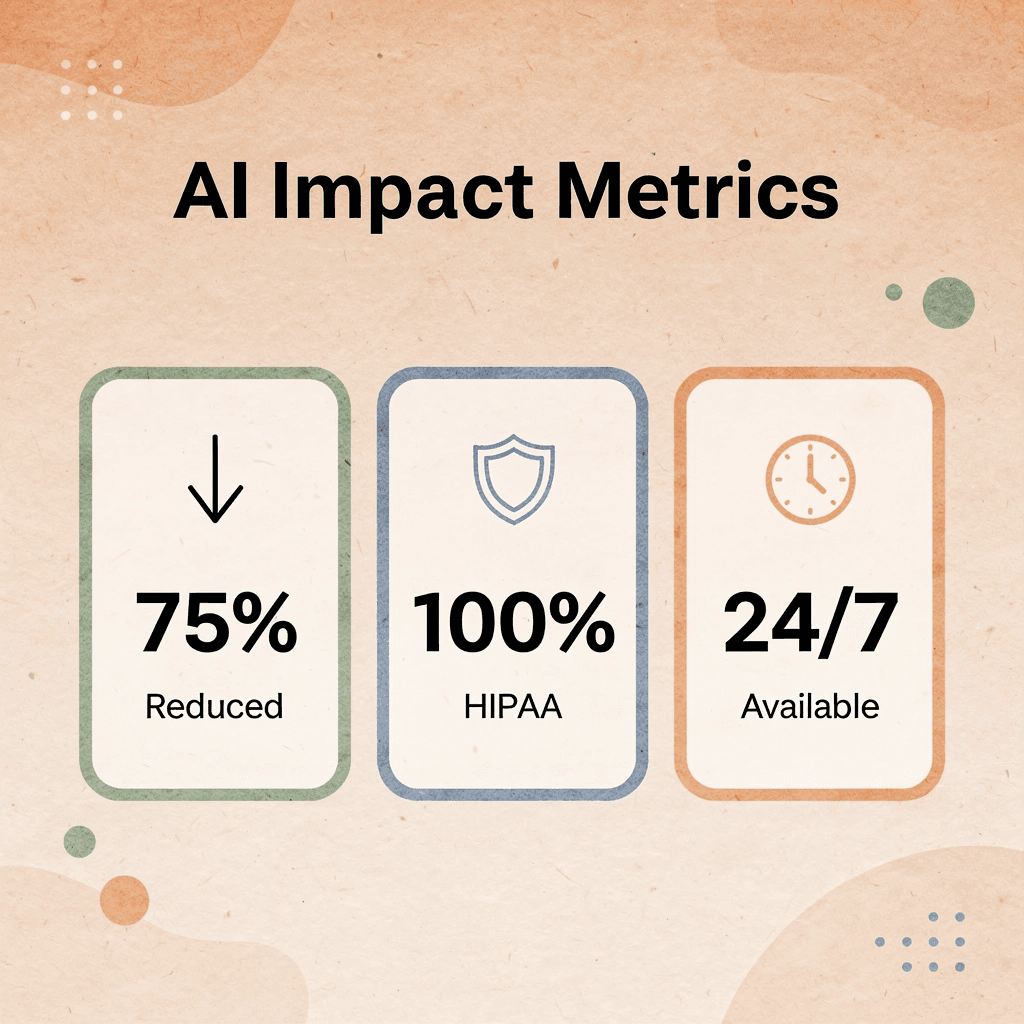

- AI chatbots handle up to 80% of routine patient inquiries according to SmartData Inc., addressing the operational bottleneck of appointment confirmations, prescription refills, and insurance questions that consume staff time daily. Conversational AI addresses this by managing high-volume phone interactions with sub-400ms response times and HIPAA-compliant infrastructure, freeing clinical staff for cases requiring human judgment while patients receive immediate, accurate responses.

- Predictive models evaluated in a March 2025 study missed 66% of cases in which patients faced a risk of death from injuries and complications, revealing a critical gap between controlled environments and messy clinical reality. When AI fails to identify patients in crisis, the consequences aren't workflow disruptions or efficiency losses. They're preventable deaths. Healthcare operates in uncertainty: patients present with incomplete histories and symptoms that don't follow textbook progressions, creating the edge cases that matter most but fall outside what AI has seen before.

- Automation bias leads skilled pathologists under time pressure to abandon correct diagnoses: those who initially got it right were 7% more likely to change their decision in favor of an incorrect AI recommendation. This phenomenon reveals a dangerous dynamic where tools designed to support clinical judgment instead undermine it. AI systems that lack explainability or transparency about their reasoning create false confidence, suppressing the very expertise they're meant to augment.

- Healthcare organizations now allocate 15-20% of IT budgets to AI initiatives according to Vention Healthcare AI Statistics 2025, signaling this technology has moved from experimental to operational infrastructure. The shift addresses a fundamental capacity problem where data volume and complexity exceed what any clinician can process in real time, requiring systems that synthesize genetics, wearable data, and patient history into actionable patterns without attempting to replace the context, judgment, and communication that define medicine.

Why Traditional Healthcare Systems Are Struggling to Keep Up

Healthcare systems are breaking under a volume and complexity of work that human workers alone cannot handle. The infrastructure was designed for fewer patients, simpler data, and more predictable workflows: a foundation that is now failing under modern demands.

🎯 Key Point: The fundamental mismatch between legacy healthcare infrastructure and current patient volumes creates a critical bottleneck that technology must address.

"Healthcare infrastructure was designed for fewer patients, simpler data, and more predictable workflows—a foundation that is now failing under modern demands." — Healthcare Analysis, 2024

⚠️ Warning: Without immediate technological intervention, healthcare systems will continue to experience workforce burnout and declining patient outcomes as demand outpaces capacity.

What's driving the physician burnout crisis?

46% of physicians report symptoms of burnout according to the American Medical Association. Doctors spend more time working with electronic health records, obtaining insurance approvals, and handling administrative tasks than speaking with patients. A cardiologist answers approximately 80 messages before seeing her first patient. This cognitive load eliminates the careful thinking that diagnosis demands.

How do staffing shortages affect patient care?

Staffing shortages exacerbate this problem. With too few staff, the remaining team loses hours each day to scheduling appointments, patient follow-ups, prescription refills, and insurance verification calls. According to the Kaiser Family Foundation, 1 in 4 Americans delayed medical care due to cost, and some of those delays stem from systems too overwhelmed to answer phones, coordinate care, or explain costs clearly to patients.

Why does data volume exceed human processing capabilities?

Modern diagnostics create information faster than care teams can understand it. A single patient with chronic conditions may have lab results, imaging studies, specialist notes, medication lists, and vital sign trends spread across multiple systems. Radiologists review hundreds of scans weekly, each containing thousands of data points. They must synthesize this information, spot patterns, catch problems, and make time-sensitive decisions without missing anything critical.

What are the human limits in processing medical data?

Human attention and memory have limits. The number of variables a person can hold at once and compare against evolving clinical guidelines has a ceiling, and we've exceeded it. Diagnostic delays stem not from incompetence but from systems designed for analog workflows that must now manage digital-scale complexity.

How does phone volume create an operational burden?

Phone calls create a significant operational burden. Patients call to schedule appointments, reschedule, ask medication questions, clarify discharge instructions, request refills, and verify appointments. Each call requires staff to answer, access the appropriate system, document the interaction, and route the request elsewhere.

Multiply that by thousands of patients per facility, and hold times stretch to 20 minutes, with callbacks taking days. Conversational AI platforms handle these high-volume interactions with response times under 400 milliseconds and HIPAA-compliant infrastructure, freeing clinical staff for cases requiring human judgment while patients receive immediate, accurate responses to routine questions.

Why can't current systems handle the demand?

The system, built for a pre-digital era, cannot scale to meet today's needs.

How AI Is Already Improving Diagnosis, Treatment, and Patient Care

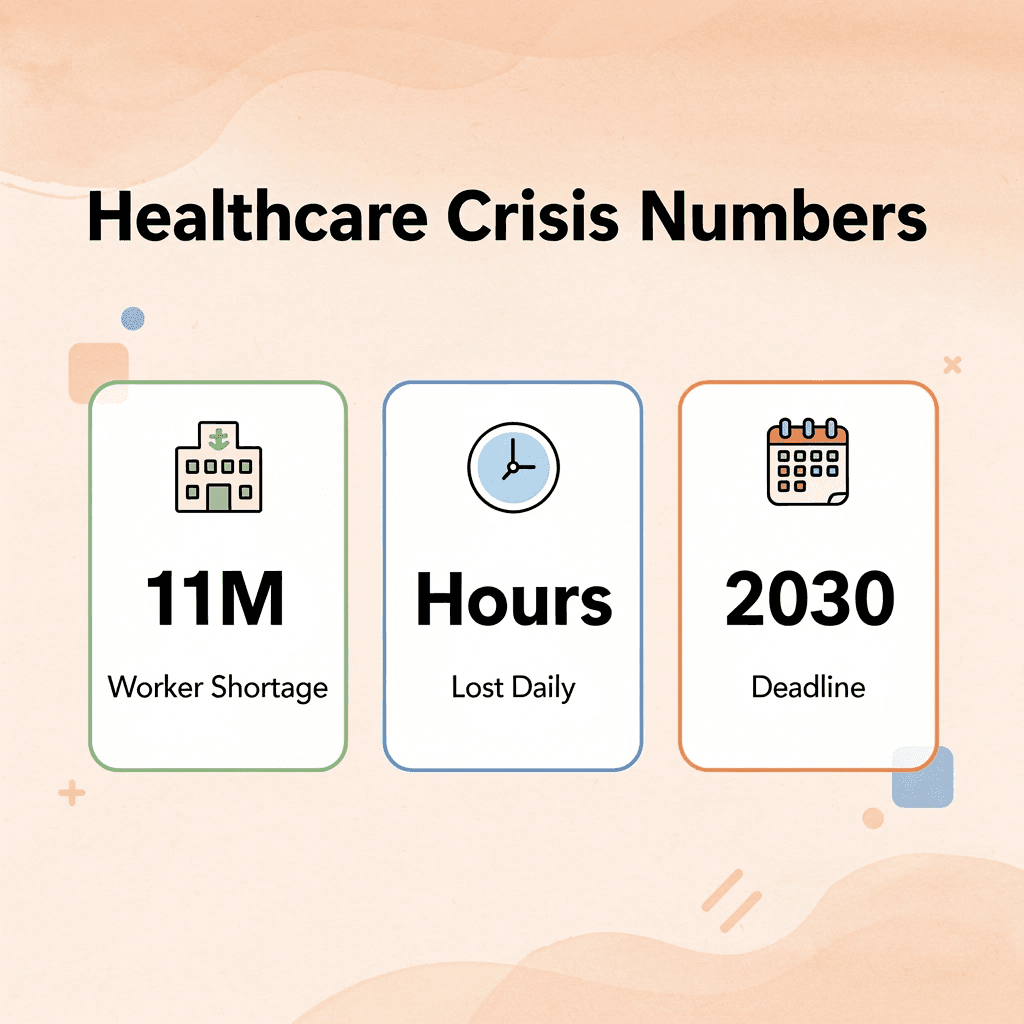

Healthcare systems face significant pressures: a projected shortage of 11 million health workers by 2030, clinicians losing hours daily to documentation, and long wait times worsening outcomes. Small fixes cannot solve these complex problems.

🎯 Key Point: AI is already being used in care delivery—from emergency departments to billing offices. Machine learning and predictive analytics are reducing the uncertainty that gaps between clinical knowledge and real-time action create.

"AI helps clinicians learn new approaches to treatment and diagnostic testing for some cases that can reduce uncertainty in medicine." — Dr. Maha Farhat, Massachusetts General Hospital

⚠️ Warning: What follows is where that impact is being felt most concretely: not where AI might help, but where it already is delivering measurable results in real healthcare settings.

Diagnostic Imaging: From Pattern Recognition to Clinical Decision Support

Radiologists face a volume problem that no amount of training can solve. A single chest CT generates over 300 images. Busy departments process hundreds of studies weekly, each containing thousands of data points, under constant time pressure, with expectations of zero missed findings. AI-powered imaging tools can detect diseases like cancer with 95% accuracy, according to SmartData Inc.'s 2025 research. These systems serve as a first-pass filter, flagging abnormalities for human review, prioritizing urgent cases, and reducing cognitive load. This enables earlier detection, when treatment windows are wider and outcomes are better.
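
The first-pass filter idea can be sketched in a few lines. This is a minimal illustration rather than any vendor's implementation: the `Study` record, the `abnormality_score` field, and the 0.5 threshold are all assumptions made for the example. The point it shows is that the score reorders and flags the worklist without removing anything from human review.

```python
from dataclasses import dataclass


@dataclass
class Study:
    study_id: str
    abnormality_score: float  # hypothetical model output between 0 and 1


def triage(studies, review_threshold=0.5):
    """Order the worklist so the highest-scoring studies are read first.

    Every study stays on the list. The score only changes the order and
    adds a flag, so a radiologist still reads each one.
    """
    ranked = sorted(studies, key=lambda s: s.abnormality_score, reverse=True)
    return [(s.study_id, s.abnormality_score >= review_threshold) for s in ranked]


worklist = triage([
    Study("CT-001", 0.12),
    Study("CT-002", 0.91),
    Study("CT-003", 0.55),
])
for study_id, flagged in worklist:
    print(study_id, "FLAGGED" if flagged else "routine")
```

Keeping low-scoring studies on the list, rather than dropping them, is the design choice that keeps the tool a filter and not a replacement for the read.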

Predictive Analytics: Catching Sepsis Before Symptoms Escalate

Emergency rooms face constant challenges in deciding which patients need care first. By the time sepsis shows clear warning signs—fever, low blood pressure, and confusion—the infection has already become serious.

AI systems track multiple measurements simultaneously, including heart rate, breathing patterns, white blood cell counts, lactate levels, and temperature. The system can spot sepsis risk hours before patients show symptoms, enabling doctors to start antibiotics and fluids early when treatment is most effective. One hospital system reported a 20% reduction in sepsis mortality after using predictive monitoring in their ICU beds.
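
To make the idea of watching several measurements at once concrete, here is a deliberately simple rule-based screen using the classic SIRS criteria. It is a stand-in for illustration only: the predictive systems described above are statistical models, not fixed thresholds, and this sketch omits lactate and any trend over time. The `Vitals` record and function names are assumptions for the example, and nothing here is suitable for clinical use.

```python
from dataclasses import dataclass


@dataclass
class Vitals:
    temp_c: float        # body temperature, degrees Celsius
    heart_rate: int      # beats per minute
    resp_rate: int       # breaths per minute
    wbc_k_per_ul: float  # white blood cells, thousands per microliter


def sirs_count(v: Vitals) -> int:
    """Count how many of the four classic SIRS criteria are met."""
    criteria = [
        v.temp_c > 38.0 or v.temp_c < 36.0,
        v.heart_rate > 90,
        v.resp_rate > 20,
        v.wbc_k_per_ul > 12.0 or v.wbc_k_per_ul < 4.0,
    ]
    return sum(criteria)


def needs_review(v: Vitals) -> bool:
    """Two or more criteria is the conventional SIRS threshold."""
    return sirs_count(v) >= 2


stable = Vitals(temp_c=36.8, heart_rate=72, resp_rate=14, wbc_k_per_ul=7.5)
worsening = Vitals(temp_c=38.4, heart_rate=104, resp_rate=22, wbc_k_per_ul=13.1)
print(needs_review(stable), needs_review(worsening))
```

A learned model would replace the fixed thresholds with weights fitted to patient outcomes, which is where the earlier warning described above would come from.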

Patient Engagement: Automating Routine Communication Without Losing Quality

Healthcare organizations handle thousands of appointment confirmations, prescription refill requests, insurance questions, and follow-ups after patients leave the hospital each day. These routine tasks consume staff time and create bottlenecks during high-volume periods, leaving patients waiting on hold with unanswered messages.

Conversational AI platforms handle these interactions with fast response times (under 400 milliseconds) and HIPAA-compliant infrastructure, managing appointment scheduling, insurance coverage questions, and post-procedure check-ins through natural phone conversations. According to SmartData Inc., AI chatbots handle up to 80% of routine patient inquiries, freeing clinical staff to focus on complex cases while patients receive immediate, accurate responses outside business hours.

Pharmacogenomics: Matching Medications to Genetic Profiles

Bad reactions to drugs are a major reason people return to the hospital. These reactions are often predictable through genetic markers, drug interaction databases, and patient history, but analyzing all this takes hours that doctors cannot spare during a 15-minute appointment.

AI systems can process genomic data, medication history, and drug interactions in seconds, flagging high-risk combinations and suggesting alternatives based on a patient's genetic profile. This reduces adverse drug reactions and improves outcomes for patients whose bodies process medications differently. It's deployed in oncology centers where chemotherapy dosing must be precise, and in cardiology practices managing patients on multiple medications with narrow therapeutic windows.

Boosting Patient Engagement and Adherence

A prescription that doesn't get filled isn't a treatment. A care plan a patient doesn't understand isn't a plan. Non-adherence is one of the biggest problems in managing long-term diseases, and it rarely stems from a lack of willingness to follow the plan. It comes from friction: a missed reminder, confusing instructions, or no one available to answer a question at the right moment.

What role do wearables play in reducing patient friction?

Wearables and AI-powered devices reduce friction. Smartwatches and activity trackers collect real-time health data that keeps both patient and clinician informed between visits. Platforms like Livongo use AI to deliver personalized health nudges: targeted notifications that prompt specific decisions at the right moment, based on actual health data rather than generic reminders.

How does continuous monitoring improve care plan compliance?

When patients track their own metrics and receive real-time feedback, they stay more engaged. When they stay engaged, care plans work. This connection between continuous monitoring and actual compliance is where much of the practical value of AI in healthcare accumulates.

Predictive Analytics and Risk Stratification

Healthcare has been organized around response: a patient gets worse, a crisis is triggered, a team mobilizes. That model works until the deterioration is too fast, the team too stretched, or the signal too subtle to catch without the right tools. AI-driven predictive models are reorganizing that logic toward anticipation. In ICUs and emergency departments, these systems analyze patient data in real time and flag sepsis risk hours before clinical symptoms worsen. Hours matter in sepsis. The difference between a flag at hour two and a flag at hour six is survivability.

How does risk stratification improve chronic care management?

For chronic care, AI sorts patient groups by readmission risk, directing resources toward those most likely to deteriorate before problems become readmissions and readmissions become crises. This resource shift delivers better care while making smarter use of a stretched workforce.
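
As a rough sketch of what sorting by readmission risk means in practice, assume a model has already produced a risk score per patient. The patient IDs, scores, and the `outreach_capacity` parameter below are hypothetical. The code ranks patients and splits them by how many the care team can actually reach.

```python
def stratify(risk_by_patient, outreach_capacity):
    """Return (high_priority, standard) patient ID lists.

    risk_by_patient: dict of patient ID -> predicted readmission risk (0 to 1).
    outreach_capacity: how many patients the care team can follow up with.
    """
    ranked = sorted(risk_by_patient, key=risk_by_patient.get, reverse=True)
    return ranked[:outreach_capacity], ranked[outreach_capacity:]


risks = {"P1": 0.08, "P2": 0.41, "P3": 0.73, "P4": 0.22, "P5": 0.65}
high_priority, standard = stratify(risks, outreach_capacity=2)
print(high_priority)
print(standard)
```

Tying the cutoff to team capacity rather than to a fixed score reflects the point above: the goal is to direct a limited workforce toward the patients most likely to deteriorate.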

Clinical Chatbots to Guide Healthcare Decisions

Doctors make dozens of complex decisions daily, and AI systems that surface relevant evidence immediately are appealing. The risk lies in tools that sound authoritative but lack accuracy. A U.S. study tested standard large language models, including ChatGPT, Claude, and Gemini, on real clinical questions. They produced relevant, evidence-based answers 2–10% of the time. ChatRWD, a retrieval-augmented generation system that grounds LLM outputs in actual clinical data, answered 58% of questions usefully. The difference isn't the model but the architecture: whether the system retrieves real evidence or generates plausible-sounding text based on training data.
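
The architectural difference can be illustrated with a toy retrieval step. This is not how ChatRWD works internally. It is a minimal sketch that assumes a word-overlap search as a stand-in for a real evidence index, uses placeholder text instead of clinical data, and stops at building the prompt rather than calling any model.

```python
def tokenize(text):
    """Lowercase the text and split it into a set of words."""
    return set(text.lower().split())


def retrieve(question, documents, k=1):
    """Return the k documents sharing the most words with the question."""
    q = tokenize(question)
    ranked = sorted(documents, key=lambda d: len(q & tokenize(d)), reverse=True)
    return ranked[:k]


def build_prompt(question, documents, k=1):
    """Put retrieved evidence in front of the question for the model."""
    evidence = "\n".join(f"- {d}" for d in retrieve(question, documents, k))
    return (
        "Answer using only the evidence below. "
        "If it is insufficient, say so.\n"
        f"Evidence:\n{evidence}\n"
        f"Question: {question}"
    )


# Placeholder snippets standing in for a real clinical evidence store.
corpus = [
    "placeholder summary of sepsis outcomes in adult icu patients",
    "placeholder summary of readmission rates after hip surgery",
    "placeholder summary of statin adherence in older adults",
]
prompt = build_prompt("what are readmission rates after hip surgery", corpus)
print(prompt)
```

A model given this prompt answers from the retrieved text, while a model given only the question answers from whatever it absorbed in training.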

What results do patient-facing AI systems achieve?

At the patient level, the results are clearer. A 2024 World Economic Forum report on the digital patient platform Huma found it reduced readmission rates by 30% and cut patient review time by up to 40%. These improvements reflect a meaningful shift of clinical attention: away from routine monitoring and toward cases requiring human judgment.

Virtual Health Assistants and Chatbots

Most of the patient experience happens at home, between appointments, when questions arise without clear answers. That gap—between when instructions are given and when a patient needs them—is where people stop following their care plans. AI-powered virtual assistants fill that gap. Routine triage, symptom checking, medication reminders, and post-surgical care guidance don't require a clinician—they require accurate, accessible, real-time support at scale.

What benefits do chatbots provide for healthcare teams?

A patient recovering from a procedure can receive step-by-step guidance at 11 p.m. without having to call an on-call nurse. When routine interactions are handled by AI, clinical staff can focus on cases requiring medical judgment. That's allocating care correctly.

Operational Optimization

The quality of care doctors and nurses provide depends on how well the hospital operates. Insufficient staffing, poor bed organization, supply shortages, and billing errors that delay payments all affect patient outcomes. An understaffed ward cannot provide the same level of care as a fully staffed one, regardless of individual clinical skill.

How does AI optimize healthcare operational infrastructure?

AI improves the operational layer that enables everything else. Dynamic scheduling tools match worker availability to patient demand in real time, reducing understaffing patterns that accelerate burnout. Bed management systems optimize patient flow to eliminate bottlenecks. Supply chain logistics are streamlined to ensure materials are available when needed. Billing workflows are automated to reduce administrative errors that delay revenue cycles. None of this is visible to patients. Operational AI is the infrastructure beneath the infrastructure: without it, the clinical tools above lack the system stability they need to work.

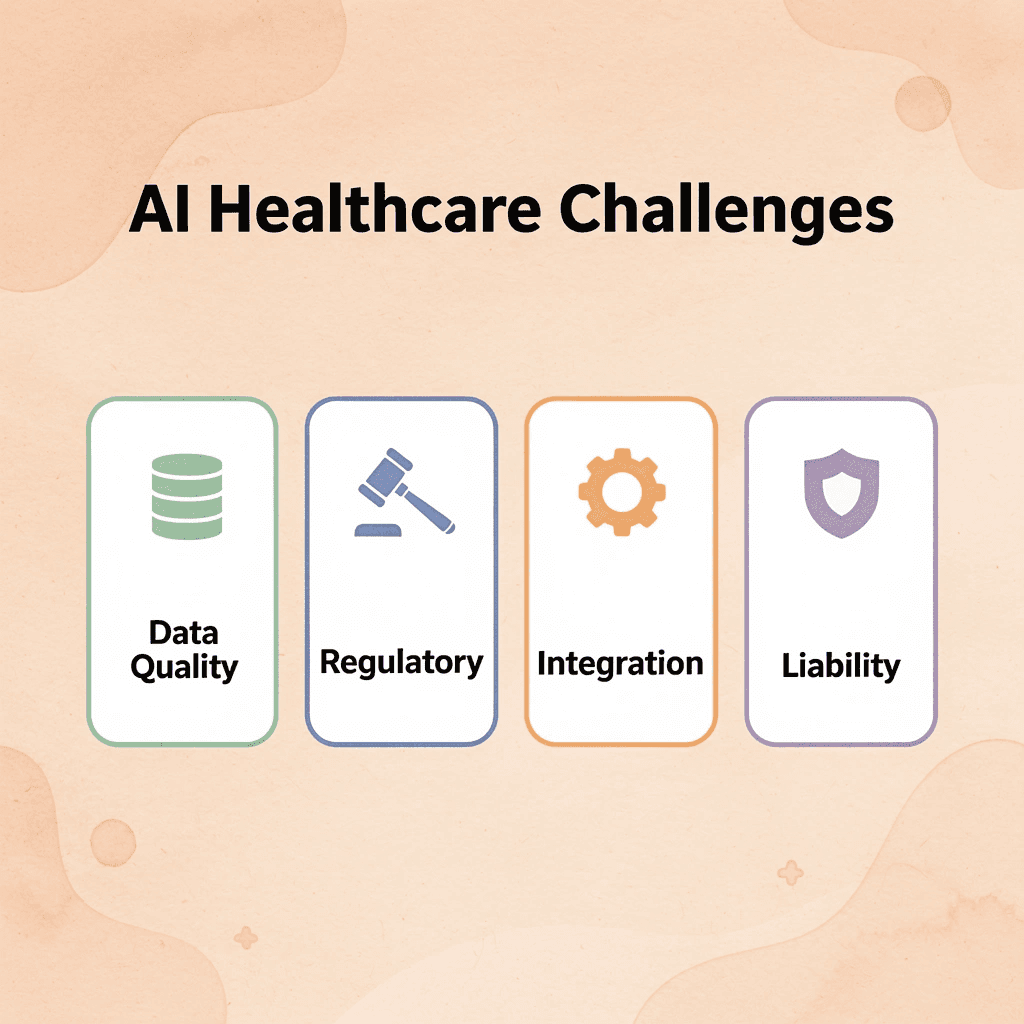

Where AI Still Falls Short in Healthcare

AI in healthcare has remarkable promise and significant risk. The gap between what these systems can do in controlled environments and what happens in real patient care is larger than most technology supporters acknowledge. Understanding these critical limitations is necessary for responsible deployment.

🎯 Key Point: The transition from laboratory success to real-world healthcare implementation reveals fundamental challenges that can't be solved with better algorithms alone.

"The gap between AI performance in controlled studies and real clinical settings represents one of the most significant barriers to widespread healthcare AI adoption." — Healthcare Technology Research, 2024

⚠️ Warning: Healthcare organizations that rush AI deployment without addressing these foundational issues risk patient safety and regulatory violations.

Why do predictive models struggle with critical healthcare scenarios?

A March 2025 study published in Communications Medicine evaluated machine learning models built to predict the mortality risk of hospital patients. The results revealed a critical flaw: the models missed 66% of cases in which patients faced a risk of death from injuries or complications.
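
Read as a miss rate, 66% corresponds to a sensitivity of 34%. The short calculation below shows why a model can still look strong on overall accuracy while missing most at-risk patients. The cohort counts are hypothetical, chosen only to match the 66% figure. They are not taken from the study.

```python
def sensitivity(true_pos, false_neg):
    """Share of truly at-risk patients the model flagged."""
    return true_pos / (true_pos + false_neg)


def accuracy(true_pos, true_neg, false_pos, false_neg):
    """Share of all predictions that were correct."""
    total = true_pos + true_neg + false_pos + false_neg
    return (true_pos + true_neg) / total


# Hypothetical cohort: 1,000 patients, 100 of them truly at risk.
tp, fn = 34, 66   # at-risk patients flagged vs. missed
tn, fp = 890, 10  # not-at-risk patients cleared vs. falsely flagged

print(f"sensitivity: {sensitivity(tp, fn):.0%}")
print(f"accuracy:    {accuracy(tp, tn, fp, fn):.1%}")
```

Because at-risk patients are a small share of the cohort, the many correct "not at risk" predictions dominate the accuracy figure.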

What happens when healthcare AI fails to identify patients in crisis?

When AI fails to identify patients in crisis, the consequences aren't efficiency losses: they're preventable deaths. Predictive models work when data is clean and patterns are clear, but healthcare operates in uncertainty. Patients present with overlapping conditions, incomplete histories, and symptoms that don't follow textbook progressions. AI trained on historical data cannot account for what it hasn't seen before, and the edge cases in medicine are often the ones that matter most.

The Automation Bias Problem

Pathologists working under time pressure showed a troubling weakness: those who initially made correct diagnoses were 7% more likely to change their decision in favor of an incorrect AI recommendation. A system designed to support clinical judgment ended up weakening it.

This automation bias reveals a dangerous pattern in high-stakes environments. Clinicians may rely on machines even when their instincts suggest otherwise. AI systems that lack explainability—those that don't show reasoning or acknowledge uncertainty—create false confidence. Clinicians need transparency, not predictions alone. Without it, the judgment AI is meant to improve gets suppressed.

When AI Inherits Our Inequities

AI isn't neutral. It learns from data shaped by human decisions, and when that data reflects systemic bias, the algorithm amplifies it. An AI tool used across major U.S. health systems was found to recommend additional care for healthier white patients while underserving sicker Black patients. The model used healthcare costs as a proxy for healthcare needs. Because Black patients have historically received less care due to longstanding disparities (not because they needed less), the algorithm interpreted lower spending as lower need.
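
The proxy problem is easy to reproduce with made-up numbers. In the sketch below, all patients, groups, condition counts, and costs are hypothetical. Group B patients are as sick as their group A counterparts but have lower recorded spending. Ranking by cost and ranking by a direct measure of illness then select different patients.

```python
# Hypothetical patients: (id, group, chronic_conditions, annual_cost_usd).
# Group B patients are just as sick as their group A counterparts but have
# historically received less care, so their recorded costs are lower.
patients = [
    ("A1", "A", 5, 12000),
    ("A2", "A", 2, 6000),
    ("A3", "A", 1, 3000),
    ("B1", "B", 5, 5500),
    ("B2", "B", 2, 2500),
    ("B3", "B", 1, 1200),
]


def top_n(rows, key_index, n):
    """IDs of the n patients ranked highest on the chosen column."""
    ranked = sorted(rows, key=lambda r: r[key_index], reverse=True)
    return [r[0] for r in ranked[:n]]


by_cost = top_n(patients, key_index=3, n=2)  # cost used as a proxy for need
by_need = top_n(patients, key_index=2, n=2)  # direct measure of illness

print("selected by cost:", by_cost)
print("selected by need:", by_need)
```

With these numbers the cost ranking picks a patient with two chronic conditions over one with five, which is the same pattern in miniature.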

What happens when AI bias goes unchecked?

Without diverse training datasets, continuous auditing, and ethical review boards, AI doesn't just fail to reduce disparities—it embeds bias into systems, making it faster, more scalable, and harder to detect. The consequences shape resource allocation, treatment protocols, and access to life-saving interventions.

Why "AI Will Replace Doctors" Misses the Point

The belief that AI will replace doctors reflects a fundamental misunderstanding of what medicine requires. AI excels at pattern recognition: processing thousands of radiology images, identifying statistical correlations in patient data, and flagging anomalies faster than humans. But medicine isn't pattern matching. It's context, ethics, judgment, and communication. It's explaining a diagnosis to a frightened patient, weighing treatment options when evidence is unclear, and recognizing when a patient's lived experience contradicts what the chart says.

What makes healthcare impossible to fully automate?

Healthcare cannot become fully automated because medicine operates in an uncertain environment. Edge cases require contextual reasoning, and human trust matters when decisions involve risk, tradeoffs, and deeply personal values. AI enhances what clinicians do—it doesn't replace the parts of care requiring empathy, nuance, and the ability to sit with ambiguity. The real question isn't whether AI can do what doctors do, but whether we're designing AI to support the parts of medicine that matter most, or simply the parts that are easiest to automate. But even when AI is designed with the right intentions, it must function within systems not built for it.

What the Future of AI in Healthcare Will Actually Look Like

Why is AI necessary in healthcare systems?

The volume and complexity of healthcare data exceed what any doctor can process in real time, making AI necessary. According to Vention Healthcare AI Statistics 2025, healthcare organizations allocate 15-20% of their IT budgets to AI projects. AI equips doctors with tools to synthesize genetic data, wearable data, and patient history into actionable patterns, enhancing clinical decision-making rather than replacing physicians.

How does AI augment clinical judgment?

Dr. Ben Shahshahani, Cleveland Clinic's Chief AI Officer, states: "AI is no longer an experiment. It's a real, scalable tool that can support patients, providers and health systems, improving outcomes, reducing stress for caregivers, and making care more accessible and efficient for everyone involved." AI works best when it supports clinical judgment rather than replacing it. The future depends on clear boundaries: algorithms excel at pattern recognition and data synthesis, while human clinicians remain essential for interpreting context, navigating ambiguity, and making final decisions with patients.

How will AI enable personalized medicine and early detection?

The biggest breakthroughs ahead will come from AI-powered personalized medicine and preventive care. With access to genetics, smartwatch data, and other patient information, AI can identify which treatments work best for individual patients and spot issues before they become urgent. "It's not possible for humans to absorb all of that information at once," Shahshahani explains. "But that's exactly what AI can do: sift through years of data and identify what's most important for that individual patient."

What role will remote monitoring and automation play?

Remote patient monitoring systems track vital signs continuously, flagging small changes that may indicate sepsis, heart failure, or diabetic complications hours before traditional symptoms appear. Workflow automation handles administrative tasks such as appointment scheduling, insurance verification, and follow-up reminders, freeing clinicians to focus on relationship-building and sound decision-making. The goal is to create space for the parts of medicine that require empathy, nuance, and the ability to sit with uncertainty.

Why does responsible AI implementation matter in healthcare?

Responsible use matters because the stakes are high. Researchers have raised ethical concerns about AI in healthcare, calling for stronger protections around patient privacy and more responsible data use. The World Health Organization and others have advised caution when using large language models like ChatGPT, Gemini, and Perplexity.ai for medical guidance.

These tools aren't built or approved for medical use and often make mistakes, introducing errors that can harm patients. "They're scanning public information, not your personal health history," Shahshahani clarifies. "Always bring your concerns to a doctor who can interpret the full picture and make safe, informed decisions with you."

What governance challenges do healthcare organizations face with AI?

Healthcare organizations face unpredictable behavior in AI systems, where outputs include margins of error similar to human judgment rather than the predictable logic of traditional IT systems. Current safeguards can be circumvented through social engineering. Governance requires more than technical safeguards: it demands cross-functional collaboration where legal, risk, and operations teams define acceptable AI behavior while keeping pace with evolving regulations. Traceable audit trails for every AI-generated output (who requested it, which model, data sources, and post-processing steps) are essential for regulatory scrutiny and accountability.
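
One way to picture a traceable audit trail is a fixed record written for every AI-generated output. The sketch below is an assumption about shape, not a compliance standard: the field names mirror the items listed above (who requested it, which model, data sources, post-processing steps), and the service and model names are placeholders.

```python
import json
from dataclasses import dataclass, asdict, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditRecord:
    requested_by: str       # who requested the output
    model: str              # which model produced it
    data_sources: tuple     # what data the model was given
    post_processing: tuple  # steps applied after generation
    output_text: str
    created_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self) -> str:
        """Serialize the record as one JSON line for an append-only log."""
        return json.dumps(asdict(self), sort_keys=True)


record = AuditRecord(
    requested_by="scheduler-service",
    model="example-model-v1",
    data_sources=("appointments_db",),
    post_processing=("phi_redaction",),
    output_text="Your appointment is confirmed.",
)
print(record.to_json())
```

Making the record immutable (`frozen=True`) and writing it as a single line are small choices that make later tampering or partial edits easier to spot.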

How can healthcare systems maintain compliance while scaling patient communication?

When healthcare systems automate patient communication at scale, maintaining compliance while preserving conversation quality becomes critical. Conversational AI platforms enable organizations to handle high-volume phone calls with sub-400ms latency and HIPAA-compliant infrastructure, ensuring every interaction meets the empathetic, patient-centric standards the industry demands. This approach makes automation viable for mission-critical use cases where every call counts.

The human endpoint

Your healthcare team remains your best and most trusted resource for your care. AI enables teams to focus on what they do best: getting to know you, understanding you, and making sound decisions with you. The future isn't about choosing between human expertise and technological capability. It's about designing systems where AI handles data synthesis and pattern recognition while clinicians bring context, judgment, and compassion to every decision. The question is no longer whether AI belongs in healthcare, but what responsible implementation looks like in practice.

See What AI-Powered Patient Communication Actually Looks Like

Responsible implementation solves real operational problems. Healthcare organizations face systemic bottlenecks: missed calls that lead to missed appointments, hold times that generate complaints, and support teams drowning in repetitive questions rather than handling complex cases. These affect both patient outcomes and staff retention.

🎯 Key Point: The critical difference is whether AI handles predictable conversations or tries to replace judgment calls. Conversational AI built for healthcare can answer appointment questions, route calls by urgency, and handle prescription refills instantly—without rigid phone trees or delayed voicemails. Our Bland platform offers self-hosted, HIPAA-compliant infrastructure that keeps patient data under organizational control while reducing the operational load that burns out support teams. This lets AI handle volume so staff focus on conversations where empathy and context matter.

"Healthcare organizations can reduce call handling time by up to 75% while maintaining HIPAA compliance through properly implemented AI voice agents." — Healthcare AI Implementation Report, 2024

💡 Best Practice: If your organization struggles with rising call volume or inconsistent response times, book a demo to see how AI voice agents deliver faster, scalable patient communication without sacrificing standards.

⚠️ Warning: The key is ensuring AI handles routine tasks while preserving human oversight for complex medical decisions and sensitive patient interactions.
