A customer reaches out at 2 AM with an urgent issue, and an AI agent confidently provides an incorrect answer. The customer gets stuck in an endless loop, unable to reach a human when the situation clearly requires one. The AI agent challenge in customer support extends far beyond simply implementing technology: it involves building systems that maintain accuracy across thousands of conversations, escalate gracefully when needed, and earn customer trust rather than eroding it.

Modern AI platforms address these obstacles by learning from actual customer interactions, improving response accuracy over time, and seamlessly transferring complex issues to human agents when appropriate. The right solution builds customer confidence through consistent, reliable interactions that feel natural and helpful. Companies looking to scale their support operations without typical growing pains can leverage advanced conversational AI to transform these challenges into competitive advantages.

Table of Contents

- Why AI Agents Struggle in Real Customer Support Environments

- 11 Key AI Agent Challenges in Customer Support Faced by Businesses During Deployment

- How to Overcome AI Agent Challenges and Deploy Reliable Support Systems

- AI Agent Failures in Customer Support Are Usually System Failures, Not Model Failures

Summary

- Enterprise AI agent deployments fail not because the technology lacks sophistication but because real customer conversations don't follow predictable patterns. According to a late-2023 Gartner survey, 83% of service leaders reported plans to invest in generative AI or were already doing so, yet most organizations remain stuck in early deployment stages. The gap between controlled testing environments and actual support tickets is where 95% of enterprise AI implementations collapse.

- Speed expectations create immediate failure points when AI agents can't scale under load. Ninety percent of consumers expect immediate responses to service inquiries, particularly on chat and messaging platforms. Delta Air Lines achieved an 85% reduction in response times by integrating Twitter and Facebook directly into their support infrastructure with robust feedback loops and channel integration. The lesson isn't just about speed but about building systems that maintain performance when thousands of simultaneous conversations hit the platform.

- Data security failures in AI systems cause legal and reputational damage, forcing rollbacks. The 2024 Ticketmaster breach exploited a third-party cloud provider to access 1.3 terabytes of sensitive customer data, went undetected for nearly seven weeks, and affected over 40 million users. According to the Cisco 2024 Data Privacy Benchmark Study, 91% of organizations agree they need to do more to reassure customers that their data is used only for intended and legitimate purposes when it comes to AI.

- Budget underestimation kills 37% of automation projects, according to Kissflow research. Mission Produce's 2021 ERP rollout ran nearly $4 million over budget due to underestimated complexity, reduced visibility into key metrics, a return to manual processes, and a nine-month delay. Pilots show promise in controlled conditions, but when companies try to roll out across multiple regions, languages, or product lines, engineering support, computing infrastructure, and licensing costs all scale faster than expected.

- Most AI agent failures aren't model failures but system failures rooted in poor integration architecture. When agents lack access to live CRM data, can't trigger proper escalation workflows, or respond without understanding ticket history, the model itself might be working perfectly while the infrastructure around it breaks down. One team reduced incorrect responses by 60% after switching from pure generative responses to a retrieval-first design that referenced real resolution patterns from past tickets instead of inventing solutions.

- Conversational AI addresses this by deploying real-time voice agents that maintain conversation history, trigger escalations based on sentiment and complexity thresholds, and automatically update support systems, rather than operating as isolated components alongside existing workflows.

Why AI Agents Struggle in Real Customer Support Environments

AI agents fail in customer support because real conversations don't follow demo scripts. Customers arrive frustrated, skip context, switch topics mid-sentence, and expect the system to understand what they mean rather than what they say.

🎯 Key Point: The gap between controlled demos and real-world chaos is where most AI customer support systems break down completely.

"Real customer conversations are messy, emotional, and unpredictable - everything that AI agents are not designed to handle effectively." — Customer Experience Research, 2024

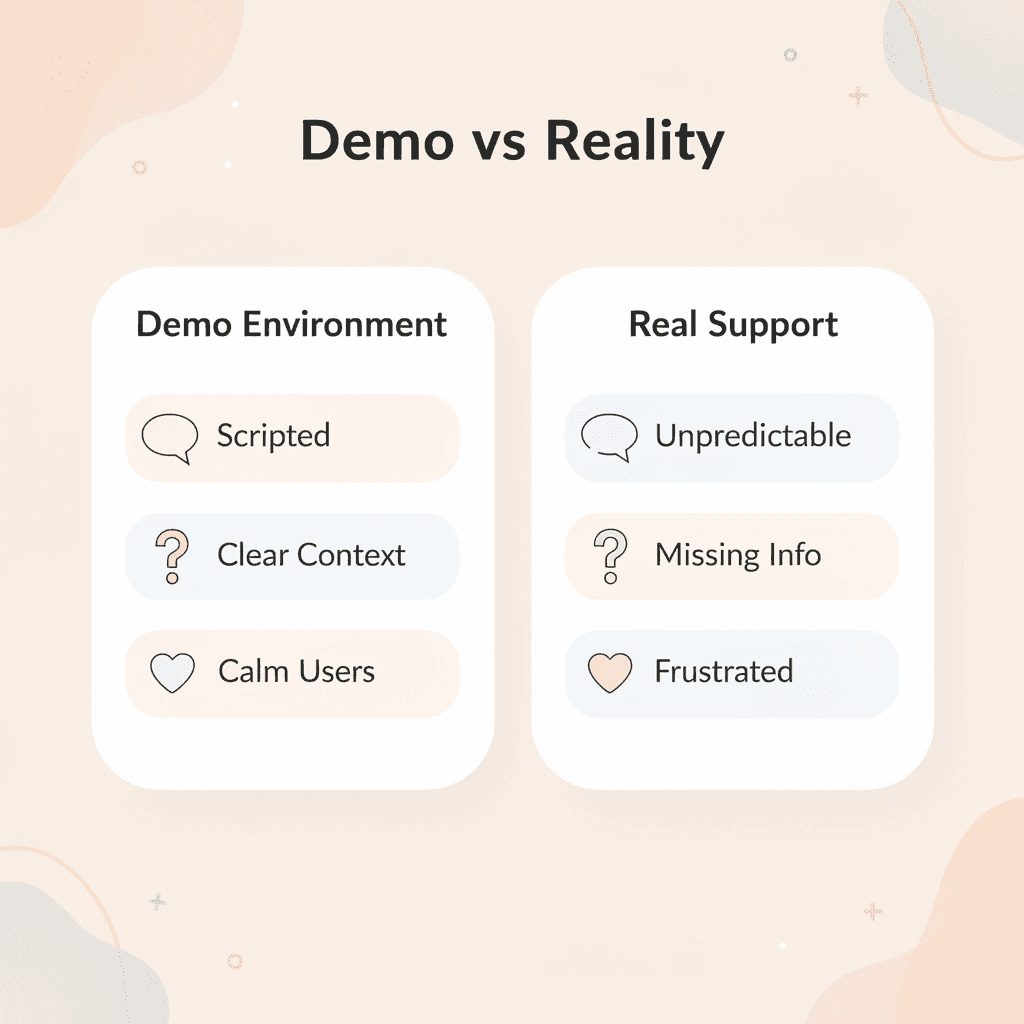

| Demo environment | Real customer support |
| --- | --- |
| Scripted scenarios | Unpredictable conversations |
| Clear, complete context provided | Missing or incomplete information |
| Calm, logical customers | Frustrated or emotional users |
| Single-topic conversations | Multiple issues at the same time |

⚠️ Warning: AI agents trained on perfect scenarios will consistently fail when customers deviate from expected conversation patterns, leading to greater frustration and higher support ticket volume.

What causes the gap between testing and real-world performance?

The gap between controlled testing environments and actual support tickets is where 95% of enterprise AI implementations fail.

How widespread is AI investment in customer service?

According to a late 2023 Gartner survey, 83% of service leaders said their companies plan to invest in generative AI or are already doing so. Most organizations remain in early adoption stages: their AI performs well in testing but fails when real customers use it.

What happens when AI meets messy customer queries?

Demonstrations show AI handling clean queries like "What's my order status?" or "How do I reset my password?" Real customers don't work this way. They start with "I've been charged twice and nobody's helping me and I need this fixed NOW" while asking about a return policy and mentioning a problem from three months ago.

Why do AI systems struggle with emotional complexity?

An AI trained on clean datasets encounters emotional intensity, unclear pronouns, incomplete information, and requests spanning multiple systems. It pattern-matches keywords, finds "charged twice," and launches a billing script while ignoring the urgency, the history, and the actual request buried in the frustration. The customer repeats themselves. The AI repeats itself. Eight interactions later, nothing is resolved.

What causes conversational amnesia in AI systems?

Most implementations fail in predictable ways. Context disappears between messages because the system treats each interaction as separate, creating conversational amnesia. A customer explains their situation in message one, the AI asks a clarifying question, and by message three, it has forgotten the opening. This reflects a fundamental architectural problem: the absence of persistent memory to track what has been said, confirmed, or attempted.
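As a rough illustration of the persistent memory described above, the sketch below keeps what has been said, confirmed, and attempted in one object that travels with the conversation. The class and field names (`ConversationState`, `confirmed_facts`, `attempted_fixes`) are hypothetical and chosen only for this example; a real system would persist this state in a database or session store.

```python
from dataclasses import dataclass, field


@dataclass
class ConversationState:
    """Hypothetical per-conversation memory; all names are illustrative."""
    customer_id: str
    messages: list[str] = field(default_factory=list)          # what the customer has said
    confirmed_facts: dict[str, str] = field(default_factory=dict)  # details already established
    attempted_fixes: list[str] = field(default_factory=list)   # solutions already tried

    def record_message(self, text: str) -> None:
        self.messages.append(text)

    def confirm(self, key: str, value: str) -> None:
        self.confirmed_facts[key] = value

    def already_tried(self, fix: str) -> bool:
        return fix in self.attempted_fixes


# Example: by message three, the opening details are still available.
state = ConversationState(customer_id="c-123")
state.record_message("I was charged twice for order 881.")
state.confirm("order_id", "881")
state.attempted_fixes.append("resend_receipt")
```

Because every turn reads from and writes to the same state, the agent can check `already_tried` before suggesting a fix again instead of asking the customer to start over.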

Why do AI agents get stuck in reasoning loops?

The second failure point is reasoning capability. The AI doesn't think about what should happen next—it matches patterns and retrieves templates. When a customer says, "I don't have portal access," a reasoning system would update its model and change strategy. Instead, most agents detect the keyword "portal" and explain how to use it, creating loops that feel deliberately confusing, even though they mechanically follow pattern-matching logic.

How does limited execution capability affect customer outcomes?

Execution represents the third gap. The AI can explain what needs to happen, but cannot make it happen. It cannot check the customer's actual access status, retrieve their invoice from billing, or create the access request needed to resolve the problem. It generates text about actions rather than taking them, leaving customers with instructions they cannot follow and problems that remain unresolved.

How do deflection metrics mask actual customer service problems?

A 75% deflection rate sounds impressive until you examine what's being deflected. The metric counts any interaction without human agent involvement, treating "customer gave up in frustration" identically to "customer's problem was solved." IBM Think projects that 90% of customer service interactions will be handled by AI agents by 2026, but that projection overlooks the gap between handling interactions and resolving them.

Teams optimize for response speed and coverage while ignoring resolution rate and escalation intelligence. The AI answers quickly, covers a wide range of topics, and maintains a professional tone while blocking customers from reaching solutions. It uses compute resources to prevent people from completing payments, reporting problems, or accessing services they've already purchased. The ROI calculation looks positive on deflection metrics while creating negative value in customer experience and actual business outcomes.
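The difference between deflecting and resolving can be made concrete with a small calculation. The sketch below is a minimal example with made-up data; the `Conversation` fields and both function names are assumptions for illustration, not a standard metric definition.

```python
from dataclasses import dataclass


@dataclass
class Conversation:
    escalated_to_human: bool  # a human agent got involved
    problem_resolved: bool    # the customer's issue was actually fixed


def deflection_rate(conversations: list[Conversation]) -> float:
    """Share of conversations with no human involvement, resolved or not."""
    if not conversations:
        return 0.0
    deflected = [c for c in conversations if not c.escalated_to_human]
    return len(deflected) / len(conversations)


def ai_resolution_rate(conversations: list[Conversation]) -> float:
    """Share of conversations the AI resolved without human involvement."""
    if not conversations:
        return 0.0
    resolved = [c for c in conversations
                if not c.escalated_to_human and c.problem_resolved]
    return len(resolved) / len(conversations)


# Made-up sample: three of four conversations never reached a human,
# but only one of those three was actually resolved.
sample = [
    Conversation(escalated_to_human=False, problem_resolved=True),
    Conversation(escalated_to_human=False, problem_resolved=False),  # customer gave up
    Conversation(escalated_to_human=False, problem_resolved=False),  # customer gave up
    Conversation(escalated_to_human=True, problem_resolved=True),
]
```

On this sample, `deflection_rate(sample)` is 0.75 while `ai_resolution_rate(sample)` is 0.25, which is the kind of gap a deflection-only dashboard hides.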

What architectural changes solve these fundamental issues?

Modern conversational AI platforms solve these problems by maintaining organized context across conversations, using reasoning layers that plan responses based on what's happening, and connecting to systems that complete tasks rather than discuss them. The difference lies not in how sophisticated the language sounds but in how the system is built: problem-solving systems versus systems designed to sound coherent. Understanding why AI agents fail reveals patterns common to every project, and those patterns have names.


11 Key AI Agent Challenges in Customer Support Faced by Businesses During Deployment

When AI agents move from pilot programs into production, serving thousands of daily interactions, the limitations seen in testing become real failures. They create customer frustration, trigger compliance risks, and force support teams to intervene manually, precisely when automation should help.

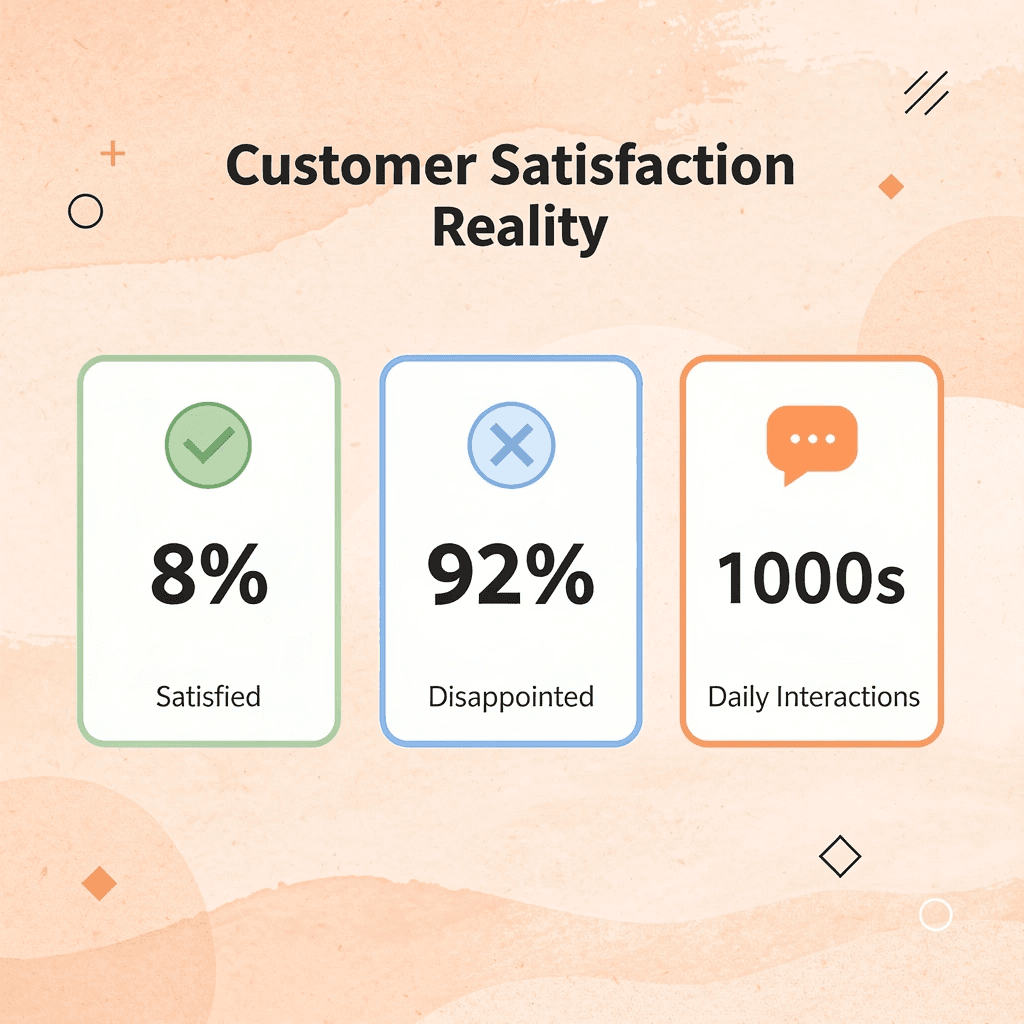

According to a McKinsey analysis of digital integration in customer service, only 8% of North American respondents report greater-than-expected satisfaction with their customer performance. This gap between promise and delivery explains why many companies roll back deployments or limit them to low-risk scenarios after initial trials.


🔑 Key Takeaway: With only 8% of respondents reporting greater-than-expected satisfaction, most AI agent deployments fall short of expectations despite successful pilot programs.

These failures cluster around specific architectural weaknesses, integration gaps, and organizational misalignments that every implementation encounters.

⚠️ Warning: Deployment failures aren't random. They follow predictable patterns that can be identified and mitigated before full-scale rollout.

1. Rigid Interactions That Feel Robotic

Customers want to feel understood, not merely receive answers. When AI agents respond with scripted language regardless of situation or tone, interactions feel robotic. A Zendesk CX Trends report found that 76% of customers expect personalized support, yet most AI systems rely on keyword matching and pre-written responses that ignore the customer's actual feelings.

How do rigid responses worsen customer frustration?

The problem worsens when customers show frustration. Agents unable to notice rising tension or adapt their communication create slower fixes and more work—the opposite of what automation promised. Overreliance on templates, poor contextual handling, and lack of emotional intelligence transform helpful automation into a barrier between customers and solutions.

2. Failing to Keep Up With Response Time Expectations

Ninety percent of consumers expect immediate responses to service questions on chat and messaging platforms. If your AI agent cannot handle multiple conversations simultaneously during busy hours or retrieve information faster than a person, customer satisfaction suffers. The system must scale efficiently, prioritize urgent questions correctly, and handle complex issues without slowdowns. Delta Air Lines integrated Twitter and Facebook directly into its support system to respond in real time on the channels where customers were already active. By building strong feedback loops and channel integration, the airline achieved an 85% reduction in response times.

3. Weak Safeguards Around User Data

Every customer interaction processed by an AI agent involves personal information: account details, purchase history, payment data, and support tickets. Without real-time monitoring, access controls, or audit trails, the risk is significant. According to the Cisco 2024 Data Privacy Benchmark Study, 91% of organizations agree they must do more to reassure customers that their data is used only for intended and legitimate purposes in AI applications.

What happens when data protection fails?

The 2024 Ticketmaster breach demonstrates the consequences of failed safety systems. Attackers exploited a third-party cloud provider to access 1.3 terabytes of sensitive customer data, including credit card information. The breach remained undetected for nearly seven weeks, affected over 40 million users, and triggered lawsuits and regulatory action. Weak monitoring and delayed response in automated systems erode the customer trust essential to support interactions.

4. Hidden Biases and Unintended Outcomes

AI agents trained on narrow or flawed datasets produce skewed responses that seem reasonable but lead to unfair outcomes. In sensitive interactions like refund decisions, escalation routing, or account access, even minor biases in how the system interprets customer language or prioritizes requests can trigger dissatisfaction that spreads quickly as customers compare experiences and notice patterns.

How do biases compound over time in AI systems?

Amazon's AI recruiting tool demonstrates how bias accumulates in machine learning systems. Trained on ten years of resumes predominantly from men, the system downgraded applications from women and ranked candidates from all-female colleges lower. Despite Amazon's attempts to correct the problem, the bias persisted, ultimately forcing the company to abandon the tool entirely. Customer support systems can inherit bias when agents learn from historical data reflecting past unfair treatment rather than optimal outcomes. Without careful review and ongoing monitoring, these biases remain hidden until they damage customer perception and brand reputation.

5. Poor Fit With Existing Tools and Systems

Most companies run on legacy databases, custom CRM platforms, and off-the-shelf software never designed to work together. When an AI agent cannot connect smoothly with these systems, it creates broken workflows: duplicate tickets, incomplete customer histories, routing errors, and teams spending more time fixing integration problems than serving customers. Patchwork integrations built through middleware or custom APIs drain IT resources. Every system update risks breaking connections; every new feature requires rework. Technical debt accumulates until maintaining the AI agent costs more than it delivers.

6. Employees Resisting the Shift

Adding automation to customer support can frighten support agents worried about job loss and managers overwhelmed by new tools. People resist when excluded from decisions or unable to see immediate benefits. Even the best technology fails or gets abandoned when perceived as a threat. Good change management demonstrates how automation handles repetitive questions, freeing agents to tackle complex problems requiring human judgment. Help teams understand that their skills become more valuable when routine work is automated. Without this foundation, internal resistance will derail the rollout before customers benefit.

7. Lack of Clear Ownership of Performance

Automation isn't a "set and forget" system. AI agents require constant tuning, feedback, and updates as customer needs and products evolve. Many companies fail to assign clear ownership for performance reviews, quality checks, or retraining protocols, resulting in stagnant performance where agents handle familiar questions well but never improve or adapt. Without clear ownership, accountability gaps emerge. When resolution times increase or satisfaction drops, no one knows who's responsible for fixing it. Quality deteriorates until the system becomes problematic, especially in complex situations where ongoing refinement determines success.

8. Choosing the Wrong Platform

Not all AI solutions grow with your business or integrate with your operations. Some lack critical features such as handling edge cases, supporting multiple languages, or connecting with existing tools. Others cannot adapt as your needs evolve. Adopting a tool without first verifying it meets your requirements leaves your team with a poor fit that requires replacement.

How can poor platform choices create legal and financial risks?

The Air Canada chatbot case, decided by a tribunal in 2024, illustrates this risk. The bot provided incorrect information about bereavement refunds, leading to a denied claim and a legal complaint. The tribunal ruled that Air Canada was responsible for what its chatbot said and ordered the airline to compensate the customer. When a platform lacks proper fit and oversight, it can cause legal, financial, and reputational damage, which can force a company to discontinue the chatbot and limit future adoption.

9. Steep Learning Curve for Enterprise Teams

Even well-designed AI platforms take time to learn. Operations teams, support staff, and engineers struggle without adequate documentation, training, and clear internal ownership. This delays adoption and reduces productivity, particularly when teams are already managing existing workloads. Teams need to learn how to change conversation flows, update knowledge bases, monitor performance metrics, and fix problems when things break. Without these skills, the team remains dependent on the vendor for basic changes, creating bottlenecks that prevent the system from scaling and adapting to business needs.

10. Not Budgeting Enough for Scale

Pilots work in controlled conditions with limited scope. Rolling out across multiple regions, languages, or product lines exposes resource constraints: engineering support, computing infrastructure, and licensing costs scale faster than expected. According to Kissflow, 37% of automation projects fail due to unbudgeted implementation costs.

What happens when scaling costs are underestimated?

Mission Produce's 2021 ERP rollout exemplifies this pattern. The project aimed to support global growth but cost nearly $4 million more than planned due to underestimated complexity. The company lost visibility of key metrics, reverted to manual processes, and faced a nine-month delay. Failing to plan for growth can halt automation efforts entirely, forcing teams to choose between abandoning the project and diverting resources from other important work.

How can teams identify scaling challenges early?

Teams using platforms like conversational AI find that live demonstrations expose scaling challenges before full deployment. When decision-makers interact with the system in realistic scenarios, they understand infrastructure requirements, integration complexity, and training needs that abstract specifications cannot reveal.

11. Difficulty in Measuring Real Impact

Many teams launch AI agents without clear frameworks for tracking performance, leading to confusion about whether the system improves resolution times, deflects tickets, or enhances customer satisfaction. Without defined metrics and ownership, automation investments lack accountability. The measurement challenge extends beyond counting numbers. Teams need to understand which types of questions the agent handles well, where it fails consistently, how customers feel about interactions, and whether human agents spend less time on routine issues. Without this visibility, you cannot identify opportunities for improvement or justify continued investment. Solving these challenges requires rethinking how AI agents are designed, integrated, and managed throughout their lifecycle.


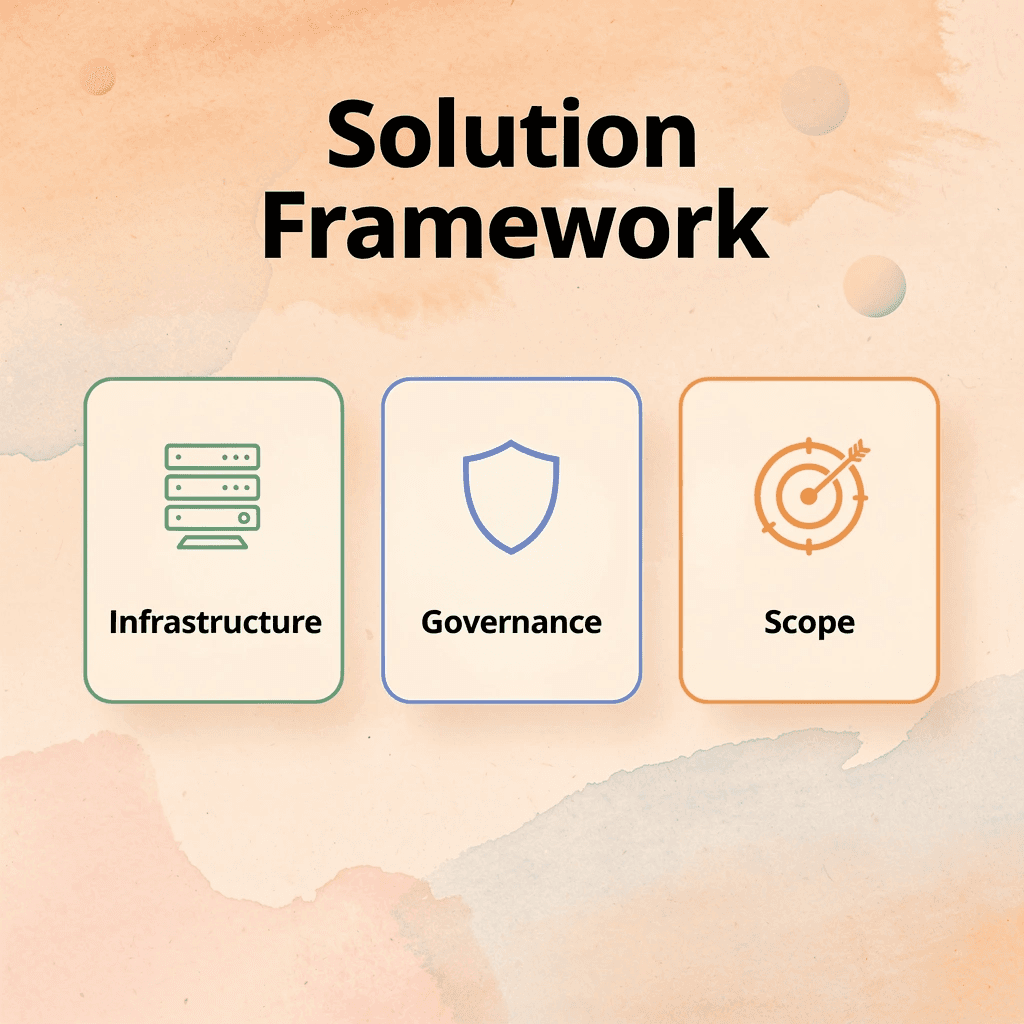

How to Overcome AI Agent Challenges and Deploy Reliable Support Systems

Successful AI agent deployment depends on infrastructure, governance, and scope management, not model sophistication. The difference between agents that fail and those that scale comes down to system design.

🎯 Key Point: The most sophisticated AI models will fail without proper infrastructure foundations and clear governance frameworks. Focus on system architecture before optimizing model performance.

"The difference between agents that fail and those that scale comes down to system design and operational readiness, not just model capabilities." — AI Implementation Research, 2024

Key challenges and how to address them

| Challenge | Solution approach | Key focus |
| --- | --- | --- |
| Infrastructure | Scalable architecture | Performance monitoring |
| Governance | Clear protocols | Decision boundaries |
| Scope Management | Defined limitations | Gradual expansion |

⚠️ Warning: Many organizations rush to deploy advanced AI agents without establishing proper monitoring systems or fallback procedures. This approach inevitably leads to system failures and user frustration.

Design escalation as a core system function

Agents must recognize their capability boundaries and transfer context to humans without forcing customers to repeat themselves. According to LinkedIn research by Saboor Ahmed, 80% of AI agent projects fail to move beyond the pilot stage because escalation pathways weren't designed as fundamental architecture from day one. Build routing logic that triggers when uncertainty thresholds, sentiment flags, or query complexity scores are crossed. Handoffs should include full conversation history, detected intent, and attempted solutions so human agents start with context.
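A minimal sketch of that routing logic might look like the following. It assumes that any single signal crossing its threshold is enough to trigger a handoff, and the threshold values, class names, and signal scales are all illustrative rather than taken from any particular platform.

```python
from dataclasses import dataclass
from typing import Optional

# Illustrative thresholds; real values would need tuning against your own data.
MIN_CONFIDENCE = 0.6   # below this, the agent is too unsure of its answer
MIN_SENTIMENT = -0.5   # below this, the customer is clearly frustrated
MAX_COMPLEXITY = 0.8   # above this, the query is too complex to automate


@dataclass
class TurnSignals:
    confidence: float  # 0..1
    sentiment: float   # -1..1, negative means frustrated
    complexity: float  # 0..1


@dataclass
class Handoff:
    history: list[str]              # full conversation so far
    detected_intent: str
    attempted_solutions: list[str]
    reasons: list[str]              # why the agent escalated


def escalation_reasons(signals: TurnSignals) -> list[str]:
    reasons = []
    if signals.confidence < MIN_CONFIDENCE:
        reasons.append("low_confidence")
    if signals.sentiment < MIN_SENTIMENT:
        reasons.append("negative_sentiment")
    if signals.complexity > MAX_COMPLEXITY:
        reasons.append("high_complexity")
    return reasons


def build_handoff(signals: TurnSignals, history: list[str], intent: str,
                  attempted: list[str]) -> Optional[Handoff]:
    """Return a context-carrying handoff if any signal trips, else None."""
    reasons = escalation_reasons(signals)
    if not reasons:
        return None
    return Handoff(list(history), intent, list(attempted), reasons)
```

For example, `build_handoff(TurnSignals(0.9, -0.8, 0.3), history, "billing_dispute", ["resend_receipt"])` returns a `Handoff` whose `reasons` list contains `"negative_sentiment"`, so the human agent sees both why the conversation arrived and what was already tried.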

Ground AI in real support data

Hallucination rates drop when agents draw on verified knowledge bases rather than generating answers from training data alone. Connect your AI to your product documentation, support ticket resolutions, and FAQ databases. Retrieval-augmented generation (RAG) architectures let agents cite specific sources, keeping answers current as your product evolves. One team reduced incorrect responses by 60% after switching to a retrieval-first design because the system stopped inventing solutions and started referencing real resolution patterns from past tickets.
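To show the shape of a retrieval-first design, here is a toy sketch that answers only from past resolutions and escalates when nothing matches. Simple word overlap stands in for the embedding search a production RAG system would more likely use, and the ticket data, IDs, and function names are all hypothetical.

```python
import re

# Hypothetical resolved tickets; in practice these would come from your ticketing system.
PAST_RESOLUTIONS = [
    {"id": "T-101", "issue": "charged twice for one order",
     "resolution": "Refund the duplicate charge from the billing console."},
    {"id": "T-102", "issue": "password reset email never arrives",
     "resolution": "Check the spam folder, then resend the reset link."},
]


def tokens(text: str) -> set[str]:
    """Lowercase the text and split it into a set of alphabetic words."""
    return set(re.findall(r"[a-z]+", text.lower()))


def retrieve(query: str, min_overlap: int = 2):
    """Return the past ticket sharing the most words with the query, or None."""
    query_words = tokens(query)
    best, best_score = None, 0
    for ticket in PAST_RESOLUTIONS:
        score = len(query_words & tokens(ticket["issue"]))
        if score > best_score:
            best, best_score = ticket, score
    return best if best_score >= min_overlap else None


def answer(query: str) -> str:
    """Answer only from a retrieved resolution and cite it; otherwise escalate."""
    ticket = retrieve(query)
    if ticket is None:
        return "ESCALATE: no verified resolution found"
    return f"{ticket['resolution']} (source: {ticket['id']})"
```

The design choice worth noting is the `None` branch: when retrieval finds nothing above the overlap threshold, the agent declines to answer instead of generating one, which is what keeps it from inventing solutions.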

Integrate deeply with CRM and ticketing systems

Knowing what happened in past interactions prevents customers from repeating information. When agents can see previous purchases, open support tickets, past conversations, and account details before responding, they provide specific, helpful answers rather than generic ones. Tools like conversational AI integrate with company systems to track conversations across channels, so a customer who starts in chat and switches to a phone call loses no information during the transfer. This seamless handoff transforms AI from a first-line filter into a genuine support tool.

Limit AI scope based on complexity tiers

Organize support requests by difficulty level. Route simple, common questions—password resets, order tracking, and account updates—to automated agents. Route complex issues—billing disputes, technical troubleshooting, and account escalations—to human teams immediately. Clear boundaries prevent agents from attempting tasks beyond their capabilities, ensuring reliable answers and predictable operational costs.
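The tiering above can be expressed as a small routing function. This is a sketch under stated assumptions: the intent labels are invented for illustration, and sending unrecognized intents to humans is a conservative default chosen here, not something the tiers themselves require.

```python
from enum import Enum


class Route(Enum):
    AI_AGENT = "ai_agent"
    HUMAN_TEAM = "human_team"


# Illustrative intent labels mirroring the simple tier described above.
SIMPLE_INTENTS = {"password_reset", "order_tracking", "account_update"}


def route(intent: str) -> Route:
    """Send simple, common intents to the AI agent and everything else to humans."""
    if intent in SIMPLE_INTENTS:
        return Route.AI_AGENT
    # Complex intents (billing disputes, troubleshooting, escalations) and
    # unrecognized intents both go to humans: unknown is treated as out of scope.
    return Route.HUMAN_TEAM
```

Keeping the allow-list explicit means the agent's scope only grows when someone deliberately adds an intent to `SIMPLE_INTENTS`.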

Continuously audit failure cases

Track every escalation, "I want to speak to a human" request, and conversation where sentiment turned negative. These failure cases reveal gaps in training data, prompt design, or knowledge base. Monthly audits of misrouted queries, incorrect answers, and abandoned conversations create a roadmap for improvements. One support team discovered that their agent failed on return-policy questions for products purchased over 30 days ago because the knowledge base covered only standard returns, leaving edge cases that accounted for 15% of actual inquiries.
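A periodic audit like this can start as a simple count of failure outcomes per topic. The sketch below uses a made-up conversation log; the field names, topic labels, and outcome categories are assumptions for illustration.

```python
from collections import Counter

# Hypothetical conversation log entries; field names are assumptions.
LOG = [
    {"topic": "returns_over_30_days", "outcome": "escalated"},
    {"topic": "returns_over_30_days", "outcome": "abandoned"},
    {"topic": "order_tracking", "outcome": "resolved"},
    {"topic": "password_reset", "outcome": "resolved"},
    {"topic": "returns_over_30_days", "outcome": "negative_sentiment"},
    {"topic": "billing", "outcome": "escalated"},
]

# Outcomes counted as failures for audit purposes.
FAILURE_OUTCOMES = {"escalated", "abandoned", "negative_sentiment", "incorrect_answer"}


def failure_hotspots(log, top_n=3):
    """Count failure outcomes per topic and return the most frequent topics."""
    failures = Counter(
        entry["topic"] for entry in log if entry["outcome"] in FAILURE_OUTCOMES
    )
    return failures.most_common(top_n)
```

On this sample log, the late-returns topic surfaces at the top of `failure_hotspots(LOG)` with three failures, pointing the team toward a knowledge base gap of the kind described above.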

Reliable AI agents aren't autonomous replacements; they're tightly governed systems embedded into support infrastructure, designed to handle specific tasks within defined boundaries while knowing when to escalate. But even a perfect system design cannot save an agent if underlying failure modes aren't understood.

Where do most AI agent failures actually occur?

- User interactions

- Emotional intelligence

- Complexity management

- Knowledge retrieval

- System integration

But here's what most teams miss: the failures aren't where you think they are.


AI Agent Failures in Customer Support Are Usually System Failures, Not Model Failures

Real failures happen in the space between the AI and the support infrastructure it operates within. When agents lack access to live CRM data, cannot trigger escalation workflows, or respond without understanding ticket history, the model itself might be working perfectly. The system around it breaks down, manifesting as poor customer experience but rooted in architecture, not algorithms.

Most teams layer AI on top of existing support tools without rethinking how those tools communicate. The agent becomes isolated: it can answer questions but can't update records, route complex issues intelligently, or maintain context across channels. As volume increases, these disconnected systems create more manual work than they eliminate. Support teams spend time fixing what the AI couldn't access rather than solving what it couldn't understand.

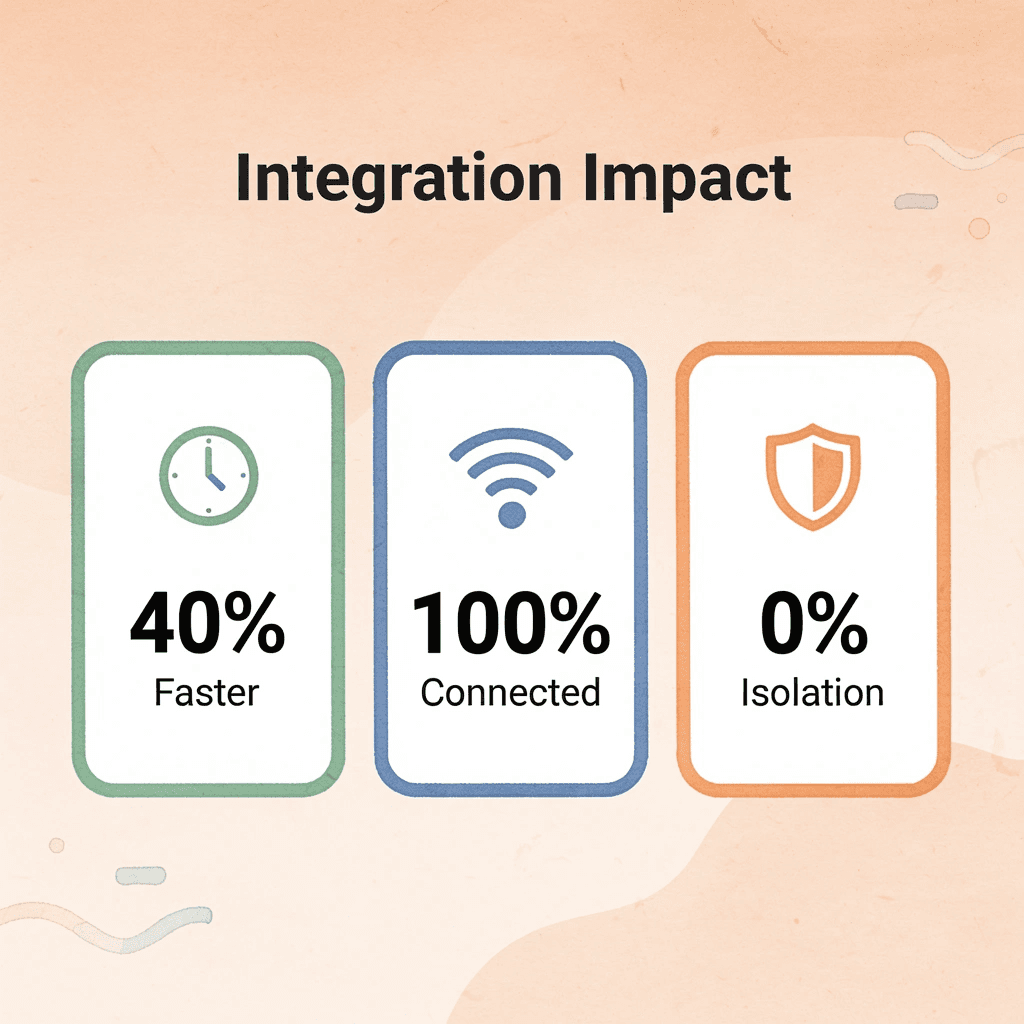

"Teams report resolution times dropping by 40% or more when the AI can actually act on what it learns." — Pylon Customer Support Guide, 2024

Modern support infrastructure is shifting toward fully integrated conversational systems that operate inside existing workflows. Our conversational AI deploys real-time voice agents that maintain conversation history, trigger escalations based on sentiment and complexity thresholds, and automatically update support systems. Teams report resolution times dropping by 40% or more when the AI can act on what it learns.

🔑 Key Takeaway: The difference between AI success and AI failure in customer support isn't about the intelligence of the model—it's about how well the entire system is designed to let that intelligence actually work.

Instead of repeating information across channels or waiting while agents manually search for context, customers receive responses reflecting their full history and emotional state. The AI knows when to solve, when to route, and when to preserve details for the human taking over. This consistency requires a system design built for it from the start.

⚠️ Warning: Layering AI agents onto disconnected support tools without integration creates more manual work, not less. The AI becomes isolated and can't access the real-time data it needs to provide effective support.