A customer reaches out at 2 AM with an urgent question and receives an accurate, helpful response in seconds rather than waiting until morning. Knowing how to use AI in customer service turns this scenario from wishful thinking into an everyday reality. The real challenge isn't just implementing the technology but doing so in a way that speeds up responses, maintains customer trust, and keeps routine issues from escalating to human agents unnecessarily. Success requires practical strategies that integrate AI without sacrificing the quality customers expect.

Modern AI solutions understand context, adapt to different situations, and handle complex queries while knowing when to involve human team members. This approach delivers faster resolution times and creates positive experiences where customers feel heard rather than processed. The result is a customer service operation that runs efficiently around the clock without compromising on quality or authenticity. Businesses looking to implement this balanced approach can explore Bland's conversational AI solutions.

Summary

- Customer frustration with repetition costs more than time. According to Zendesk, 89% of customers get frustrated when they have to repeat their issues to multiple representatives, and when resolution requires three interactions instead of one, companies spend about $24 per customer while delivering what feels like failure. The repetition tax isn't about agent skill. It's about information that doesn't follow the customer through their journey, turning every handoff into a restart that burns trust and multiplies cost.

- Failed AI implementations happen because teams automate broken processes instead of redesigning for how the technology actually works. Chatbase research found that 70% of AI customer service implementations fail to meet expectations, usually because companies layer automation on top of existing inefficiencies, such as rigid scripts and unnecessary verification steps. The system inherits every flaw from the old model, then adds new failure modes. Customers experience the same friction delivered faster; handling times remain long, and promised cost savings never materialize because the AI executes fundamentally inefficient processes at scale.

- Training data quality determines AI performance more than the technology itself. When companies feed systems outdated knowledge base articles, chat logs full of agents' mistakes, or FAQ documents that answer questions nobody asks, the AI learns to replicate past errors with perfect consistency. According to Qualtrics, AI-powered customer service fails at a rate four times that of other tasks, largely because the gap between what customers need and what the system was trained to do becomes apparent within seconds of a real conversation. Someone asks about last month's policy change and receives the old answer because that's what the training data contained.

- Small businesses see results when they automate predictable volume, not when they solve complex problems. JPMorgan Chase Institute reports that 22% of small businesses currently use AI, and those achieving efficiency gains share a pattern: they route repeatable queries, such as order status checks and password resets, to automation while keeping humans focused on damaged-shipment claims and custom solutions that require judgment. Handle time for routine requests drops 60 to 70%, but this only works when teams have already documented common issues, defined escalation paths, and identified which requests follow consistent patterns versus which need human nuance.

- Testing against real scenarios prevents costly deployment failures. Generic demos show perfect conditions with clean data and simple questions, but actual customers interrupt mid-sentence, provide incomplete details, and describe problems using terminology that doesn't match documentation. One financial services team discovered that their AI handled account balance inquiries flawlessly but failed on fraud disputes because the training data lacked examples of emotionally charged conversations that require empathy and urgency. Running fifty test calls covering your full range of scenarios reveals whether workflows translate to automation or need restructuring first.

- Conversational AI addresses these failure modes by maintaining context across channels and recognizing when complexity signals require human escalation, so customers never hit a wall where the system can't help but won't transfer them to someone who can.

Why Your Customer Service Is Slower and More Expensive Than It Should Be

Your support team works harder than ever, yet customers wait longer for answers, and your per-interaction cost rises. The problem isn't effort or intent: traditional customer service models weren't built for today's volume and complexity. Adding more people to scale creates new problems instead of solving the old ones.

🎯 Key Point: The fundamental issue isn't your team's performance—it's that outdated service models can't handle modern customer expectations and volume demands.

"Traditional scaling methods in customer service often create more bottlenecks rather than solving existing efficiency problems." — Customer Service Operations Research, 2024

⚠️ Warning: Simply hiring more agents without addressing the underlying system inefficiencies will only multiply costs while maintaining the same slow response times.

Why do customers get frustrated with repeated explanations?

According to Zendesk, 89% of customers get frustrated when forced to repeat issues to multiple representatives. A customer calls in, explains their problem to the first agent, gets transferred, and repeats everything to the second agent, then possibly a third. Each handoff wastes time, erodes trust, and increases the cost of resolution. Your team isn't inefficient due to poor training: information simply doesn't follow the customer through the journey.

How does lost context impact service costs?

The same pattern appears in email and chat: agents lack information from past conversations and ask questions that customers have already answered. Each time a customer repeats an answer, handle time increases and costs rise. Research from Forrester shows the average cost per customer service interaction is $8.01. When customers need three interactions to resolve one problem because information is lost between agents, you're spending about $24 (three interactions at $8.01 each) while the customer experiences failure.
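The arithmetic is simple enough to rerun with your own numbers. Here is a minimal sketch, assuming the $8.01 Forrester average cited above as the default; the function name and the rounding to cents are illustrative choices, not part of any tool.

```python
# Cost of resolving one issue when lost context forces repeat contacts.
# The default is the Forrester average cited in the text; substitute your own figure.
COST_PER_INTERACTION = 8.01


def cost_per_resolution(interactions: int,
                        cost_per_interaction: float = COST_PER_INTERACTION) -> float:
    """Return the total spend to resolve one issue, rounded to cents."""
    if interactions < 1:
        raise ValueError("a resolution needs at least one interaction")
    return round(interactions * cost_per_interaction, 2)


one_touch = cost_per_resolution(1)    # resolved on first contact
three_touch = cost_per_resolution(3)  # customer explains the issue three times

print(f"One interaction:    ${one_touch:.2f}")
print(f"Three interactions: ${three_touch:.2f}")
print(f"Repetition tax:     ${three_touch - one_touch:.2f}")
```

Plugging in your real average interactions per resolution turns the "repetition tax" into a number you can track month to month.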

When Volume Breaks the System

Most teams handle growth by hiring more agents and extending hours. More people should mean faster responses, but coordination overhead increases faster than capacity. New agents require training, quality becomes inconsistent, and scheduling grows complex. A manager overseeing eight people now manages twenty, making ownership unclear.

Peak periods expose this weakness immediately. Black Friday triples ticket volume, pushing response times from two hours to two days. Temporary staff lack product knowledge and create follow-up tickets. Cost per resolution spikes precisely when revenue matters most, so you spend more to deliver worse experiences at the moments that shape customer perception.

Why do customers get more frustrated with automated support?

Here's what keeps happening across industries: companies invest in knowledge bases, chatbots, and self-service portals to reduce support tickets. Customers frustrated by rigid scripts and unhelpful suggestions then contact a human agent anyway, now annoyed and less patient. Support teams handle the same number of interactions, but every conversation starts with customer frustration.

What makes generic chatbots fail at understanding customer intent?

The failure point is usually specificity. Generic chatbots recognize keywords, not intent. A customer asking, "Why was I charged twice?" might mean a duplicate transaction, a misunderstood subscription renewal, or confusion about a pending authorization. Traditional automation treats these as identical. A human agent knows they're distinct problems requiring different solutions.

How does conversational AI handle complexity differently?

Solutions like conversational AI handle complexity by understanding context and adapting responses in real time. Rather than routing customers through decision trees, the technology processes natural language, asks clarifying questions, and resolves issues without forcing customers to repeat themselves. Teams using our conversational AI experience shorter handle times and improved customer satisfaction scores. But knowing automation exists doesn't explain why so many implementations fail to deliver results.

Why Most AI Customer Service Implementations Fail (And What It Is Costing You)

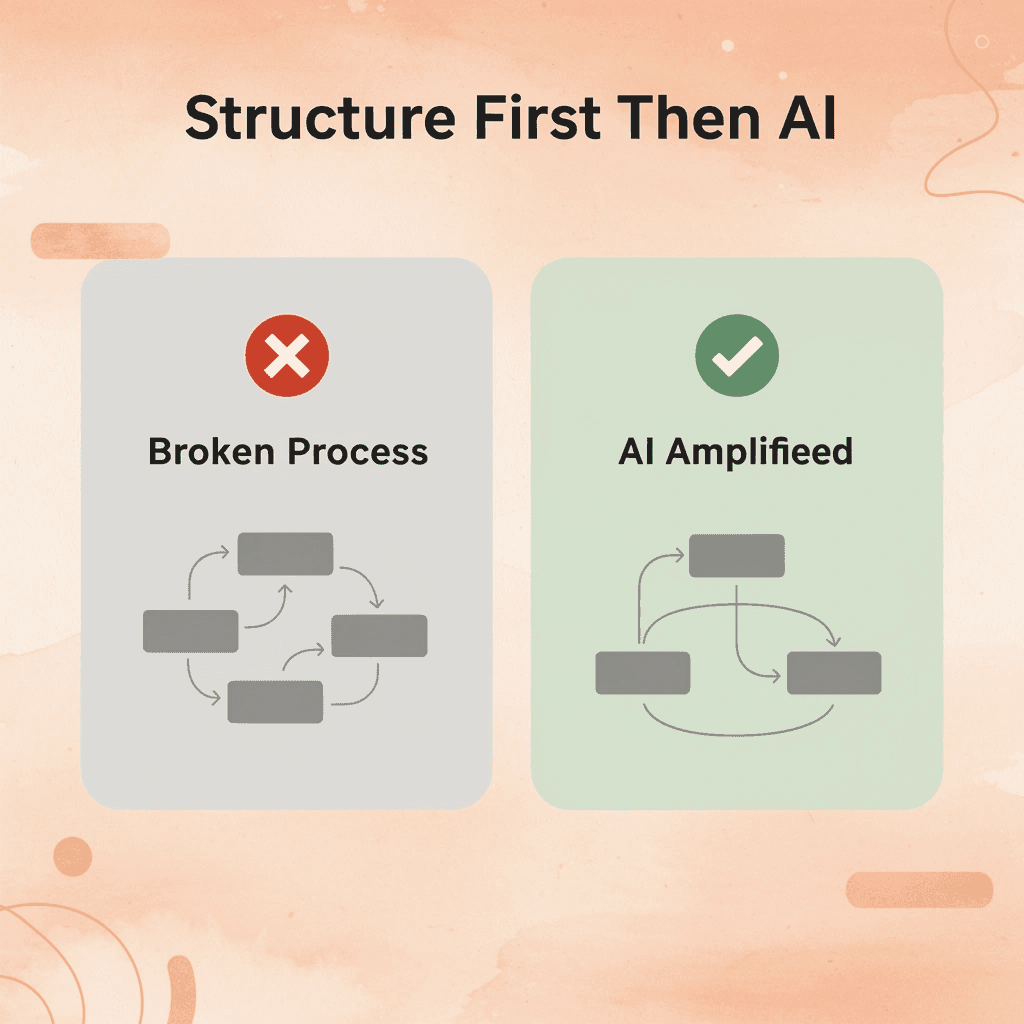

Why do companies automate broken workflows?

Most companies deploy AI in customer service without evaluating whether their current workflows function effectively. When existing processes force customers through five menu options before reaching help, adding AI merely automates the confusion. The system perpetuates every problem from the old model while introducing new failure modes.

What happens when AI executes inefficient processes?

Chatbase research found that 70% of AI customer service implementations fail to meet expectations because teams automate the status quo rather than redesign for how AI works. A retail company might train its voice AI on the same rigid script human agents were forced to read, including unnecessary verification steps and redundant questions. Customers experience friction when a system cannot recognize when the script doesn't fit the situation. Handle times remain long, satisfaction scores drop, and cost savings fail to materialize because the AI executes an inefficient process at scale.

What happens when AI learns from poor-quality data?

AI performs only as well as the information it learns from. When companies rush to deploy AI, they feed the system whatever data is available: old chat logs full of agent mistakes, outdated knowledge base articles, or FAQ documents answering obsolete questions. The AI learns to replicate outdated information and past errors with perfect consistency.

How quickly do training data problems become apparent?

The consequences appear immediately. A customer asking about a policy change from last month receives outdated information. Another, describing a problem in terms different from those in the knowledge base, is transferred to a human. According to Qualtrics, AI-powered customer service fails at a rate four times that of other tasks, primarily because the gap between customer needs and system training becomes evident within seconds of the first conversation.

What happens when automation removes human oversight?

Too much automation creates a different problem: the system handles too much without noticing when something is wrong. Companies disable human override paths to save money, then discover their AI happily gives wrong shipping dates, processes returns that violate policy, or promises refunds the company cannot afford. There's no backup plan when the AI misunderstands what's happening, and customers cannot reach someone with the power to override the system.

How do smart escalation systems prevent automation failures?

Teams using conversational AI built for enterprise contexts maintain intelligent escalation logic that recognizes complexity signals. When a customer's tone shifts to frustration, when a request falls outside normal parameters, or when the confidence score drops below the threshold, the system routes to a human agent with full context already transferred. The AI handles volume, humans handle nuance, and customers never hit a wall where the system can't help but won't let them leave.
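The three signals described here (frustrated tone, out-of-scope request, low confidence) can be sketched as a single check that runs on every conversational turn. This is an illustrative sketch only: the threshold value, the intent names, the `Turn` fields, and both function names are assumptions made for the example, not the API of any particular platform.

```python
from dataclasses import dataclass
from typing import List, Optional

# Hypothetical values for illustration; tune them against your own transcripts.
CONFIDENCE_THRESHOLD = 0.75
SUPPORTED_INTENTS = {"order_status", "password_reset", "store_hours", "return_policy"}


@dataclass
class Turn:
    intent: str        # intent label produced by your language-understanding layer
    confidence: float  # model confidence in that label, 0.0 to 1.0
    frustrated: bool   # result of whatever sentiment check you run


def escalation_reason(turn: Turn) -> Optional[str]:
    """Return why this turn should go to a human, or None if the AI can continue."""
    if turn.frustrated:
        return "customer_frustration"
    if turn.intent not in SUPPORTED_INTENTS:
        return "out_of_scope_request"
    if turn.confidence < CONFIDENCE_THRESHOLD:
        return "low_confidence"
    return None


def build_handoff(turn: Turn, transcript: List[str]) -> Optional[dict]:
    """Package the context a human agent needs so the customer never repeats it."""
    reason = escalation_reason(turn)
    if reason is None:
        return None
    return {"reason": reason, "intent": turn.intent, "transcript": list(transcript)}


handoff = build_handoff(
    Turn(intent="fraud_dispute", confidence=0.95, frustrated=False),
    ["Customer: There's a charge on my card I didn't make."],
)
print(handoff)
```

Note that the fraud dispute escalates even though the model is confident: high confidence on a request the system was never designed to handle is exactly the case where an override path matters.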

What happens when AI customer service fails?

Failed implementations waste more than the budget. Research from AbroadWorks shows 73% of customers will switch to a competitor after multiple bad experiences, and AI that gives wrong answers or traps people in loops constitutes a bad experience each time. Support ticket volume doesn't decrease: the AI creates follow-up contacts when it fails to solve issues on the first attempt. Agents spend time fixing AI mistakes instead of handling complex problems that require human judgment.

How does poor AI implementation damage your brand?

Brand damage builds up slowly. Customers stop reaching out after they learn your automated system won't help them, so they either accept the problem or switch to competitors. Ticket volume drops, and teams declare victory, not realizing they've taught customers that contacting support is pointless. The AI was supposed to improve outcomes, but instead, it reduces revenue while teams celebrate lower call center costs. The technology itself doesn't determine whether an implementation works or fails; how you deploy it does.

How to Use AI in Customer Service the Right Way (Step-by-Step Framework)

Voice AI in Contact Centers

Voice AI handles initial inquiries without phone trees or wait times. The technology understands natural speech, processes requests immediately, and resolves common issues such as order status checks, appointment scheduling, and account updates. When a customer calls about a delayed shipment, the system accesses order data, identifies the carrier delay, provides the updated delivery window, and offers to send tracking details via text, completing the interaction in ninety seconds instead of the eight-minute average. Salesforce reports that 83% of service professionals say AI and automation tools help them serve their clients.

How do you implement voice AI in your contact center?

Start by identifying your highest-volume, lowest-complexity call types. Pull three months of call center data and categorize by reason code. Password resets, balance inquiries, store hours, and return policy questions typically account for 40-60% of inbound volume. Route these to voice AI first while keeping human agents available for escalation. Monitor call completion rates and customer satisfaction scores weekly for the first month, adjusting conversation flows based on where customers get stuck or request a transfer.
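The categorization step can be sketched in a few lines once your call log is exported with one reason code per call. The sample data, the reason-code names, and the `LOW_COMPLEXITY` set below are made up for illustration; your own export and your own judgment about which codes are low-complexity replace them.

```python
from collections import Counter

# Hypothetical export: one reason code per inbound call over the review period.
calls = (
    ["password_reset"] * 3
    + ["balance_inquiry"] * 2
    + ["store_hours"]
    + ["fraud_dispute"] * 2
    + ["billing_error"] * 2
)

# Reason codes you judge to be high-volume and low-complexity (an assumption).
LOW_COMPLEXITY = {"password_reset", "balance_inquiry", "store_hours", "return_policy"}

counts = Counter(calls)
total = sum(counts.values())

# Volume ranking, highest first, with each code's share of inbound calls.
for code, n in counts.most_common():
    tag = "automate first" if code in LOW_COMPLEXITY else "keep with agents"
    print(f"{code:<16} {n:>3}  {n / total:6.1%}  {tag}")

automatable_calls = sum(n for code, n in counts.items() if code in LOW_COMPLEXITY)
automatable_share = automatable_calls / total
print(f"Share of volume that is a candidate for voice AI: {automatable_share:.0%}")
```

If the candidate share comes out far below the 40-60% range mentioned above, that is a hint your reason codes are too coarse or that routine calls are being logged under catch-all categories.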

How do AI agents maintain context across different communication channels?

AI agents manage interactions across phone, chat, email, and help portals, understanding context and adapting responses based on customer history. A customer who chatted about a billing discrepancy can email with a follow-up question, and the AI agent connects both interactions without requiring repetition. According to The State of AI in Customer Service 2025, 73% of customers expect companies to understand their unique needs and expectations, which requires systems that maintain context across touchpoints.

What's the best approach for deploying multi-channel AI agents?

Teams using conversational AI built for enterprise deployment achieve this continuity automatically through unified customer profiles that update in real time. When someone switches from chat to phone mid-issue, the voice agent already knows what happened in the chat session. Deploy by connecting your CRM and ticketing system first, then enabling one channel at a time. Start with chat, validate that handoffs to human agents include full context transfer, then expand to voice and email once stable.

What does automated case summarization capture?

Case summaries capture what happened, what was recommended, and how the issue was resolved without requiring agents to spend fifteen minutes writing notes after each interaction. The AI extracts key details such as the customer account number, problem description, troubleshooting steps attempted, and final outcome, then generates organized summaries that populate your ticketing system automatically. When a supervisor reviews escalations or another agent picks up a follow-up ticket, they see exactly what occurred without having to read through full conversation logs.

How do you set up automated case summarization?

Start by creating your summary template. Identify which information matters for reporting and quality checks: customer sentiment, product, resolution time, and escalation status. Set up the AI to consistently fill in these fields. Then, validate accuracy by comparing AI-generated summaries with agent notes over two weeks. Update the template when it's missing information, like warranty details or next steps. Once accuracy hits 95%, use AI summaries as the standard and let your agents spend their time talking to customers and solving problems instead of writing everything down.
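One way to run the two-week validation is to compare each template field in the AI summary against the agent's notes for the same ticket and track agreement per field. The sketch below assumes both are available as dictionaries keyed by the four fields named above; the field keys, the sample records, and the function names are hypothetical.

```python
from collections import Counter
from typing import Dict, List

# Template fields from the text; the exact keys are an assumption for this sketch.
FIELDS = ("sentiment", "product", "resolution_minutes", "escalated")
TARGET_ACCURACY = 0.95  # the go-live bar described above


def field_accuracy(ai_summaries: List[Dict], agent_notes: List[Dict]) -> float:
    """Fraction of template fields where the AI summary matches the agent's notes."""
    matches = total = 0
    for ai, human in zip(ai_summaries, agent_notes):
        for field in FIELDS:
            total += 1
            matches += ai.get(field) == human.get(field)
    return matches / total if total else 0.0


def mismatches_by_field(ai_summaries: List[Dict], agent_notes: List[Dict]) -> Counter:
    """Count disagreements per field, to show which part of the template needs work."""
    misses = Counter()
    for ai, human in zip(ai_summaries, agent_notes):
        for field in FIELDS:
            if ai.get(field) != human.get(field):
                misses[field] += 1
    return misses


# Two made-up tickets: the AI misreads sentiment on the second one.
ai = [
    {"sentiment": "neutral", "product": "router", "resolution_minutes": 12, "escalated": False},
    {"sentiment": "neutral", "product": "modem", "resolution_minutes": 30, "escalated": True},
]
notes = [
    {"sentiment": "neutral", "product": "router", "resolution_minutes": 12, "escalated": False},
    {"sentiment": "negative", "product": "modem", "resolution_minutes": 30, "escalated": True},
]

accuracy = field_accuracy(ai, notes)
print(f"Field accuracy: {accuracy:.1%} (target {TARGET_ACCURACY:.0%})")
print("Ready to switch over:", accuracy >= TARGET_ACCURACY)
print("Mismatches by field:", dict(mismatches_by_field(ai, notes)))
```

Exact-match comparison is deliberately strict; for free-text fields such as the problem description you would need a human reviewer or a looser similarity check instead.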

How does intelligent ticket triaging work?

Triaging sorts incoming tickets by urgency, routes them to the appropriate team, and flags risks to response time agreements. The system reads ticket content, checks customer type and contract terms, identifies the required support based on the product mentioned, and assigns the ticket to the agent best suited to resolve it quickly. A billing question from an enterprise customer with a four-hour response time agreement goes directly to your senior billing specialist. A general product question from a free-tier user goes to your tier-one team with standard priority.

How do you configure and optimize ticket routing?

Set up the system by mapping your current routing rules into the AI: Which keywords indicate billing versus technical issues? What customer groups receive priority treatment? Which agents handle which product lines? The AI learns these patterns and catches edge cases that humans miss, such as tickets that mention both billing and technical issues requiring specialized handling.

Watch for misrouted tickets during the first month and send corrections back into the system. After the learning period, routing accuracy typically exceeds manual assignment because the AI processes every signal consistently rather than relying on whoever reads the ticket first.
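Before any learning happens, the starting rules you map in can be written down explicitly. The sketch below is a plain rule-based stand-in, not a trained model: the keyword lists, queue names, `Ticket` fields, and the four-hour cutoff for high priority are all assumptions chosen to mirror the examples in this section.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

# Hypothetical keyword lists; a production system would use a trained classifier.
BILLING_KEYWORDS = ("invoice", "charge", "refund", "billing")
TECHNICAL_KEYWORDS = ("error", "crash", "login", "bug")


@dataclass
class Ticket:
    text: str
    tier: str                  # e.g. "enterprise" or "free"
    sla_hours: Optional[int]   # contractual response time, None if no agreement


def route(ticket: Ticket) -> Tuple[str, str]:
    """Return (queue, priority) for a ticket using explicit, auditable rules."""
    text = ticket.text.lower()
    is_billing = any(k in text for k in BILLING_KEYWORDS)
    is_technical = any(k in text for k in TECHNICAL_KEYWORDS)

    if is_billing and is_technical:
        queue = "specialist_review"  # mixed issues need someone who can handle both
    elif is_billing:
        queue = "senior_billing" if ticket.tier == "enterprise" else "billing"
    elif is_technical:
        queue = "technical"
    else:
        queue = "tier_one"

    # Tight response-time agreements jump the line.
    urgent = ticket.sla_hours is not None and ticket.sla_hours <= 4
    return queue, "high" if urgent else "standard"


print(route(Ticket("I was charged twice on my invoice", "enterprise", 4)))
print(route(Ticket("How do I export a report?", "free", None)))
print(route(Ticket("My invoice is wrong and the app shows an error", "enterprise", 8)))
```

Writing the rules out this way also gives you a baseline: when you later log misrouted tickets, you can see whether the learned routing actually beats the explicit rules.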

How do QA systems evaluate agent performance?

QA systems evaluate agent performance by assessing response accuracy, tone, adherence to company policies, and problem resolution across customer conversations. Rather than supervisors manually reviewing 2% of tickets, AI scores 100% and flags outliers for human review. An agent who provides incorrect shipping policy information gets caught within days instead of months. Teams with rising average ticket handling times get flagged for coaching before customer satisfaction drops.

How do you implement quality scoring effectively?

Start by defining your quality rubric: What makes a good response in your context? Consider criteria such as accurate information, empathetic tone, clear next steps, and proper grammar. Weight each criterion based on what matters most for your brand.
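A weighted rubric reduces to a weighted average. The sketch below uses the four criteria named above; the specific weights, the 0-to-1 rating scale, and the function name are assumptions to replace with whatever reflects your brand's priorities.

```python
from typing import Dict

# Hypothetical weights (they need not sum to 100; the score is normalized).
RUBRIC_WEIGHTS = {
    "accuracy": 40,     # correct information matters most in this example
    "empathy": 25,
    "next_steps": 25,
    "grammar": 10,
}


def quality_score(ratings: Dict[str, float]) -> float:
    """Weighted average of per-criterion ratings, each on a 0.0 to 1.0 scale."""
    missing = set(RUBRIC_WEIGHTS) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    weighted = sum(RUBRIC_WEIGHTS[c] * ratings[c] for c in RUBRIC_WEIGHTS)
    return weighted / sum(RUBRIC_WEIGHTS.values())


# A response that is accurate and clear but only partly empathetic.
score = quality_score(
    {"accuracy": 1.0, "empathy": 0.5, "next_steps": 1.0, "grammar": 1.0}
)
print(f"Quality score: {score:.3f}")
```

Keeping the weights in one visible table makes calibration easier: when the scores disagree with supervisor judgment, you adjust the weights or the flagging threshold rather than the scoring code.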

Run the AI against historical tickets where you already know the quality outcome, adjusting scoring thresholds until the system's ratings match supervisor judgment. Once calibrated, use it to identify training opportunities and celebrate top performers. Quality issues that previously took months to surface via random sampling now appear on your dashboard within 48 hours. But knowing which tools exist doesn't explain whether smaller teams can implement them without enterprise budgets and technical resources.

Can Small Businesses Effectively Implement AI in Their Customer Service Operations?

Yes, but only if you already have a working customer service system in place. AI makes what works bigger; it doesn't fix what's broken. Small businesses fail with AI when they treat it as a replacement for structure rather than a tool that needs structure to work. Without documented common issues, clear workflows, or defined escalation paths, AI just automates chaos faster.

🎯 Key Point: AI amplifies existing systems—it won't create the foundational processes your customer service needs to succeed.

"AI makes what works bigger—it doesn't fix what's broken. Without structure, AI just automates chaos faster."

⚠️ Warning: Don't use AI as a band-aid for broken customer service processes. Fix your workflows first, then let AI scale what's already working.

What conditions make AI effective for small teams?

AI delivers results when three conditions exist: repeatable customer queries, categorized support requests, and clear rules for human escalation. A small e-commerce company handling 50 "Where's my order?" calls per day can route them to voice AI, as the question follows a pattern: retrieve the order number, check the shipping status, and provide a tracking link.

Response time drops from eight minutes to ninety seconds, and your two support agents focus on damaged shipment claims that require judgment and empathy. According to JPMorgan Chase Institute, 22% of small businesses are currently using AI, and those seeing efficiency gains automate predictable volume rather than complex problem-solving.

How does automating routine requests free up team capacity?

When AI handles password resets, store hours questions, and return policy inquiries, your team stops answering the same fifteen questions two hundred times per week. Handle time for routine requests drops by 60-70%, freeing capacity for interactions that build customer relationships.

What happens when support processes are inconsistent?

AI fails when support is inconsistent, undocumented, or handled differently each time. If your team answers billing questions differently depending on who picks up the phone, the AI learns conflicting information and confidently gives incorrect answers. If you haven't organized your support tickets because every customer is different, the system cannot identify patterns to automate.

One team deployed a chatbot without first documenting their product's troubleshooting steps. The bot couldn't guide customers through basic fixes because there was no standardized process, and ticket volume increased as frustrated customers bypassed the bot and demanded human help.

Why does replacing human support entirely backfire?

Failure accelerates when businesses replace human support entirely instead of improving it. AI that cannot escalate complex issues to a person traps customers in loops where they repeat information without resolution. A customer calls about a billing error caused by a system glitch and reaches a voice AI trained only on standard billing questions. The AI doesn't recognize the unusual situation, can't override the charge, and won't transfer to an authorized person. The customer switches to a competitor. You saved four dollars in support costs and lost a customer worth two thousand dollars annually.

How does AI handle task substitution effectively?

AI replaces tasks, not teams. It handles "What are your hours?" and "Can I change my delivery address?" so humans can focus on "Your driver damaged my fence" and "I need a custom solution for my situation." When you substitute AI for judgment, you lose the ability to recognize when a standard answer doesn't fit. A customer asking about a refund might signal they're about to leave, but an AI optimized for transaction speed misses the emotional context a skilled agent would catch.

What makes enterprise conversational AI solutions effective?

Solutions like conversational AI, built for enterprise deployment, use natural language to understand customer needs, then route to humans when confidence drops or complexity signals appear. Our system handles large volumes of requests while preserving the escape hatch customers need when their problem doesn't fit the pattern. Small businesses using this approach see costs drop on predictable requests while satisfaction scores stay high because customers never hit a wall where the system cannot help but will not let them leave.

What questions should you ask before implementing AI?

Before using AI, answer three questions: Do we have questions that repeat and follow the same patterns? Do we have written instructions for solving common problems? Do we know what specific situations require human decision-making? If any answer is no, build the system first.

How do you build the foundation for AI automation?

Write down your top twenty support requests and how your best agent solves each one. Create decision trees showing when to escalate a request. Define what "complex" means for your business: a dollar threshold, customer sentiment level, or need for policy exceptions. With this structure in place, AI can handle more requests. Without it, you're automating a mess. Real customer interactions will then show whether your system is ready or still needs more structure.

See How AI Handles Your Customer Calls Before You Replace Your System

Putting AI across your entire support operation without testing it first is the biggest mistake. You need to watch it handle real situations, identify where it works well and where it fails, and understand when a human needs to take over. Guessing costs you customer trust and operational credibility that takes months to rebuild.

🎯 Key Point: Never deploy AI support without comprehensive testing on your actual call scenarios first.

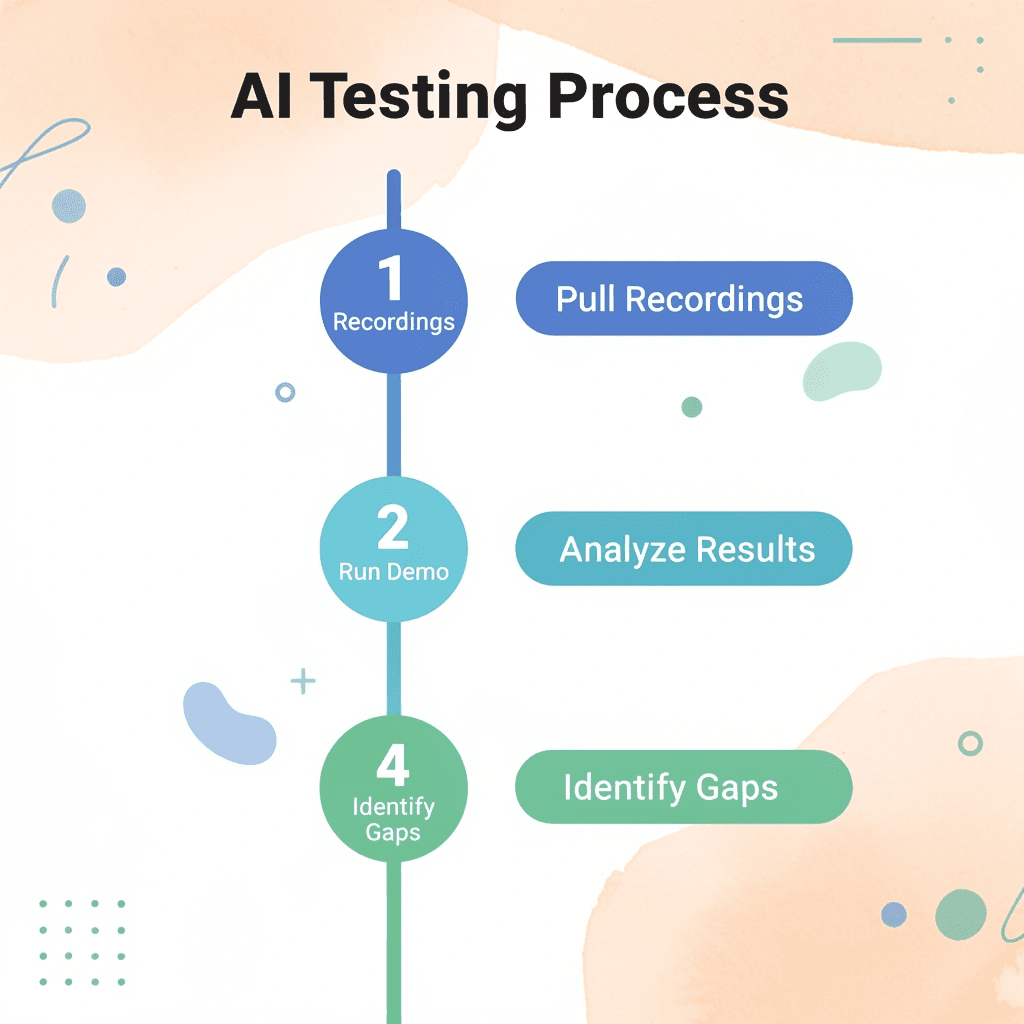

Pull recordings of your ten most common support calls and five most complex edge cases. Run them through a live demo environment to see how the AI understands questions, finds information, and structures responses. Does it recognize frustration and change its tone? Can it accurately access your knowledge base, or does it fabricate policies? Does it know when to escalate, or does it confidently give wrong answers? These answers emerge only when you test against your reality, not vendor promises.

"Testing AI against real customer scenarios reveals critical gaps that vendor demos never show - from tone recognition to accurate policy retrieval."

⚠️ Warning: AI that confidently provides incorrect information can damage customer relationships faster than no AI at all.

Where Most Demos Hide the Truth

Generic demonstrations show perfect scenarios with clean data and simple questions. Your customers interrupt mid-sentence, provide incomplete account details, and use words that don't match your documentation. A demo that proves the technology works under ideal conditions reveals nothing about whether it survives your Monday morning call queue, when half your customers are angry and the other half can't explain what they need.

Request access to test your own call flows with actual scripts, policies, and edge cases. Watch what happens when the AI encounters a customer who qualifies for an exception, needs information from two different systems, or asks about a product feature that launched last week. If the vendor won't let you test real complexity, they're keeping you from seeing failure modes that will appear in production.

Build Confidence Through Repetition

Run fifty test calls covering your full range of scenarios. Track completion rate, accuracy of information provided, appropriateness of escalations, and whether customers accomplished their goal without frustration. This identifies patterns in where the system needs refinement before reaching actual customers. One financial services team discovered that their AI performed flawlessly on account balance inquiries but failed entirely on fraud disputes because the training data lacked examples of emotionally charged conversations that require empathy and urgency.
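Tracking those four measures across a test batch does not need special tooling. Here is a minimal sketch, assuming a reviewer records each test call as a small record; the `CallResult` fields, the scenario names, and the sample outcomes are invented for illustration.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class CallResult:
    scenario: str
    completed: bool        # the caller's goal was accomplished
    accurate: bool         # information given matched current policy
    escalated: bool        # the AI handed off to a human
    should_escalate: bool  # a reviewer judged a handoff was needed


def summarize(calls: List[CallResult]) -> Dict[str, float]:
    """Completion, accuracy, and escalation-appropriateness rates for a test batch."""
    if not calls:
        raise ValueError("no test calls to summarize")
    n = len(calls)
    return {
        "completion_rate": sum(c.completed for c in calls) / n,
        "accuracy_rate": sum(c.accurate for c in calls) / n,
        "escalation_match_rate": sum(c.escalated == c.should_escalate for c in calls) / n,
    }


def failing_scenarios(calls: List[CallResult]) -> Counter:
    """Count calls per scenario that went wrong on any of the three checks."""
    failures = Counter()
    for c in calls:
        if not (c.completed and c.accurate and c.escalated == c.should_escalate):
            failures[c.scenario] += 1
    return failures


# A made-up batch: routine inquiries pass, emotionally charged disputes do not.
batch = [
    CallResult("balance_inquiry", True, True, False, False),
    CallResult("balance_inquiry", True, True, False, False),
    CallResult("fraud_dispute", False, True, False, True),
    CallResult("fraud_dispute", False, False, False, True),
]

metrics = summarize(batch)
print(metrics)
print(failing_scenarios(batch).most_common())
```

Grouping failures by scenario is what surfaces a pattern like the fraud-dispute gap: an acceptable overall rate can hide one request type that fails every time.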

Testing determines whether your workflows translate into automation or require restructuring first. When the AI struggles consistently with a particular request type, the problem might not be the technology: your current process may be too inconsistent or poorly documented for any system to replicate reliably.

Platforms like conversational AI let you test live call handling in minutes rather than months, showing how voice AI responds to your customers in real time. You can adjust conversation flows based on what breaks and validate that escalation logic works before full deployment. The test phase proves you've structured your support system so AI can scale effectively without creating new problems that cost more than the efficiency gained.