Customer service teams often struggle with repetitive inquiries while valuable customers wait for assistance. Implementing AI customer support systems can dramatically reduce response times and improve satisfaction, but success depends on following proven strategies rather than vendor promises. The right approach frees human agents to focus on complex issues that truly require personal attention.

Effective AI implementation requires understanding how to balance automation with human oversight while maintaining service quality. Companies that master these fundamentals see measurable improvements in efficiency and customer experience. Bland's conversational AI platform helps businesses automate customer interactions while preserving the personalization customers expect.

Summary

- Most AI chatbot implementations collapse within the first year, with Gartner Research showing a 70% failure rate. The pattern is consistent: companies deploy AI pointed at help centers, measure deflection rates, and declare success, only to watch CSAT scores drop within three months as customers start bypassing the bot entirely. The core problem is not the technology itself but the failure to redesign broken workflows before automating them. AI layered onto unclear escalation rules, incomplete documentation, and missing system integrations simply delivers the same broken experience faster.

- Forrester found that 85% of AI support failures stem from poor knowledge management and a lack of human handoff protocols. Without explicit escalation rules, bots default to trying harder rather than stepping aside. They trap customers in loops where the bot rephrases the same unhelpful answer or asks clarifying questions that feel like interrogation. The bot is not failing because it lacks intelligence. It fails because nobody defined when it should stop trying and transfer the customer to a human with full conversation context.

- Bad customer experiences could cost companies $3.8 trillion in 2025, according to CX Network, yet most ROI models still measure success purely by deflection rates and headcount reduction. The math reveals the trap. A 5% improvement in retention can boost profits by 25% to 95%, but a support experience that increases churn by even a few percentage points can wipe out any cost savings the AI generated. Deflection is not resolution. When 75% of consumers report feeling frustrated by AI customer service, that unresolved demand either churns silently or creates negative word-of-mouth that damages long-term revenue.

- Data privacy is now the number one consumer concern with AI-powered services, with 53% of consumers citing it as their top worry (up 8 points year over year, according to PwC's 2025 CX Survey). When customers distrust AI with their information, they either avoid support channels entirely or provide minimal context, which makes the AI less effective. This creates a self-reinforcing downward spiral where reduced engagement leads to worse AI performance, which further erodes trust and turns a fixable technical issue into permanent brand damage.

- The strongest AI implementations treat training as ongoing operational infrastructure rather than a one-time deployment project. Teams that train on real support tickets, internal SOPs, and structured Q&A pairs built from actual conversation data consistently outperform those relying solely on help center articles. The gap between what support teams think customers ask about and what customers actually ask about is where most failures occur, and platforms that ingest multiple data sources while applying optimization layers deliver responses that actually resolve conversations rather than just retrieve information.

- Conversational AI addresses these failure points in several ways. It handles live voice and chat interactions, maintains context across channels, and detects customer sentiment in real time. When complexity exceeds its trained scope, it escalates naturally and passes the full conversation history to human agents.

Why Most AI Customer Support Implementations Fail Before They Even Scale

Many people assume that AI customer support works like any other software upgrade: set it up, deploy it, and watch it improve. That's why most companies that try it fail within a few months.

🎯 Key Point: AI customer support isn't a plug-and-play solution—it requires ongoing optimization, continuous training, and strategic implementation to deliver the customer experience your business needs.

"Most companies that try AI customer support fail within a few months because they treat it like a traditional software upgrade rather than a strategic transformation."

⚠️ Warning: The biggest mistake companies make is assuming AI systems will automatically improve without human oversight, data refinement, and process integration—leading to poor customer experiences and failed implementations.

Why does AI inherit existing workflow problems?

When AI is added to existing support workflows without redesigning those workflows, it inherits every broken handoff, documentation gap, and unclear escalation rule that already existed. The bot becomes a faster way to deliver the same broken experience.

What do the failure statistics reveal about implementation approaches?

According to Gartner Research, 70% of AI chatbot implementations fail within the first year. Companies point AI at their help center, connect it to a chat widget, and measure deflection rates. Three months later, CSAT scores drop. Six months in, customers bypass the bot entirely, typing "agent" as their first message. The AI did not reduce workload; it added a layer of frustration that customers must navigate before reaching someone who can help.

What escalation logic failures cause AI support to break down?

The failure starts with escalation logic, or the absence of it. Most implementations treat escalation as an afterthought: "If the bot can't answer, send them to a human." But what does "can't answer" mean in practice? Is it after two failed attempts? Three? After the customer types "I want a human" in all caps?

Without explicit escalation rules, the bot defaults to trying harder: rephrasing the same unhelpful answer, looping customers through the same decision tree, and asking clarifying questions that feel like interrogation. Forrester found that 85% of AI support failures stem from poor knowledge management and a lack of human handoff protocols. The bot fails not because it lacks intelligence, but because nobody told it when to stop trying.
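One way to make "can't answer" concrete is to encode the escalation rules as an explicit check that runs on every customer turn. The sketch below is illustrative only. The threshold of two failed attempts, the phrase list, and the names `ConversationState` and `should_escalate` are hypothetical choices, not any platform's API.

```python
from dataclasses import dataclass

# Hypothetical rules; tune them against your own conversation audits.
MAX_FAILED_ATTEMPTS = 2
HUMAN_REQUEST_PHRASES = ("human", "agent", "representative", "real person")


@dataclass
class ConversationState:
    failed_attempts: int = 0  # bot answers the customer rejected or ignored


def is_shouting(message: str) -> bool:
    """Treat a message as all caps if it has at least five letters and none are lowercase."""
    letters = [c for c in message if c.isalpha()]
    return len(letters) >= 5 and all(c.isupper() for c in letters)


def should_escalate(state: ConversationState, message: str) -> bool:
    """Return True when the bot should stop trying and hand off to a human."""
    text = message.lower()
    if any(phrase in text for phrase in HUMAN_REQUEST_PHRASES):
        return True  # an explicit request for a person always wins
    if is_shouting(message):
        return True  # all-caps messages are treated as a frustration signal
    return state.failed_attempts >= MAX_FAILED_ATTEMPTS
```

The plain substring match is deliberately naive. A production rule set would also need to handle negations, misspellings, and other languages. The point is that the stopping condition is written down and testable.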

How does incomplete training data sabotage AI performance?

Training data is the second structural failure. Companies assume their help center is complete because it exists. Yet help centers are written for the questions teams expect customers to ask, not the questions customers actually ask. They cover the happy paths and ignore edge cases. When you train AI on incomplete documentation, you get an agent that confidently gives incomplete answers. Customers cannot distinguish between "the bot does not know" and "the answer does not exist."

Why do system integration gaps create customer frustration?

The third failure is system integration. Customers ask about their specific order, account status, billing issue, or integration setup. If your AI cannot pull real-time data from Shopify, Stripe, Zendesk, or Salesforce, it cannot answer the questions customers care about. It can only tell them to "check your account" or "contact support": exactly what they were trying to avoid. The bot becomes a fancier version of "please hold."

What happens when responsibility boundaries remain unclear?

The fourth failure is responsibility boundaries. If the AI handles first-level questions and humans handle everything else, who is responsible for the gray area in between? Unclear boundaries cause the bot either to escalate too many issues to humans, defeating automation, or to trap customers in loops. Support teams end up spending more time managing the AI than managing tickets directly. What makes these failures dangerous is that they remain invisible until the damage compounds.

The Hidden Costs of Poor AI Customer Support Implementation on CX and Revenue

AI customer service failures cause real damage to customer experience, operations, revenue, and brand trust. These problems worsen over time until fixing them costs more than the initial savings from AI implementation.

🎯 Key Point: Poor AI implementation creates a compounding cost structure where short-term savings turn into long-term losses through damaged customer relationships and operational inefficiencies.

"AI customer service failures don't just impact individual interactions—they create cascading effects that compound over time, making recovery exponentially more expensive than proper initial implementation."

⚠️ Warning: The hidden costs of failed AI customer support often include customer churn, increased support tickets, brand reputation damage, and employee burnout from handling escalated complaints that could have been prevented with proper AI implementation.

The AI customer support cost vs quality trap

Most companies justify AI customer service by pointing to cost reduction: fewer agents, lower overhead, faster resolution. But when the AI customer support cost-versus-quality equation tips the wrong way, the savings disappear. Bad CX could cost companies $3.8 trillion in 2025, according to CX Network, yet most ROI models measure success purely on deflection rates and headcount reduction. A 5% improvement in customer retention can boost profits by 25% to 95%, and the same leverage works in reverse. A support experience that increases churn by even a few percentage points can wipe out any cost savings the AI generated. The cost of bad AI customer support is not what you spent on the tool. It is the customer lifetime value you destroyed by deploying it poorly.
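A back-of-the-envelope calculation shows how quickly churn can cancel out savings. The function names and every input below are hypothetical. The sketch measures churn in percentage points of the customer base. It also counts the full lifetime value of each extra churned customer against one year of savings, which is a deliberate simplification.

```python
def net_impact(annual_savings: float, customers: int,
               churn_increase_points: float, lifetime_value: float) -> float:
    """Savings from the AI rollout minus lifetime value lost to the extra churn it caused.

    churn_increase_points is in percentage points: 3.0 means churn rose by 3 points.
    """
    extra_churned = customers * churn_increase_points / 100
    return annual_savings - extra_churned * lifetime_value


def break_even_churn_points(annual_savings: float, customers: int,
                            lifetime_value: float) -> float:
    """Churn increase (in points) at which the lost lifetime value equals the savings."""
    return annual_savings / (customers * lifetime_value) * 100


# Hypothetical example: $250,000 saved, 10,000 customers, $1,200 lifetime value each.
print(net_impact(250_000, 10_000, 3.0, 1_200))                    # -110000.0
print(round(break_even_churn_points(250_000, 10_000, 1_200), 2))  # 2.08
```

With these made-up numbers, churn rising by just over two points is enough to erase the entire year's savings.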

The hidden cost of deflection

Deflection (routing customers to self-service instead of human agents) is the metric most AI customer service implementations optimize for. But deflection is not the same as resolution. Suppose 50% of customers report feeling frustrated during chatbot interactions, and 40% of those conversations end poorly. Then a significant portion of your "deflected" volume represents unresolved demand that churns silently or generates negative word-of-mouth. Seventy-five percent of consumers report frustration with AI customer service. That frustration follows them to review sites, social media, and competitor comparison pages. The chatbot experience in that first interaction often determines whether a customer returns.

The cost of AI customer service trust erosion

Trust is the hardest metric to rebuild. PwC's 2025 CX Survey shows that data privacy is now the top consumer concern when using AI-powered services: 53% of consumers cite it, up 8 points from last year. When customers don't trust your AI with their information, they avoid your support channels or provide minimal context, making the AI less effective. This creates a downward spiral: customers distrust the AI, so they use it less, so it has less information to work with, so it performs worse, so customers trust it even less. This self-reinforcing cycle transforms a fixable chatbot problem into permanent brand damage.

The Implementation Failure Patterns

Why AI chatbot implementations fail is a different question from why chatbots fail. Chatbot failure is a technology problem; implementation failure is an organizational one. Most companies mishandle the organizational side, and those rollout decisions account for the majority of AI customer support failures in production. The most common rollout mistake is deploying AI across all channels and customer segments at once, without a pilot program, phased rollout, or controlled comparison.

The result is predictable. The AI handles simple queries well (password resets, business hours, basic FAQs). It fails on the complex interactions that carry the most customer emotion and revenue impact. Without a control group, teams cannot measure the extent of the damage. By the time customer satisfaction scores drop or churn increases, isolating the AI's impact from other variables becomes nearly impossible.
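A controlled comparison does not need heavy tooling. One common approach is to hash each customer ID into a stable bucket. The same customer then always lands in either the AI pilot or a human-only control group, and CSAT and churn can be compared between the two. The function name, salt, and percentages below are hypothetical.

```python
import hashlib


def in_ai_pilot(customer_id: str, rollout_percent: int, salt: str = "ai-pilot-v1") -> bool:
    """Deterministically assign a customer to the AI pilot or the human-only control group.

    Hashing keeps each customer in the same group across sessions, so outcomes
    such as CSAT and churn can be compared between the two groups.
    """
    digest = hashlib.sha256(f"{salt}:{customer_id}".encode()).hexdigest()
    bucket = int(digest[:8], 16) % 100  # stable bucket in 0..99
    return bucket < rollout_percent
```

Raising `rollout_percent` from, say, 10 to 25 keeps every existing pilot customer in the pilot, because a customer's bucket never changes. That property is what makes a phased rollout measurable.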

What happens when AI deployment is treated as a one-time project?

An AI implementation that stops at deployment is effectively abandoned. The AI needs continuous training on new products, updated policies, and emerging question patterns. It also needs regular audits of failed conversations and feedback loops from human agents handling escalations. Companies that treat AI deployment as a project with a completion date, rather than an ongoing operational capability, consistently underperform. This set-and-forget pattern explains why conversational AI fails even when initial deployment shows promising results.

How do wrong success metrics sabotage AI performance?

When the success metric is "percentage of conversations handled without a human," every decision optimizes for deflection. The escalation path becomes harder to find. The bot becomes more aggressive in its attempts to resolve. Customer experience deteriorates in ways the primary metric cannot detect. The right framework measures AI against outcomes that matter: resolution quality, customer effort, follow-up contact rates, and downstream retention. Fixing this measurement problem is the highest-leverage move for any team with underperforming AI customer service. Until you measure correctly, you cannot see the failures you need to fix.

The Customer Psychology Nobody Addresses

Bot fatigue is real and worsening. Some customers have been trapped in a chatbot loop. Some typed "talk to a human" multiple times without result, or restarted a conversation after the bot lost context. Every one of them carries that frustration into future support interactions. The backlash against customer service automation has arrived. Psychology research calls this algorithm aversion: once a customer has a bad experience with an AI system, they distrust AI systems broadly, not just yours. They approach your bot expecting failure. That makes them less patient, less willing to share details, and quicker to seek human help or abandon the interaction.

How can emotional intelligence solve AI customer service frustration?

Customer frustration with AI chatbot interactions is not a technical problem but a trust problem. It requires better design: visible escalation paths, honest acknowledgment of limitations, and human handoff without forcing customers to repeat themselves. The missing piece is AI customer service emotional intelligence: the ability to read tone, detect frustration, and adjust behavior in real time. Our conversational AI enables voice-based interactions that detect customer sentiment and escalate appropriately, reducing frustration loops that text-based bots create. Teams using voice AI report shorter resolution times and higher satisfaction because the system interprets vocal cues that text cannot capture.

What makes human-AI hybrid models more effective?

Customers who hate AI support do not hate AI. They hate feeling trapped. The solution is a human-AI hybrid model where AI handles high-volume, repetitive information retrieval tasks and routes everything else to a human with full context. Companies that balance this correctly see better outcomes than with pure AI or pure human support alone. Deploying AI with proper escalation design and channel flexibility, so customers never feel trapped, is what defuses the automation backlash. These issues are implementation failures, not AI failures, which makes them preventable with the right design approach.

Top 10 AI Customer Support Implementation Best Practices That Actually Prevent Failure

Setting up AI customer support that works well means creating systems that stop problems before customers encounter them. The best practices below address the specific points where most setups fail within their first year.

🎯 Key Point: Proactive system design is essential - 78% of AI customer support failures happen because teams focus on deployment instead of prevention strategies.

"Most AI customer support implementations fail within the first 12 months due to inadequate planning and system integration issues." — Customer Service Technology Report, 2024

⚠️ Warning: Companies that skip comprehensive testing phases experience 3x higher failure rates and significantly longer recovery times when issues arise.

1. Audit Your Current Failure Modes

Pull your last 30 days of AI conversations and organize them by: successful resolution rate (confirmed by customer, not system), where customers abandon conversations, top queries the AI fails on, repeat contacts within 48 hours on the same issue, and CSAT gap between AI-handled and human-handled conversations. Most teams discover that 60 to 70% of AI chatbot failures cluster around 5 to 10 specific question types, so targeted fixes resolve the majority of problems. Without this audit, you're guessing at why AI customer support fails for your customers.
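Two of these audit checks are easy to script once conversations are exported. The sketch below assumes a hypothetical `Conversation` record with a topic label and a customer-confirmed resolution flag. It measures how concentrated failures are in the top question types. It also counts repeat contacts on the same issue within 48 hours.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Conversation:
    customer_id: str
    topic: str                 # question type, e.g. from intent tagging
    started_at: datetime
    resolved_confirmed: bool   # resolution confirmed by the customer, not the system


def failure_concentration(convs: list[Conversation], top_n: int = 10) -> float:
    """Share of unresolved conversations that fall into the top_n failing topics."""
    failures = Counter(c.topic for c in convs if not c.resolved_confirmed)
    total = sum(failures.values())
    if total == 0:
        return 0.0
    top = sum(count for _, count in failures.most_common(top_n))
    return top / total


def repeat_contacts(convs: list[Conversation],
                    window: timedelta = timedelta(hours=48)) -> int:
    """Count contacts where a customer returns on the same topic within the window."""
    last_seen: dict[tuple[str, str], datetime] = {}
    repeats = 0
    for conv in sorted(convs, key=lambda c: c.started_at):
        key = (conv.customer_id, conv.topic)
        if key in last_seen and conv.started_at - last_seen[key] <= window:
            repeats += 1
        last_seen[key] = conv.started_at
    return repeats
```

If `failure_concentration(convs, top_n=10)` comes back high, the fix list is short. Work through the top topics first.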

2. Fix the Escalation Design

Escalation failure is where most AI chatbot implementations break down, and fixing it is the fastest way to reduce AI support frustration. The fix requires deliberate design. Make the escalation path visible from the first message, and don't hide "talk to a human" behind menu layers. When the bot escalates, transfer the full conversation history so customers don't have to repeat themselves. Set clear triggers for immediate escalation: certain phrases, sentiment drops, or repeated questions.
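The repeated-question and sentiment-drop triggers can be expressed as small checks on the running conversation. In this sketch the thresholds are hypothetical. The sentiment scores are assumed to come from a model you already run, on a -1 to 1 scale. String similarity from Python's standard `difflib` stands in for a proper semantic comparison.

```python
from difflib import SequenceMatcher
from typing import Optional

# Hypothetical trigger thresholds; calibrate them on your own transcripts.
SENTIMENT_DROP = 0.4      # fall in sentiment score between consecutive customer turns
REPEAT_SIMILARITY = 0.85  # how similar two customer messages must be to count as a repeat


def repeated_question(messages: list[str]) -> bool:
    """True if the latest customer message closely matches an earlier one."""
    if len(messages) < 2:
        return False
    latest = messages[-1].lower().strip()
    return any(
        SequenceMatcher(None, latest, earlier.lower().strip()).ratio() >= REPEAT_SIMILARITY
        for earlier in messages[:-1]
    )


def sentiment_dropped(scores: list[float]) -> bool:
    """True if sentiment fell sharply since the previous customer turn."""
    return len(scores) >= 2 and scores[-2] - scores[-1] >= SENTIMENT_DROP


def escalation_trigger(messages: list[str], scores: list[float]) -> Optional[str]:
    """Name the first trigger that fired, or return None to keep the bot engaged."""
    if repeated_question(messages):
        return "repeated_question"
    if sentiment_dropped(scores):
        return "sentiment_drop"
    return None
```

Returning the trigger name rather than a bare boolean lets the handoff record why the bot stepped aside, which feeds the failure audit.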

3. Build for Your Vertical, Not the Generic Case

The AI customer support needs of a Shopify store differ from those of a SaaS product or a healthcare organization. Your AI must connect to live systems (order data, product catalogs, and user accounts), not just your knowledge base. Platforms that work well offer native integrations with your existing tools, such as Shopify, Zendesk, Salesforce, Stripe, and Calendly. Platforms without them provide only a chatbot widget and leave the integration work to you. This is where generic implementations fail.

4. Build a Knowledge Layer Designed for RAG

Your AI is only as good as the information it can find and use. In retrieval-augmented generation (RAG), the model answers from passages retrieved from your knowledge base at query time. RAG rewards teams that treat content as operational infrastructure supporting real-time resolution.

How should you structure content for optimal RAG performance?

Good RAG performance starts with content preparation: convert support knowledge, policies, and procedures into machine-readable information. Use modular content (one topic per article) and short, clear answers. Use an intent-first structure, with titles organized around what customers want to do. Consistency improves retrieval accuracy: uniform tone and formatting reduce confusion.
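As a minimal sketch of "one topic per article," a long help article can be split at its headings into self-contained chunks. Oversized answers can then be flagged for rewriting. This assumes topics are marked with `## ` headings. `KnowledgeChunk`, `split_by_topic`, and the 150-word budget are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class KnowledgeChunk:
    title: str    # intent-first, e.g. "Change my shipping address"
    body: str
    source: str   # where the chunk came from, kept for citations and audits


def split_by_topic(article: str, source: str,
                   heading_prefix: str = "## ") -> list[KnowledgeChunk]:
    """Split a long article into one-topic chunks, one per heading.

    Assumes each topic starts with a line beginning with heading_prefix;
    text before the first heading is ignored and empty sections are dropped.
    """
    sections: list[tuple[str, list[str]]] = []
    for line in article.splitlines():
        if line.startswith(heading_prefix):
            sections.append((line[len(heading_prefix):].strip(), []))
        elif sections:
            sections[-1][1].append(line)
    chunks = []
    for title, body_lines in sections:
        body = "\n".join(body_lines).strip()
        if body:
            chunks.append(KnowledgeChunk(title, body, source))
    return chunks


def oversized(chunks: list[KnowledgeChunk], max_words: int = 150) -> list[str]:
    """Titles of chunks whose answers exceed a word budget and may need splitting."""
    return [c.title for c in chunks if len(c.body.split()) > max_words]
```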

Why do you need more than basic vector search?

A clean, well-organized knowledge base directly improves resolution rate and reduces hallucinations. For high-stakes support, though, vector search alone is insufficient. The passages closest to a query in embedding space are not always the ones that answer it. Add an LLM-based reranker on top of vector search. A reranker evaluates the retrieved content and selects the most contextually relevant inputs before generation, improving accuracy and reducing off-topic responses.
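The two-stage pattern can be sketched without any particular vector database or model. Stage one ranks passages by cosine similarity to assume-precomputed embeddings and keeps `k` candidates. Stage two re-scores only those candidates and keeps `final_n`. Here `rerank_score` is a hypothetical placeholder for whatever LLM-based or cross-encoder relevance judgment you use. It is not a specific library's API.

```python
import math
from typing import Callable, Sequence


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity of two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def retrieve_then_rerank(
    query: str,
    query_vec: Sequence[float],
    passages: dict[str, Sequence[float]],       # passage text -> precomputed embedding
    rerank_score: Callable[[str, str], float],  # hypothetical relevance judge
    k: int = 20,
    final_n: int = 3,
) -> list[str]:
    """Stage 1: cheap vector search for k candidates. Stage 2: rerank them, keep final_n."""
    candidates = sorted(passages, key=lambda p: cosine(query_vec, passages[p]),
                        reverse=True)[:k]
    return sorted(candidates, key=lambda p: rerank_score(query, p), reverse=True)[:final_n]
```

The reranker only ever sees `k` candidates, so an expensive model call per passage stays affordable.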

5. Train on Real Conversations, Not Just Documentation

Your help center articles show what your team thinks customers ask about. Your actual support tickets show what customers really ask about. The gap between those two is where AI customer service failures happen. The strongest AI support implementations train on URLs, PDFs, help articles, past support tickets, internal SOPs, product documentation, and structured Q&A pairs from real conversations. Platforms like Chatbase ingest these sources and apply optimization so the AI delivers answers that resolve conversations rather than merely retrieving information. They treat training as ongoing, adding new data as products evolve and new question patterns emerge.
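A rough way to size that gap is to check which questions from real tickets have no reasonably close match among help-center article titles. This sketch uses word-set (Jaccard) overlap as a crude stand-in for semantic matching. The function names and the 0.3 threshold are hypothetical.

```python
import re


def tokens(text: str) -> set[str]:
    """Lowercase word set, ignoring words of two characters or fewer."""
    return {w for w in re.findall(r"[a-z0-9']+", text.lower()) if len(w) > 2}


def uncovered_questions(ticket_questions: list[str], article_titles: list[str],
                        min_overlap: float = 0.3) -> list[str]:
    """Ticket questions whose best Jaccard overlap with any article title is below min_overlap."""
    title_tokens = [tokens(t) for t in article_titles]
    gaps = []
    for question in ticket_questions:
        q = tokens(question)
        best = max((len(q & t) / len(q | t) for t in title_tokens if q | t), default=0.0)
        if best < min_overlap:
            gaps.append(question)
    return gaps
```

The questions this returns are candidates for new articles or structured Q&A pairs.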

6. Design for Human-in-the-Loop from Day One

The best AI customer service strategies combine AI and humans. AI excels at handling high volumes of requests, processing them quickly, and executing repetitive tasks. Humans excel at decision-making, understanding emotion, and managing unusual situations.

How can seamless escalation improve customer experience?

When AI escalates, the human agent should receive full context: conversation history, inferred intent, collected data, and attempted actions. Asking customers to restate their issue damages trust and eliminates the friction reduction AI was meant to provide.
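One way to guarantee that context survives the handoff is to make it a typed payload rather than free text. The sketch below mirrors the four items listed above: history, inferred intent, collected data, and attempted actions. The `Handoff` and `Turn` structures and all field names are hypothetical. Map them onto whatever your helpdesk's ticket or conversation API actually accepts.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class Turn:
    speaker: str  # "customer" or "bot"
    text: str


@dataclass
class Handoff:
    """Context passed to the human agent so the customer never has to repeat themselves."""
    conversation_id: str
    inferred_intent: str
    transcript: list[Turn]
    collected_data: dict[str, str] = field(default_factory=dict)  # e.g. an order number
    attempted_actions: list[str] = field(default_factory=list)    # what the bot already tried
    escalation_reason: str = ""

    def to_json(self) -> str:
        """Serialize the payload, including nested turns, for the agent's inbox."""
        return json.dumps(asdict(self))
```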

What happens when AI becomes a co-pilot for agents?

AI as a co-pilot transforms how work gets done. Inside the agent inbox, AI should summarize long message threads, draft responses based on company knowledge, and surface important customer information. This streamlines work and lets people focus on critical decisions rather than searching for data.

7. Measure What Matters (the AI Customer Support KPIs to Watch)

Stop using vanity metrics and switch to a framework that shows whether customers' problems are actually being solved. Leading indicators help you catch problems early: conversation abandonment rate, rage-click frequency, repeat contact within 48 hours, escalation request volume, and average number of messages before resolution.

Which lagging and operational indicators should you track?

Lagging indicators confirm the damage: customer satisfaction after AI interactions, likelihood of recommending AI support, customer churn following exposure to AI support, and customer effort required. Operational indicators maintain quality: AI confidence scores, hallucination rates, knowledge gaps, and human agent feedback on escalation handling.

Why do deflection rates miss the actual signal?

Teams that focus on deflection rates or containment percentages miss the real signal. Those metrics measure how many customers are sent to self-service, not whether their problems are solved. The customer who abandons your bot is counted as "deflected," yet their problem remains unresolved.
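The difference is easy to see when both numbers are computed from the same outcomes. In this sketch, the hypothetical outcome labels are "resolved" (confirmed by the customer), "abandoned", and "escalated". Deflection counts every conversation that never reached a human, while resolution counts only confirmed fixes.

```python
from collections import Counter


def deflection_and_resolution(outcomes: list[str]) -> tuple[float, float]:
    """Return (deflection rate, resolution rate) for AI-handled conversations.

    Abandoned conversations never reached a human, so they count as deflected
    even though nothing was resolved.
    """
    total = len(outcomes)
    if total == 0:
        return 0.0, 0.0
    counts = Counter(outcomes)
    deflected = counts["resolved"] + counts["abandoned"]
    return deflected / total, counts["resolved"] / total


print(deflection_and_resolution(["resolved"] * 5 + ["abandoned"] * 3 + ["escalated"] * 2))
# (0.8, 0.5)
```

An 80% deflection rate can hide the fact that only half of customers got an answer.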

8. Design the Service Recovery

What happens after an AI chatbot fails matters as much as preventing the failure in the first place. Service recovery should follow a specific plan. Acknowledge the failure clearly: "I can see the AI was unable to resolve this for you, and I apologize for the extra time that took." Then offer a solution that exceeds the customer's original expectations.

Why does proper service recovery increase customer loyalty?

According to Sprinklr, companies that focus on customer experience make 60% higher profits, and handling problems well can increase customer loyalty beyond pre-problem levels. Document what went wrong in your training loop so the AI can learn from it. Handled this way, a breakdown becomes a moment that builds trust.

9. Prioritize Security, Compliance, and Governance

As AI takes on more responsibility, governance becomes a core operating requirement. Policies, escalation rules, and confidence thresholds should be defined, tested, and validated before deployment, then continuously monitored in production. Data protection by default means sensitive fields are masked before the model processes them. Regulated teams require strict data handling controls, including retention limits and vendor assurances. AI should respond only when confidence thresholds are met. When information is missing or unclear, escalation to a human protects both the customer and the business.
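The two controls described above, masking before model processing and answering only above a confidence threshold, can be sketched in a few lines. The regex patterns and the 0.75 threshold are hypothetical and intentionally simple. Real deployments need patterns matched to their own data and a confidence signal from their own stack.

```python
import re
from typing import Optional

# Hypothetical, intentionally simple patterns; real data needs broader coverage.
REDACTIONS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "CARD": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),  # 13 to 16 digits, optional separators
}
CONFIDENCE_THRESHOLD = 0.75  # hypothetical value


def redact(text: str) -> str:
    """Mask sensitive fields before the text is sent to any model."""
    for label, pattern in REDACTIONS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


def answer_or_escalate(draft: str, confidence: float) -> Optional[str]:
    """Return the drafted answer only above the threshold; None means route to a human."""
    return draft if confidence >= CONFIDENCE_THRESHOLD else None
```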

10. Be Transparent with Customers About AI Use

AI-powered customer service offers faster responses and personalized support, but raises questions about trust, privacy, and authenticity. Customers want to know who or what they're talking to; transparency about AI's role determines whether the interaction builds or breaks trust. If your chatbot handles first-level support, say: "You are chatting with our AI assistant, who can help with most questions and connect you to a human if needed." This clarifies the interaction and positions AI as a helpful tool rather than a hidden replacement. Among a group of 32 AI experts, 84% agree that companies should disclose the use of AI in their products. Transparency is a trust signal, not merely a courtesy.

How does conversational AI improve voice support?

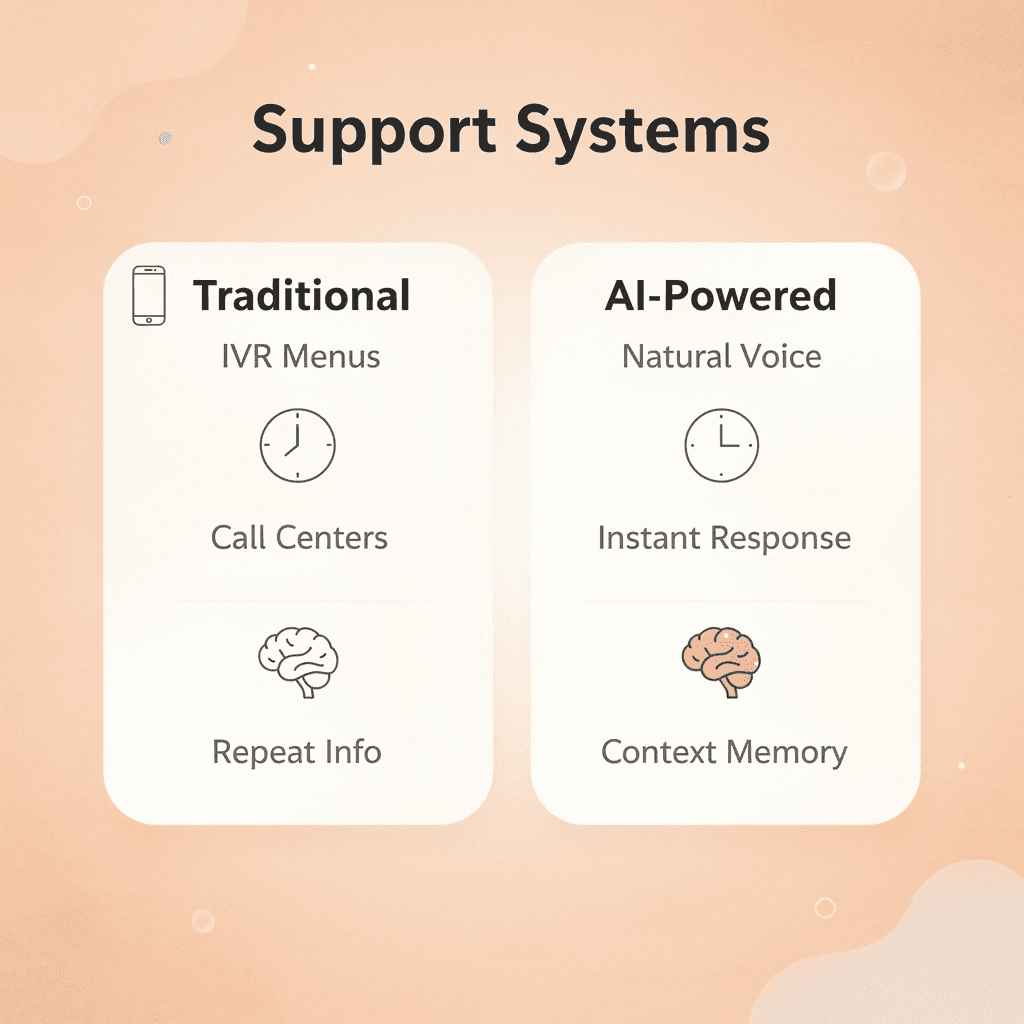

Most teams handle voice support through IVR menus or text-based chatbots, which create problems as call volume increases. Customers abandon calls stuck in menu trees, and text bots cannot handle the nuances of spoken language or real-time interruptions. Conversational AI voice agents handle inbound and outbound calls live, adapting to customer needs as the conversation unfolds. This reduces wait times and improves first-call resolution. Teams can evaluate these systems through live demos before deployment. They can observe how the AI responds to actual customer scenarios rather than relying on theoretical claims.

What prevents AI implementation failure?

These ten practices address the failure modes discussed earlier by fixing where systems break down: poor escalation logic, missing integrations, untrained knowledge layers, misleading metrics, and absent recovery protocols. The difference between AI that works and AI that pushes people away comes down to timing. Either you designed these mechanisms before launch, or you discovered they were missing after customers complained. Yet even with all ten practices in place, a deeper structural question remains.

AI Customer Support Only Works When It Is Built Into a Real Conversation System

The deeper structural question is: are you building a conversation system or automating responses? Most AI implementations fail because they treat customer interactions as isolated tickets rather than as continuous dialogues. Real conversation requires memory, cross-channel context transfer, and the ability to adapt tone and depth to what the customer needs. When AI is bolted onto rigid workflows, it becomes another layer of friction instead of a resolution tool.

🎯 Key Point: Successful AI implementations treat interactions as ongoing conversations rather than isolated incidents.

Correctly implemented AI handles live conversations across voice, chat, and email while maintaining continuity. It knows when a customer called three days ago about a billing issue and references that history without forcing them to repeat themselves. It escalates naturally when complexity exceeds its trained scope, passing full context to human agents instead of creating handoff gaps.
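At its simplest, that continuity means keying history by customer rather than by channel or ticket. The class below is a minimal in-memory sketch of the idea. `ConversationMemory` and `Interaction` are hypothetical names, and a real system would persist this data with the same privacy controls as the rest of the support stack.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime


@dataclass
class Interaction:
    channel: str   # "voice", "chat", or "email"
    topic: str
    summary: str
    at: datetime


class ConversationMemory:
    """One history per customer across channels (in memory, for illustration only)."""

    def __init__(self) -> None:
        self._history: dict[str, list[Interaction]] = defaultdict(list)

    def record(self, customer_id: str, interaction: Interaction) -> None:
        self._history[customer_id].append(interaction)

    def recent(self, customer_id: str, since: datetime) -> list[Interaction]:
        """Interactions on any channel since the given time, oldest first."""
        return sorted(
            (i for i in self._history.get(customer_id, []) if i.at >= since),
            key=lambda i: i.at,
        )
```

A chat session that opens with `recent(customer_id, since=three_days_ago)` can see the earlier billing call without asking the customer to explain it again.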

"Real conversation requires memory, context transfer across channels, and the ability to adapt tone and depth based on what the customer actually needs."

Most enterprises rely on outdated call centers or rigid IVR trees that force customers through numbered menus before reaching help. Conversational AI deploys real-time voice agents that handle customer calls instantly, respond naturally to questions, and integrate directly into enterprise support systems while maintaining full control over data and compliance.

💡 Tip: Look for AI systems that handle interruptions and context switches seamlessly—this is where most implementations fail.

The difference shows up immediately in demo environments. You can watch how the system handles edge cases, manages interruptions, transfers context during escalations, and adapts responses based on sentiment shifts. Book a demo today to see how your actual customer calls would be handled.

Traditional Support vs Conversational AI

| Aspect | Traditional support | Conversational AI |
| --- | --- | --- |
| Interaction style | Rigid menu systems | Natural language conversations |
| Context handling | No conversation memory | Full context retention |
| Escalations | Manual handoffs | Intelligent escalations |
| Channel experience | Siloed channels | Unified conversation flow |