Banking call centers handle thousands of daily inquiries about account balances, transaction disputes, loan applications, and password resets while support costs climb each quarter. Banks must manage high-volume customer interactions without sacrificing security or service quality, all while maintaining lean operations. Smart automation can transform these operations by handling routine queries faster and more accurately than traditional methods.

Intelligent systems work around the clock to resolve common banking questions, authenticate customers securely, and escalate complex issues to human agents only when necessary. This technology understands context, adheres to financial regulations, and delivers personalized responses that make customers feel heard. Banks can redirect resources toward higher-value interactions while dramatically reducing wait times and operational expenses through conversational AI.

Summary

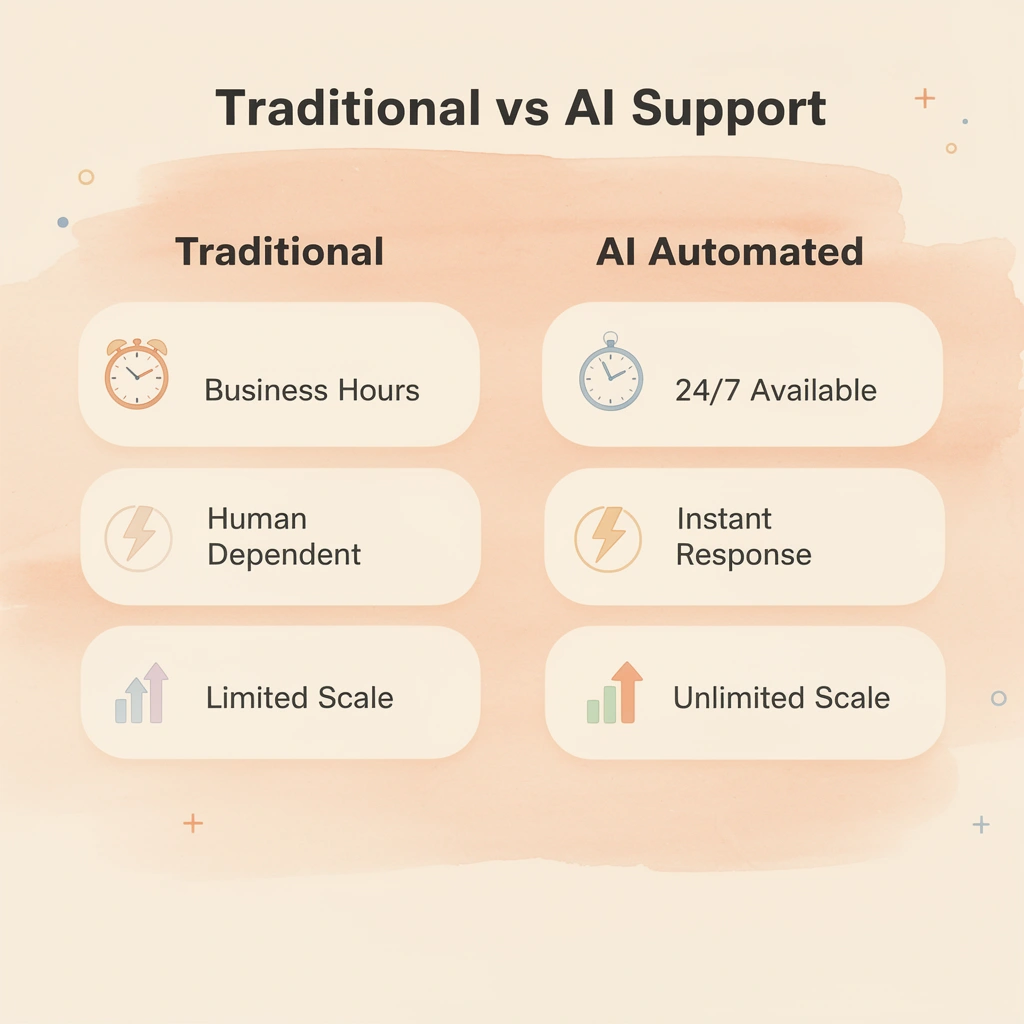

- Traditional call centers force banks to choose between speed, accuracy, and compliance because manual support systems cannot deliver all three simultaneously. When institutions hire enough agents to eliminate wait times, labor costs spiral out of control. When they cut staff to control expenses, service quality collapses, and compliance errors slip through as overworked teams rush through interactions. This structural tension exists because these systems were designed before omnichannel expectations and 24/7 availability became standard customer requirements.

- Banking customers expect 24/7 support availability, yet traditional call centers cost $5 to $15 per customer interaction, according to Financial Services Analysis. These expenses compound when customers abandon interactions mid-call or require multiple contacts to resolve simple issues, such as balance checks and password resets. The cost structure becomes unsustainable as transaction volumes grow, creating bottlenecks that delay fraud alerts and stretch account reviews across weeks.

- Banks that implement AI strategically achieve a 15-percentage-point improvement in the efficiency ratio, according to PwC's 2025 analysis, but the gains come from better risk management and customer retention rather than just lower labor costs. The question is not how many agents can be eliminated, but which interactions pose the greatest risk when handled inconsistently, and how to standardize them without degrading the experience. Cost reduction is a byproduct of better process control, not the primary objective.

- Poor AI implementations create compliance exposure that costs more than the agents they replaced. A chatbot that misunderstands a fraud claim and delays the investigation creates regulatory risk, while automated systems that provide incorrect account information violate consumer protection standards. Even systems achieving 90% accuracy in fraud detection still generate false positives requiring human review, escalation protocols, and potential customer compensation, according to LinkedIn research on AI in banking.

- Black-box automation fails audits because regulators do not accept algorithmic decisions without traceable logic that maps to compliance frameworks and internal policies. When a voice system denies a transaction or freezes an account, someone must explain why using reasoning that satisfies regulatory requirements. Teams often discover this gap during their first serious audit when they cannot justify classification decisions to regulators.

- Conversational AI handles authentication, transaction inquiries, and compliance-sensitive requests through voice interfaces that integrate with existing banking systems while maintaining audit trails that satisfy regulatory requirements.

What Is AI Customer Support Automation in Banking and Why Traditional Support Models Are Failing

AI customer support automation in banking uses conversational AI, natural language processing, and machine learning to handle customer interactions across chat, voice, and digital channels. These systems verify customer identity, process routine transactions, answer questions about rules and regulations, and escalate complex cases to human agents when necessary. The technology understands customer intent, follows all banking rules, and delivers personalized responses that make customers feel heard rather than like they are interacting with a machine.

🎯 Key Point: AI customer support automation transforms traditional banking support by combining intelligent technology with regulatory compliance to deliver 24/7 service that feels human while maintaining the security standards banks require.

"AI customer support automation uses conversational AI, natural language processing, and machine learning to handle customer interactions across multiple channels while maintaining regulatory compliance and personalized service."

💡 Example: When a customer asks about their account balance at 2 AM, AI automation can instantly verify their identity, access their account information, provide the real-time balance, and even offer personalized insights about recent transactions, all without requiring a human agent to be available.

What causes the mismatch between customer expectations and bank capacity?

Long wait times in banking support stem from a mismatch between customer expectations and operational capacity. Banking Industry Research reports that 70% of banking customers expect 24/7 support availability, yet most institutions operate contact centers designed for business hours and predictable volumes.

Balance checks, password resets, transaction disputes, and account status inquiries flood support lines at all hours. These repetitive, high-volume requests tie up agents who could handle fraud investigations or loan consultations.

How do rising call volumes impact banking costs and customer satisfaction?

Financial Services Analysis shows traditional call centers cost banks $5 to $15 per customer interaction, with costs escalating when customers disconnect or require multiple calls to resolve simple issues.

When a customer waits 20 minutes to check their balance, both sides lose: the bank pays for the customer's hold time and the agent's labor, while the customer loses trust in the bank.

Why can't traditional systems balance speed, accuracy, and compliance?

Banks face a core tension: customers want instant responses, regulators require perfect accuracy, and executives need cost efficiency. Manual support systems force institutions to choose which priority to sacrifice. Hiring enough agents to eliminate wait times increases labor costs. Cutting staff to control expenses degrades service quality. Attempting to maintain both allows compliance errors to slip through when overworked teams rush interactions.

How do disconnected channels damage customer trust?

Inconsistent answers across channels erode trust. A customer might chat, call, and email, but each conversation feels isolated. The chat agent cannot see phone call notes. The email response contradicts what the phone agent promised. The customer repeats their story three times, and their trust in the bank decreases with each repetition. This is a structural limitation of systems built before customers expected to use multiple channels.

How does AI automation transform banking interactions?

AI customer support automation transforms how banks interact with customers by automating the repetitive tasks that human agents currently handle. Conversational AI manages inquiries about balances, transaction histories, branch locations, and basic account questions with 91% accuracy, operating continuously without fatigue. These systems learn from each interaction, improving their responses over time while maintaining full compliance with financial regulations.

The old way of having human agents handle every customer contact creates problems as transaction volumes grow and customers demand immediate answers. This slows fraud alerts, extends account reviews to weeks, and leaves customers without access to funds during critical times. Our conversational AI solutions handle routine authentication and inquiry workflows through natural-language voice interactions, reducing resolution times from hours to seconds while freeing compliance teams to focus on cases requiring human judgment.

What makes voice AI agents different from chatbots?

Voice AI agents go beyond text-based chatbots, enabling customers to complete transactions via voice commands with accuracy matching that of human agents, without menu trees or information repetition across channels. These systems integrate with existing phone infrastructure, transforming legacy IVR systems into smart conversational interfaces that understand context and maintain continuity across every touchpoint.

But automation alone doesn't solve the deeper problem that most banks haven't yet acknowledged.

Why AI Automation in Banking Support Is Not Just Chatbots or Cost Reduction

Most banks treat AI automation as a way to cut costs: replace agents with bots, reduce headcount, and watch savings grow. But that approach overlooks the real problem automation must solve: controlling risk while maintaining service quality for more customers. Automating solely for cost reduction creates problems that exceed the savings from fewer agents.

🎯 Key Point: True AI automation in banking support isn't about replacing human agents; it's about enhancing capabilities while maintaining regulatory compliance and customer satisfaction.

"Banks that focus solely on cost reduction through automation often see a 15-20% increase in customer complaints and regulatory issues within the first year." — Banking Technology Research, 2024

⚠️ Warning: Cost-cutting automation without proper risk management can lead to compliance failures, customer churn, and reputation damage that far exceeds any short-term savings.

What compliance risks do poor implementations create?

Poor implementations create compliance exposure. A chatbot that misunderstands a fraud claim and delays the investigation creates regulatory risk, while an automated system that provides incorrect account information violates consumer protection standards.

According to LinkedIn research on AI in banking, systems achieving 90% accuracy in fraud detection still generate false positives requiring human review, escalation protocols, and potential customer compensation. The 10% failure rate doesn't disappear through automation; it becomes harder to catch and more expensive to fix.

How do keyword-matching systems create operational problems?

Banks using keyword-matching systems trigger alerts whenever the words "fraud" or "dispute" appear, flooding compliance teams with false positives. The automation shifts work to higher-cost departments without providing the context needed to resolve issues efficiently.

Customers repeat the same problems across three channels because the bot cannot access account history, the phone agent cannot see the chat transcript, and the compliance team works in a different system entirely.

How does intent understanding differ from keyword matching?

Good banking automation starts with understanding what customers want, not merely the words they use. The system must distinguish between "I want to dispute this charge" and "I have a question about how disputes work," as these require different resolution paths.

Context awareness means the AI knows the account status, transaction history, past interactions, and regulatory holds before responding. It can recognize whether a customer checking on a pending transfer is verifying the status, reporting an error, or recovering from fraud.
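The difference between keyword matching and intent understanding can be illustrated with a small sketch. This is a toy example only: the hand-written patterns and the intent labels `dispute_info` and `file_dispute` are hypothetical stand-ins for what a trained language-understanding model would provide in a real deployment.

```python
import re

def keyword_alert(utterance: str) -> bool:
    """Naive approach: raise an alert whenever a trigger word appears."""
    return bool(re.search(r"\b(fraud|dispute\w*)\b", utterance.lower()))

# Hypothetical patterns standing in for a trained intent model.
INFO_PATTERNS = [r"\bquestion about\b", r"\bhow (do|does)\b", r"\bwhat (is|are)\b"]
ACTION_PATTERNS = [r"\bi (want|need|would like) to dispute\b", r"\bdispute this\b"]

def classify_intent(utterance: str) -> str:
    """Separate 'asking about disputes' from 'filing a dispute'."""
    text = utterance.lower()
    if any(re.search(p, text) for p in INFO_PATTERNS):
        return "dispute_info"    # answer with general guidance
    if any(re.search(p, text) for p in ACTION_PATTERNS):
        return "file_dispute"    # open a case and route it for review
    return "unknown"             # fall back to a human agent

filing = "I want to dispute this charge"
asking = "I have a question about how disputes work"

# Keyword matching treats both utterances the same way.
print(keyword_alert(filing), keyword_alert(asking))
# Intent classification sends them down different resolution paths.
print(classify_intent(filing), classify_intent(asking))
```

Both utterances trip the keyword alert, but only one of them is an actual dispute, which is the gap the section describes.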

What makes secure data access essential for banking automation?

Secure data access separates functional automation from liability generators. The AI needs real-time account information with proper authentication, not generic responses that force customers to verify details the bank already has.

Access must include safeguards: which information can be shared over which channels, when human verification is required, and how to handle requests that trigger compliance reviews. Decision routing logic determines when AI resolves independently versus escalating to specialists, accounting for regulatory requirements rather than efficiency alone.
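As a rough sketch of how such safeguards might be expressed, the policy below maps each data field to the channels where it may be shared and marks whether a human must verify the request. The field names, channel names, and return values are all hypothetical; a real policy would come from the bank's compliance team.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class SharingRule:
    channels: frozenset                    # channels where the field may be shared
    needs_human_verification: bool = False

# Hypothetical policy table for illustration only.
POLICY = {
    "balance": SharingRule(frozenset({"voice", "chat"})),
    "full_account_number": SharingRule(frozenset()),  # never shared by the AI
    "wire_limit_change": SharingRule(frozenset({"voice"}), needs_human_verification=True),
}

def decide(field: str, channel: str, authenticated: bool) -> str:
    """Return the routing decision for one data request."""
    rule = POLICY.get(field)
    if rule is None or channel not in rule.channels:
        return "deny"                      # unknown field or disallowed channel
    if not authenticated:
        return "authenticate_first"
    if rule.needs_human_verification:
        return "escalate_to_human"
    return "allow"

print(decide("balance", "chat", authenticated=True))
print(decide("wire_limit_change", "voice", authenticated=True))
```

Keeping the policy as data rather than scattered conditionals makes it easier for compliance staff to review what the system is permitted to disclose.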

How do modern solutions handle compliance and integration?

Conversational AI tools handle login verification, transaction questions, and rule-based requests through voice systems integrated with current banking platforms. Our conversational AI solution helps banks automate these routine interactions while maintaining compliance with financial regulations.

Teams report that everyday requests get resolved without agent involvement while regulatory compliance is maintained. Automation handles straightforward interactions, freeing human workers to focus on cases requiring critical thinking, investigation, or relationship-building.

How does strategic AI implementation change banking efficiency?

According to PwC's analysis of AI in banking, banks that deploy AI strategically achieve a 15-percentage-point improvement in their efficiency ratio through enhanced risk management and customer satisfaction, not workforce reduction alone.

Automation in banking is about controlling risk while growing service. The real question isn't "how many workers can we get rid of?" It's "which customer interactions create the most risk when handled inconsistently, and how do we standardize those interactions without diminishing customer satisfaction?"

Why does focusing on risk reduction outperform cost-cutting automation?

Costs decrease as a side effect of better process control. Automation reduces risk by eliminating repetitive work, shortening resolution times, and improving consistency.

When you automate solely to cut costs, you create coverage gaps, increase escalations, and build systems requiring constant human oversight due to insufficient context and routing logic.

Even well-designed automation fails if it cannot connect with the systems where customer data lives.

How AI Customer Support Automation Works in Banking Systems and Where It Actually Delivers Results

Voice AI systems in banking work through a step-by-step decision process: customer input arrives through voice or chat, natural language processing figures out what the customer actually wants, an authentication layer checks their identity, a decision engine determines whether to handle it right away or pass it to a human agent, and action execution finishes the task. If intent detection misreads "I need my balance" as a fraud claim, everything that comes after breaks.
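The flow described above can be sketched as a short pipeline. Everything here is a simplified assumption: `detect_intent` uses substring checks in place of a real NLP model, `caller_verified` stands in for the authentication layer, and the `AUTOMATABLE` set is a hypothetical list of intents the bank has approved for self-service.

```python
from dataclasses import dataclass

@dataclass
class Outcome:
    intent: str
    handled_by: str   # "ai" or "human"
    detail: str

def detect_intent(utterance: str) -> str:
    """Stand-in for the NLP step; a real system would use a trained model."""
    text = utterance.lower()
    if "fraud" in text or "unauthorized" in text:
        return "fraud_claim"
    if "balance" in text:
        return "balance_inquiry"
    return "unknown"

# Hypothetical set of intents approved for full automation.
AUTOMATABLE = {"balance_inquiry"}

def handle(utterance: str, caller_verified: bool) -> Outcome:
    intent = detect_intent(utterance)                  # NLP processing
    if not caller_verified:                            # authentication layer
        return Outcome(intent, "ai", "ask caller to verify identity")
    if intent not in AUTOMATABLE:                      # decision engine
        return Outcome(intent, "human", "transfer with conversation context")
    return Outcome(intent, "ai", "read back current balance")  # action execution

print(handle("I need my balance", caller_verified=True))
print(handle("There is an unauthorized charge on my card", caller_verified=True))
```

Because every later stage consumes the intent label, a wrong label at the top propagates through authentication, routing, and execution without any stage noticing.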

🎯 Key Point: The entire AI workflow depends on accurate intent detection at the start of the flow. One misinterpretation can derail the entire customer interaction.

| AI Process Step | Function | Impact of Failure |
| --- | --- | --- |
| Customer Input | Voice/chat capture | No data to process |
| NLP Processing | Intent recognition | Wrong response path |
| Authentication | Identity verification | Security breach risk |
| Decision Engine | Route determination | Inefficient handling |
| Action Execution | Task completion | Customer frustration |

"Intent detection accuracy is the foundation of successful AI banking interactions - when this fails, customer satisfaction drops significantly." — Banking AI Implementation Study, 2024

⚠️ Warning: Even advanced AI systems can misinterpret customer requests, which is why most banks maintain human oversight for complex transactions and sensitive account operations.

What determines whether AI automation succeeds or fails?

The critical difference between effective and broken automation lies in where you draw the boundary between machine and human judgment. AI handles repetition with precision: answering "What's my balance?" thousands of times daily, retrieving transaction history in seconds, and processing routine transfers following exact rules.

It cannot read emotional subtext, evaluate financial hardship appeals, or make judgment calls on disputed charges where context determines fairness. When banks deploy AI without clear handoff rules, customers get trapped in loops where the system confidently provides wrong answers because it lacks the logic to recognize its own limits.

How does AI handle routine banking inquiries?

Checking balances, requesting transaction history, and inquiring about interest rates follow patterns that conversational AI handles faster than human agents. The system verifies the caller's identity, retrieves database information, and provides answers in under 30 seconds. According to AI in Banking 2025: Smarter Customer Service & Collections, this automation enables 24/7 support availability without staffing night shifts or weekends.

AI doesn't mishear account numbers, forget verification steps, or change its tone based on call volume.

What happens when inquiries become complex?

A routine request stays routine only while the customer's needs remain within system boundaries. The moment someone asks, "Why was I charged twice?" instead of "Show me my charges," the decision engine must recognize it has entered dispute territory and route to a human.

Many implementations fail here because they optimize for containment rate rather than accuracy, pushing AI to attempt resolution beyond its capability.

How do automated payment systems handle standard transactions?

Fund transfers work well with automation when they follow standard patterns: verified payee, sufficient balance, and no compliance flags. The voice AI guides customers through amount confirmation, account verification, and security checks. Speed improves, error rates drop, and customers complete transfers without waiting in line.
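A minimal sketch of that "standard pattern" check follows. The field names and the four routing outcomes are assumptions made for illustration; in particular, declining on insufficient funds is one possible policy, not something the source prescribes.

```python
from dataclasses import dataclass
from decimal import Decimal

@dataclass
class TransferRequest:
    amount: Decimal
    available_balance: Decimal
    payee_verified: bool
    compliance_flags: tuple = ()   # e.g. ("aml_review",), hypothetical flag names

def route_transfer(req: TransferRequest) -> str:
    """Automate only transfers that match the standard pattern."""
    if req.compliance_flags:
        return "human_review"              # never auto-resolve flagged transfers
    if not req.payee_verified:
        return "additional_verification"   # new or unverified payee
    if req.amount <= 0 or req.amount > req.available_balance:
        return "decline"                   # invalid amount or insufficient funds
    return "auto_execute"

standard = TransferRequest(Decimal("50.00"), Decimal("100.00"), payee_verified=True)
flagged = TransferRequest(Decimal("50.00"), Decimal("100.00"), True, ("aml_review",))
print(route_transfer(standard), route_transfer(flagged))
```

Checking compliance flags first means a flagged transfer always reaches a person, regardless of how routine the rest of the request looks.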

What happens when AI compliance systems create processing bottlenecks?

The system breaks when transfers trigger anti-money-laundering reviews or involve new payees who require additional verification. I've watched AI-driven compliance systems create what one user described as "death loops": a simple business-to-personal transfer freezes an account indefinitely.

The decision engine flags the transaction but provides no transparent pathway for resolution. The customer receives automated messages promising a review within days, yet actual processing stalls because no human can override the AI's risk assessment. This isn't AI replacing human judgment; it's AI blocking access to human judgment entirely.

How does AI accelerate loan processing workflows?

Credit score checks, income verification, and preliminary approval decisions happen in minutes instead of days when AI handles the processing workflow. The system ingests application data, checks credit bureaus, applies underwriting rules, and returns a conditional decision. Automation eliminates waiting time for straightforward applications where income, credit, and debt ratios fall within defined parameters.

What are the limitations of automated underwriting?

Complex applications expose AI's limits. Self-employed income, recent credit events requiring explanation, or unusual collateral situations demand human underwriters who can weigh factors beyond the algorithm's training. AI replaces repetitive data gathering and rules-based screening, but cannot replace the judgment needed when applicants fall outside standard risk models.
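The split between rules-based screening and human underwriting might look like the sketch below. The thresholds `MIN_CREDIT_SCORE` and `MAX_DEBT_TO_INCOME` are invented for illustration and are not underwriting guidance; the `Application` fields are likewise hypothetical.

```python
from dataclasses import dataclass

# Hypothetical thresholds for illustration only.
MIN_CREDIT_SCORE = 680
MAX_DEBT_TO_INCOME = 0.36

@dataclass
class Application:
    credit_score: int
    monthly_income: float
    monthly_debt: float
    self_employed: bool = False
    recent_credit_event: bool = False

def prescreen(app: Application) -> str:
    """Return a conditional decision or refer the file to a human underwriter."""
    # Cases outside the standard risk model always go to a person.
    if app.self_employed or app.recent_credit_event or app.monthly_income <= 0:
        return "refer_to_underwriter"
    debt_to_income = app.monthly_debt / app.monthly_income
    if app.credit_score >= MIN_CREDIT_SCORE and debt_to_income <= MAX_DEBT_TO_INCOME:
        return "conditional_approval"
    # Anything that misses the rules is referred rather than auto-declined.
    return "refer_to_underwriter"

print(prescreen(Application(720, 6000.0, 1500.0)))
print(prescreen(Application(720, 6000.0, 1500.0, self_employed=True)))
```

In this sketch the automated path can only approve conditionally or refer; it never issues a final decline, which keeps adverse decisions with a human.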

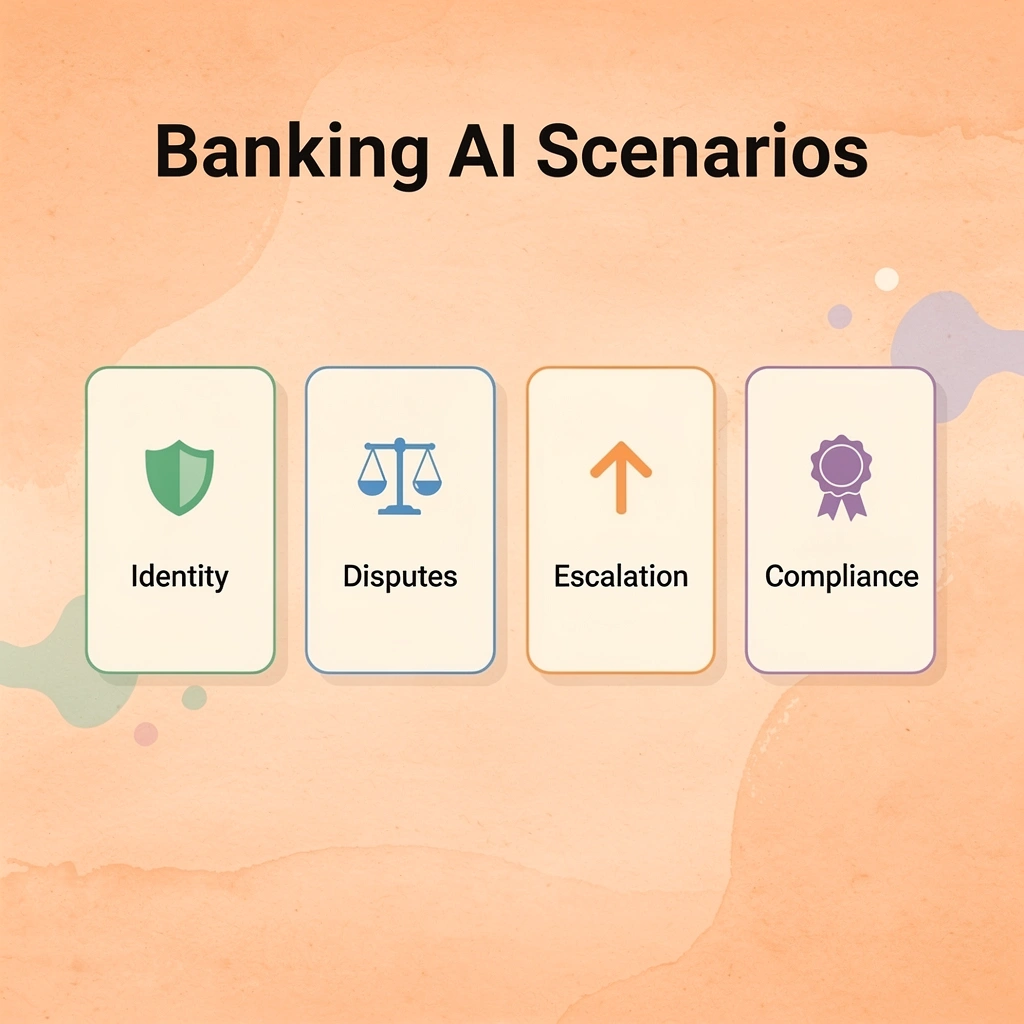

What types of issues require mandatory human intervention?

Fraud disputes, account restrictions, and emotionally charged complaints require skills that AI lacks. When a customer calls about unauthorized charges, they need someone who can evaluate their explanation, cross-reference behavior patterns the system might have missed, and make a trust-based decision about provisional credit.

When someone's account gets frozen and they can't access rent money, they need a human who can recognize urgency and escalate appropriately, not a chatbot offering scripted empathy while the decision engine processes in silence.

Why do production environments expose AI limitations?

Demos show AI handling clean, contained requests with obvious intent and yes-or-no answers. Real-world use involves angry customers, incomplete information, and situations where the right answer depends on context that the system cannot access.

Most banks treat escalation as a failure metric rather than a design feature, which guarantees their AI will attempt to resolve cases it should route immediately. But knowing when AI works and when it fails means nothing if you can't implement it without creating new risks.

How to Implement AI Customer Support Automation in Banking Safely

What types of banking tasks work best with voice AI?

Voice AI works when the problem is volume, not variability. Balance inquiries, transaction history, and payment scheduling follow predictable patterns with binary outcomes. According to PwC's 2025 analysis, banks using AI in high-volume, low-complexity interactions achieved a 15-percentage-point improvement in efficiency ratios.

This gain disappears when using AI for decisions where context determines the right answer, such as disputed charges that depend on merchant relationships or account restrictions tied to legal holds that the system cannot access.

How does backend integration affect AI performance?

The critical difference is backend integration. AI cannot access what your core banking platform does not expose. If authentication, account status, and transaction data do not flow cleanly into the voice system, automation breaks down.

Failures typically stem from data latency, when account updates lag behind customer expectations, or from weak access controls, when the AI shares information over unverified channels. Strong implementation ensures the voice layer functions as an interface, not an independent decision engine.

How does black-box automation fail regulatory audits?

Black-box automation fails audits because regulators don't accept "the AI flagged it" as justification. When a voice system denies a transaction or freezes an account, someone must explain why using logic that maps to compliance frameworks and internal policies.

If the decision pathway isn't traceable, you've traded operational efficiency for regulatory exposure. Teams often discover this gap during their first serious audit, when they cannot justify classification decisions to regulators.
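One way to make a decision pathway traceable is to write a structured record at the moment the decision is made. The sketch below assumes a hypothetical record layout; the identifiers `RULE-7` and `policy/section-4.2` are placeholders, and a real system would align the fields with its own compliance framework and storage.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone

@dataclass
class DecisionRecord:
    interaction_id: str
    action: str              # what the system did
    rule_id: str             # which rule fired
    policy_reference: str    # internal policy the rule maps to
    inputs: dict             # the facts the rule evaluated
    decided_at: str          # UTC timestamp in ISO 8601 format

def record_decision(interaction_id, action, rule_id, policy_reference, inputs) -> str:
    """Serialize one decision so an auditor can see why it was made."""
    record = DecisionRecord(
        interaction_id, action, rule_id, policy_reference, inputs,
        datetime.now(timezone.utc).isoformat(),
    )
    return json.dumps(asdict(record), sort_keys=True)

entry = record_decision(
    "int-001", "freeze_account", "RULE-7", "policy/section-4.2",
    {"risk_score": 0.93, "new_payee": True},
)
print(entry)
```

With the rule, policy reference, and inputs stored together, the answer to "why was this account frozen?" becomes a lookup rather than a reconstruction.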

What data privacy violations occur with AI systems?

Data privacy violations occur when AI systems access customer information beyond what's needed for the immediate task. A voice agent verifying identity doesn't need full transaction history, yet poorly scoped permissions often grant it access anyway.

Automation violates compliance when it applies policies without recognizing exceptions, such as hardship accommodations or fraud victim protections that require human judgment. Conversational AI platforms address this by enforcing strict access controls and escalation thresholds, ensuring that the system requests only the required data and routes sensitive cases to human agents before taking action.
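Task-scoped access can be sketched as a filter that returns only the fields a given task is allowed to see. The task names and field names in `TASK_SCOPES` are hypothetical, and a production system would enforce this at the data-access layer rather than in application code alone.

```python
# Hypothetical mapping from task to the minimum fields it needs.
TASK_SCOPES = {
    "verify_identity": {"name", "date_of_birth", "phone_last4"},
    "balance_inquiry": {"name", "available_balance"},
}

def scoped_view(record: dict, task: str) -> dict:
    """Return only the fields permitted for this task; unknown tasks get nothing."""
    allowed = TASK_SCOPES.get(task, set())
    return {key: value for key, value in record.items() if key in allowed}

customer = {
    "name": "A. Customer",
    "date_of_birth": "1990-01-01",
    "phone_last4": "1234",
    "available_balance": "2500.00",
    "transactions": ["..."],
}

# Identity verification never sees the balance or the transaction history.
print(scoped_view(customer, "verify_identity"))
```

Defaulting unknown tasks to an empty scope means a new workflow gets no data until someone explicitly grants it.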

How do hybrid models balance automation with human oversight?

The goal is controlled automation with human fallback. AI handles authentication, retrieval, and routine transactions, while humans handle disputes, disclosures of financial hardship, and requests involving emotional context or regulatory gray areas. The boundary must be clear, not probabilistic. If the system encounters language indicating distress, confusion about fees, or mention of fraud, it escalates immediately without attempting resolution.

How should escalation thresholds be calibrated for optimal performance?

Escalation thresholds work like circuit breakers. Set them too high, and the AI will attempt cases it's not ready for. Set them too low, and human agents get overwhelmed with false alarms. The key is to monitor which interactions the AI completes successfully and which ones it escalates to humans, then adjust the triggers based on those results.
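The trade-off can be made concrete by replaying a reviewed sample of past interactions against candidate thresholds. This sketch assumes each log entry carries a risk score between 0 and 1 and a reviewer's judgment of whether the AI could have resolved the case correctly; both the log format and the scores are hypothetical.

```python
def evaluate_threshold(log, threshold):
    """Replay a reviewed log: interactions at or above the threshold escalate.

    Each log entry is (risk_score, ai_can_resolve), where ai_can_resolve is a
    reviewer's label for whether the AI would have handled the case correctly.
    """
    escalated = [entry for entry in log if entry[0] >= threshold]
    attempted = [entry for entry in log if entry[0] < threshold]
    failures = sum(1 for _, ai_can_resolve in attempted if not ai_can_resolve)
    return {
        "escalation_rate": len(escalated) / len(log),
        "ai_failure_rate": failures / len(attempted) if attempted else 0.0,
    }

# Hypothetical reviewed sample.
reviewed_log = [(0.1, True), (0.3, True), (0.6, False), (0.9, False)]

for candidate in (0.8, 0.5):
    print(candidate, evaluate_threshold(reviewed_log, candidate))
```

On this sample, the higher threshold escalates less but lets the AI attempt a case it fails, while the lower threshold escalates more and removes that failure. Rerunning the comparison on fresh logs at each review shows when the trigger needs to move.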

Customer language changes, fraud methods advance, and regulatory expectations shift over time. Review decision logs and escalation rates weekly to maintain risk control. However, this only works if you can see what the system does when real customers use it, not just in controlled testing.

See How AI Customer Support Automation Handles Real Banking Conversations Before You Deploy It

Trusting AI customer support automation in production requires observing how it handles unexpected requests, forgotten security answers, transaction disputes outside standard scripts, and situations requiring regulatory escalation. Documentation and vendor promises fall short when real customer interactions and compliance standards are at stake.

🎯 Key Point: Bland lets you test conversational AI in controlled banking scenarios before deployment. Our conversational AI helps you watch how the system handles identity verification, routes account inquiries, manages escalation logic, and responds to questions outside expected patterns. You'll see exactly where human handoff occurs, how it maintains compliance boundaries, and whether it distinguishes between routine balance checks and fraud alerts requiring immediate attention.

"Most financial institutions struggle not with adopting AI automation, but with trusting it when money, compliance, and customer relationships are at stake." — Banking Technology Research, 2024

| AI Testing Scenario | What to Validate | Risk Level |
| --- | --- | --- |
| Identity Verification | Authentication flow accuracy | High - Security breach risk |
| Transaction Disputes | Escalation trigger timing | Critical - Regulatory compliance |
| Account Inquiries | Response accuracy & routing | Medium - Customer satisfaction |
| Fraud Alerts | Immediate escalation protocols | Critical - Financial liability |

Can the system authenticate users without friction? Can it route sensitive requests safely? Does it escalate when financial or regulatory risk surfaces? These questions determine whether automation reduces operational cost or creates liability.

Book a demo to walk through real interaction scenarios, see how the system handles authentication and escalation triggers, and observe how it integrates into secure, compliant workflows. You'll validate performance under conditions that matter to your institution, not just ideal test cases.