Call centers evaluating AI solutions often compare IBM Watson with ChatGPT, two platforms with fundamentally different approaches to customer service automation. Watson brings enterprise-grade infrastructure and industry-specific training, while ChatGPT offers advanced natural language capabilities and broader conversational flexibility. Both promise to reduce response times and improve customer satisfaction, but their performance varies significantly in real call center environments. Understanding these differences helps businesses choose the right solution for their specific support operations.

The decision between established enterprise platforms and modern language models ultimately depends on implementation quality and practical performance metrics. Success requires more than just selecting the right technology—it demands AI systems that reliably handle real customer conversations and scale as support demands grow. For businesses seeking proven conversational AI solutions, Bland offers conversational AI phone agents designed specifically for customer support operations.

Summary

- The typical call center AI stack promises efficiency but delivers a new maintenance burden that nobody anticipated. Three to six months after launch, performance metrics slide as the AI that successfully handled 60% of queries in month one drops to 45% by month four because nobody updated it when policies changed. The hidden cost isn't the license fee; it's the ongoing engineering time required to prevent your smart system from becoming progressively dumber as business operations evolve.

- Watson became the default enterprise choice not because it offered the most advanced technology, but because it carried IBM's century-old reputation for reliability when procurement teams needed to justify AI spending to executives in 2016. The decision felt less like innovation and more like insurance, a way to say "we're doing AI" without the career risk of betting on an unproven startup. Watson's integration with existing CRM platforms like Salesforce and ServiceNow mattered more than accuracy improvements because call centers operate on thin margins and rely on legacy systems that cost millions to implement.

- Customer service interactions have fundamentally changed since Watson's architecture was designed for rule-based queries in 2015. According to Gartner's 2023 Customer Service and Support Leader Poll, 58% of service interactions now involve complex, multi-issue inquiries that require contextual understanding across multiple systems. Callers today switch topics mid-conversation, reference previous interactions across channels, and expect systems to understand context without repetition, exposing the limitations of structured decision-tree approaches.

- The philosophical split between Watson and ChatGPT creates completely different user experiences when frustrated customers call about billing errors. Watson asks, "what's the approved response?" while ChatGPT asks, "what makes sense here," prioritizing control and governance versus conversational flexibility. This difference determines what breaks when real customers arrive with real problems: Watson excels at structured workflows in regulated environments, while ChatGPT handles the messy reality of topic switches, interruptions, and emotional nuance.

- American Express found that 67% of customers have hung up out of frustration when they couldn't reach a representative. The critical difference between these AI systems lies in how they handle failure: ChatGPT often recovers gracefully, using conversational context to clarify misunderstandings, while Watson typically escalates to human agents because its architecture wasn't designed for improvisational dialogue. That recovery capability determines whether your AI reduces call volume or just adds steps before reaching the same outcome.

- Voice-first conversational AI addresses this gap by deploying voice agents that handle live calls in real time, processing speech as it happens and adjusting tone as conversations unfold, without the rigid scripting of Watson or the text-based limitations of ChatGPT.

Why Call Centers Are Struggling With AI Systems Like IBM Watson Today

Why do AI systems struggle with real customer conversations?

Call centers invested in AI systems expecting faster answers and lower costs, but gained a new layer of complexity that slows operations. The problem isn't what the AI can do on paper; it's that most setups can't handle the messy, emotional, exception-heavy reality of actual customer conversations.

How does the context recognition gap affect customer trust?

According to Salesforce, 73% of customers expect companies to understand their unique needs and expectations. Yet AI tools frequently fail to recognize context and respond with nuance.

When a frustrated caller explains a billing error for the third time, the system treats it as a fresh question. When someone's voice tightens with urgency, the bot continues its scripted path. This disconnect erodes customer loyalty.

Why do sophisticated AI systems struggle with real customer scenarios?

The typical call center AI stack looks impressive on paper: natural language processing, sentiment analysis, predictive routing. Then reality arrives. A customer calls about a refund for a canceled subscription tied to a billing dispute from two months ago. The AI, trained on clean data and simple scenarios, starts guessing—suggesting articles, offering to reset passwords, and asking the caller to repeat information already in the CRM.

What happens when agents must constantly override AI recommendations?

Agents override the AI's suggestions manually because the system can't connect the dots across departments or recognize when a situation requires judgment rather than pattern matching. Agents spend more time correcting AI mistakes than handling calls the old way. American Express found that 67% of customers have hung up out of frustration when they couldn't reach a representative. When your AI creates more of these moments instead of fewer, you've automated abandonment, not support.

What makes AI maintenance so demanding?

Implementation is expensive, but maintenance never ends. Every product update, policy change, or seasonal promotion requires retraining. Every new edge case the AI encounters needs review. Every accuracy drop demands investigation. Teams assumed occasional tuning would suffice. Instead, they gained a second full-time job keeping the AI current enough to avoid embarrassing failures.

When do organizations realize the true cost of maintenance?

Most organizations discover this three to six months after launch, when performance metrics drop and customer complaints increase. The AI that handled 60% of questions successfully in month one now handles 45% in month four because nobody updated it when the return policy changed.

Conversational AI solutions address this by building voice agents that adapt to business changes without constant retraining, maintaining consistent performance as operations evolve. The hidden cost isn't the license fee: it's the ongoing engineering time needed to prevent your smart system from degrading.
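The month-one versus month-four drop described above can be caught early with a simple drift check. The sketch below is illustrative only: the `MonthlyStats` class, the `find_drift` function, the 10% threshold, and the month-two and month-three figures are all assumptions, not values from any vendor or study. Only the 60% and 45% endpoints come from the scenario above.

```python
from dataclasses import dataclass


@dataclass
class MonthlyStats:
    month: str
    total_queries: int
    resolved_by_ai: int  # queries closed without a human agent

    @property
    def containment_rate(self) -> float:
        """Share of queries the AI resolved on its own."""
        if self.total_queries == 0:
            return 0.0
        return self.resolved_by_ai / self.total_queries


def find_drift(history: list[MonthlyStats], max_relative_drop: float = 0.10) -> list[str]:
    """Return months whose containment rate fell more than
    max_relative_drop (hypothetical threshold) below the first month."""
    if not history or history[0].containment_rate == 0:
        return []
    baseline = history[0].containment_rate
    flagged = []
    for stats in history[1:]:
        relative_drop = (baseline - stats.containment_rate) / baseline
        if relative_drop > max_relative_drop:
            flagged.append(stats.month)
    return flagged


# Months 2 and 3 are made-up intermediate values for illustration.
history = [
    MonthlyStats("month 1", 1000, 600),
    MonthlyStats("month 2", 1000, 570),
    MonthlyStats("month 3", 1000, 510),
    MonthlyStats("month 4", 1000, 450),
]
print(find_drift(history))
```

Measuring the drop relative to the launch baseline makes the slide visible sooner: going from 60% to 45% is 15 percentage points, but it is a 25% relative decline in what the AI handles on its own.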

Why do legacy systems resist AI integration?

Legacy systems weren't built for AI integration. They were built before "API" was a common term, when data lived in separate departmental silos. Connecting modern AI to these systems requires custom middleware, data transformation layers, and workarounds that increase the number of failure points.

One outdated endpoint can break the entire chain. One mismatched data format can cause the AI to hallucinate confident but meaningless responses.

What happens when AI meets incompatible infrastructure?

The organizations struggling most tried to add new AI to old infrastructure without addressing fundamental incompatibility. When your AI needs real-time customer history but your database updates overnight, you've created a system that is confidently wrong for 23 hours daily.
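One way to limit the damage from overnight batch updates is to check how old a customer record is before the AI answers from it. This is a minimal sketch under stated assumptions: the function names, the action labels, and the 15-minute freshness limit are hypothetical and would need to match how quickly your own records change.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical freshness limit, not a recommended value.
MAX_RECORD_AGE = timedelta(minutes=15)


def is_fresh(last_synced: datetime, now: datetime,
             max_age: timedelta = MAX_RECORD_AGE) -> bool:
    """True when the record was synced recently enough to trust."""
    return now - last_synced <= max_age


def choose_action(last_synced: datetime, now: datetime) -> str:
    """Answer from the record only when it is fresh; otherwise fall back
    to a live lookup or a human handoff instead of guessing."""
    if is_fresh(last_synced, now):
        return "answer_from_record"
    return "live_lookup_or_handoff"


# A record synced at 02:00 is twelve hours old by mid-afternoon.
now = datetime(2024, 1, 10, 14, 0, tzinfo=timezone.utc)
overnight_sync = datetime(2024, 1, 10, 2, 0, tzinfo=timezone.utc)
print(choose_action(overnight_sync, now))
```

The check does not fix the underlying architecture, but it turns a confidently wrong answer into an explicit fallback.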

When your voice AI needs clean audio but your phone system compresses everything to save bandwidth, accuracy drops before the conversation starts. These aren't problems better prompts or training data solve—they're architectural mismatches requiring rethinking how systems connect.

This is where most call centers realize their AI stack isn't solving the problem they purchased it for.

Related Reading

- Conversational AI Examples

- Conversational AI Architecture

- How To Deploy Conversational AI

- Types Of AI Chatbots

- How To Build A Conversational AI

- How To Improve Response Time to Customers

- Conversational AI Future

- Conversational AI Pricing

- Customer Service ROI

- Generative AI Vs Conversational AI

- Conversational AI In Ecommerce

Why IBM Watson Became the Default Choice for Call Centers

Most call centers chose IBM Watson because it was the safest enterprise AI option. When procurement teams needed to justify AI spending to executives in 2016, Watson offered IBM's long history of reliable business solutions. No one got fired for choosing Big Blue.

🎯 Key Point: IBM Watson became the default choice not because it was the best AI solution, but because it carried the lowest risk for decision-makers who needed to justify enterprise-level investments.

"No one got fired for choosing Big Blue – this became the guiding principle for call center procurement decisions in the early days of AI adoption." — Enterprise AI Decision Making, 2016-2018

💡 Tip: When evaluating AI solutions for your call center, consider both technical capabilities and vendor reliability – the safest choice isn't always the most innovative one.

The enterprise safety blanket

IBM built dominance on trust earned over decades of selling to cautious IT departments. When Watson won Jeopardy in 2011, it provided enterprise buyers with a compelling narrative: AI that defeated humans on national television, backed by a company already embedded in their systems.

IBM's positioning as a Leader in the 2025 IDC MarketScape for Worldwide General-Purpose Conversational AI Platforms reinforced this view. The technology didn't need to be the most advanced; it needed to be something leaders could defend in budget meetings and explain to boards that remembered IBM as the company known for mainframes and reliability.

When structured workflows matched predictable problems

Watson excelled at automating repetitive, rule-based interactions, such as password resets, account balance inquiries, and appointment scheduling. These weren't conversations requiring careful thought—they were decision trees dressed up as dialogue. Watson's strength lay in understanding structured questions and delivering predetermined responses, precisely what early call center automation needed.

The architecture fit the moment perfectly. Most customer service interactions followed predictable patterns: callers asked similar questions, agents followed scripts, and knowledge bases organized information in a hierarchical manner. Watson could ingest those scripts, map those hierarchies, and handle first-level queries without human intervention.

Artificial intelligence (AI) and automation have fundamentally changed contact centers by improving operations, enhancing customer experience, and reducing operational costs, and Watson was positioned at the center of that shift.

Why did integration matter more than AI capabilities?

Watson didn't arrive alone. It came bundled with IBM's existing relationships with Salesforce, ServiceNow, and every major CRM platform that call centers already use. Integration wasn't a feature; it was the entire value proposition. While competitors promised better AI, IBM promised AI that worked with your current systems without replacing infrastructure that cost millions to implement.

How does seamless integration benefit modern call centers?

This mattered more than improvements in accuracy or conversational sophistication. Call centers operate on thin margins with legacy systems held together by custom integrations and institutional knowledge. Watson offered a path to AI that didn't require starting over. Teams using our conversational AI platform benefit from similar integration advantages, connecting voice agents directly to existing CRM and telephony infrastructure without requiring complete system overhauls.

Why couldn't Watson adapt to changing customer expectations?

Watson was built for a customer service world that no longer exists. Callers today don't follow scripts: they switch topics mid-conversation, reference previous interactions across channels, and expect systems to understand context without repetition. The rule-based foundation that made Watson reliable in 2015 became its limitation by 2020.

Customer expectations evolved as conversational AI improved. People expected systems to handle interruptions, recognize urgency, and adapt to emotional cues. Watson's architecture, optimized for structured queries and predetermined paths, struggled with the messy reality of actual human conversation. The technology that won Jeopardy by ingesting encyclopedias couldn't keep pace with customers who expected it to remember their last three calls and understand their frustration.

What happens when enterprise legacy meets modern technology?

But understanding why Watson became the default choice reveals only half the story. The real question is what happens when you compare that enterprise legacy against technology built for a different purpose.

Related Reading

- Conversational AI for Customer Service

- Conversational AI for Sales

- Conversational AI Lead Scoring

- Conversational AI Leaders

- Conversational AI in Telecom

- Conversational AI in Financial Services

- Benefits Of Conversational AI

- Best-rated voice assistants for conversational AI

IBM Watson vs ChatGPT Detailed Comparison in a Call Center Environment

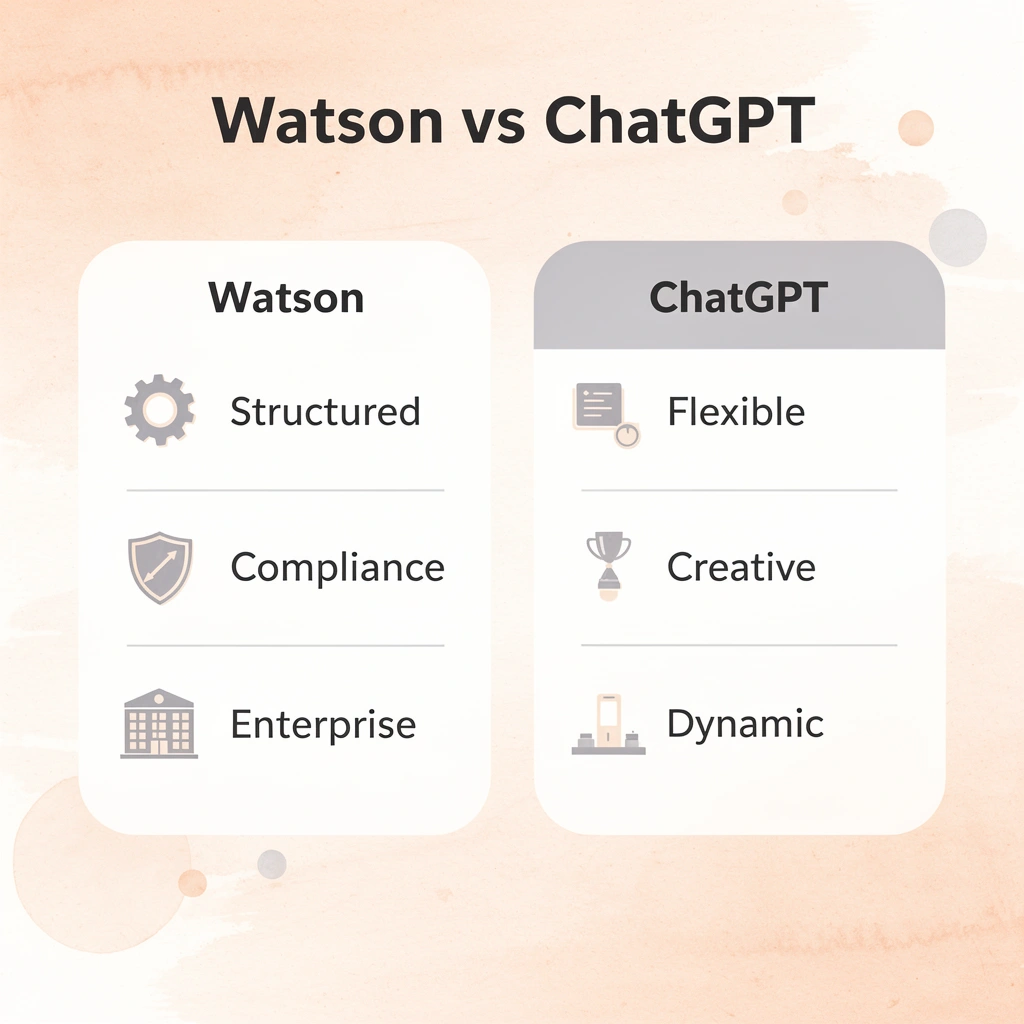

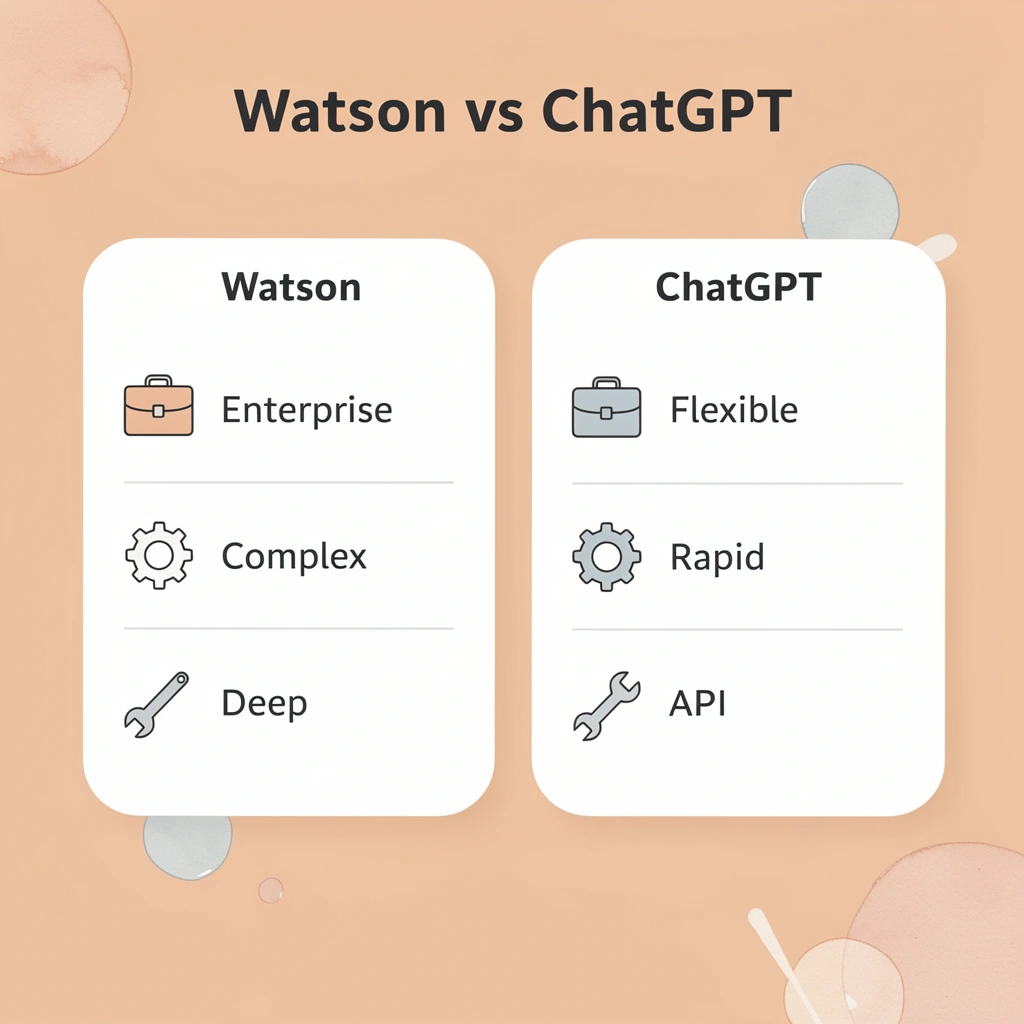

Watson improves structure; ChatGPT improves conversation. This fundamental difference matters more than feature lists or pricing tiers when choosing between them for enterprise voice operations.

| Feature | IBM Watson | ChatGPT |
| --- | --- | --- |
| Primary Strength | Structured workflows & compliance | Natural conversation & flexibility |
| Best For | Regulated industries, complex routing | Dynamic interactions, creative responses |
| Integration | Deep enterprise system connectivity | API-first, rapid deployment |
| Customization | Industry-specific templates | Prompt engineering & fine-tuning |
| Compliance | Built-in regulatory frameworks | Requires additional configuration |

🎯 Key Point: The choice between Watson and ChatGPT isn't about which is objectively better—it's about which architectural approach aligns with your call center's specific operational needs and regulatory requirements.

"The most successful AI implementations in call centers focus on matching the technology's core strengths to the organization's primary use cases, rather than trying to force a one-size-fits-all solution." — Enterprise AI Research, 2024

🔑 Takeaway: Watson excels when you need predictable, compliance-heavy interactions with deep system integration, while ChatGPT shines when you want natural, conversational flexibility that can adapt to unexpected customer scenarios in real-time.

Core Purpose and Design Philosophy

IBM Watson was built for large enterprises requiring control over their operations. It began as a decision-support tool that analyzed structured data in healthcare, finance, and customer service. With Watsonx (launched in 2023), IBM shifted toward managing foundation models, emphasizing governance, compliance, and AI lifecycle management. The philosophy centers on trust through transparency: every model decision must be auditable, and every data point traceable.

What makes ChatGPT's approach fundamentally different?

ChatGPT came from a different direction. OpenAI designed it as a general-purpose language model for open-ended conversation without specialized training for specific fields. It excels at creative text generation, natural dialogue, and broad knowledge retrieval. The underlying belief is that conversational fluency unlocks value across use cases, not predefined workflows. ChatGPT Enterprise and API offerings brought it into corporate environments, but its foundation remains conversational flexibility, not enterprise governance.

Target Users and Accessibility

Watsonx serves business developers, data scientists, and industries requiring strict regulatory compliance. In healthcare, finance, and government, IBM's system provides detailed control and governance tools that satisfy auditors and legal teams. You can train custom models, deploy them on-premises or in a hybrid environment, and maintain the regulatory oversight these sectors demand.

How do accessibility and usability compare between platforms?

ChatGPT serves individual users, content creators, small businesses, and enterprise teams through ChatGPT Enterprise. Watson implementations can reportedly achieve a 30% reduction in call handling time for structured support queries, but they require significant upfront configuration. ChatGPT, by contrast, offers immediate usability: start conversations, integrate via API, or deploy across teams without consultation. The tradeoff is less control over model behavior and data governance.

Technology Architecture and Customization

Watsonx focuses on foundation model orchestration, helping you train, fine-tune, and deploy models while maintaining control over your data. IBM's modular architecture supports explainable AI, allowing you to trace how models arrive at specific outputs, which is critical for call centers handling sensitive customer data or compliance-heavy interactions. You can customize intent recognition, build domain-specific models, and run them in environments that meet your security requirements.

What technology architecture does ChatGPT use?

ChatGPT uses OpenAI's GPT architecture (GPT-4, GPT-4o, and newer versions), trained on internet data and refined through human feedback for tasks like answering questions, summarizing text, and writing code. You can customize it via the API, custom instructions, and plugins, though with limited control over implementation details. It runs on OpenAI's cloud servers unless deployed through Azure OpenAI Service for organizations requiring data security. The design prioritizes natural conversation over explainability.

Conversational Ability and NLP Performance

ChatGPT excels at understanding context, supporting multiple languages, and engaging in human-like conversations. For call centers handling complex customer questions, creative problem-solving, and dynamic interactions, ChatGPT's communication capabilities are essential. It generates empathetic responses, adapts its tone throughout conversations, and navigates ambiguous situations without interruption.

What are Watson's strengths in structured NLP tasks?

Watson offers solid task-based NLP for intent recognition, FAQ responses, and structured customer service routing. It can classify issues and trigger workflows based on customer input. However, it struggles when customers go off-script or ask questions that don't match predefined intents. Watson optimizes for predictable interactions; ChatGPT optimizes for conversational range.

How do modern platforms balance structure and flexibility?

Most call centers need both: structure for following rules and routing calls, and conversational flexibility for when customers go off-script. Conversational AI platforms built for voice interactions balance the two by combining intent recognition with natural language generation. They route structured queries through decision trees and hand off complex interactions to dialogue-trained models rather than classification systems.
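The split between decision trees and dialogue-trained models can be sketched as a small router. Everything here is an assumption made for illustration: the `IntentResult` type, the `route` function, the intent labels, and the 0.8 confidence threshold do not come from Watson, ChatGPT, or any specific platform.

```python
from dataclasses import dataclass

# Hypothetical labels for the rule-based requests mentioned earlier:
# password resets, balance inquiries, and appointment scheduling.
STRUCTURED_INTENTS = {"password_reset", "balance_inquiry", "appointment_scheduling"}


@dataclass
class IntentResult:
    intent: str        # label produced by an upstream intent classifier
    confidence: float  # classifier confidence between 0.0 and 1.0


def route(result: IntentResult, threshold: float = 0.8) -> str:
    """Send confident, structured requests to a decision tree and
    everything else to a dialogue-trained model."""
    if result.intent in STRUCTURED_INTENTS and result.confidence >= threshold:
        return "decision_tree"
    return "dialogue_model"


print(route(IntentResult("password_reset", 0.95)))   # confident and structured
print(route(IntentResult("password_reset", 0.55)))   # structured but uncertain
print(route(IntentResult("billing_dispute", 0.90)))  # off-script request
```

Treating low confidence as a reason to use the dialogue path, rather than forcing the nearest predefined intent, is the design choice that keeps off-script callers out of the wrong decision tree.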

Ecosystem Integration and Deployment

Watsonx works closely with IBM Cloud, Red Hat OpenShift, and enterprise platforms, handling older systems and hybrid cloud environments. IBM's ecosystem includes watsonx.ai for building models, watsonx.govern for tracking compliance, and watsonx.data for managing AI datasets.

This modular approach appeals to organizations that need AI to fit into their existing workflows rather than replace them. Watson's architecture handles mainframe systems and strict data residency requirements more effectively.

How does ChatGPT approach ecosystem integration?

ChatGPT integrates with many tools via APIs, plugins, and Microsoft products like Copilot in Office apps. Its system supports modern cloud infrastructure and prioritizes rapid deployment over custom governance.

What are the limitations of real-time voice interactions?

Neither tool was designed for real-time voice interactions at enterprise scale, where milliseconds of latency affect customer experience and every call must balance compliance with conversational quality.
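To make the latency concern concrete, a voice pipeline can be treated as a chain of stages whose delays add up before the caller hears a reply. The sketch below is a rough illustration: the stage names, the millisecond values, and the 800 ms budget are placeholder assumptions, not measurements of Watson, ChatGPT, or any other product.

```python
def total_latency_ms(stages: dict[str, float]) -> float:
    """Sum per-stage delays for one conversational turn."""
    return sum(stages.values())


def slowest_stage(stages: dict[str, float]) -> str:
    """Name of the stage contributing the most delay."""
    return max(stages, key=stages.get)


# Placeholder numbers for illustration only.
stages = {
    "speech_to_text": 200.0,
    "language_model": 400.0,
    "text_to_speech": 150.0,
    "network": 100.0,
}
budget_ms = 800.0  # hypothetical target for a natural-feeling reply

total = total_latency_ms(stages)
print(total, total > budget_ms, slowest_stage(stages))
```

Measuring each stage separately shows where a text-first system loses time once speech recognition and synthesis are bolted on.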

IBM Watson vs ChatGPT: Which One Should Power Your Call Center Strategy

These AI systems improve different parts of call center operations. Choose based on which operational problem you're solving, not which technology is "better."

🎯 Key Point: The right AI choice depends on your specific operational needs, not the latest technology trends.

💡 Tip: Focus on measurable outcomes like customer satisfaction scores and resolution times rather than flashy AI features.

| Decision Factor | IBM Watson | ChatGPT |
| --- | --- | --- |
| Best For | Structured workflows | Conversational flexibility |
| Implementation | Complex setup | Rapid deployment |
| Cost Model | Enterprise pricing | Usage-based pricing |
| Customization | Deep integration | API-based adaptation |

"87% of call centers report that choosing AI based on operational fit rather than technology popularity leads to higher ROI and faster adoption." — Call Center Technology Report, 2024

When does Watson's structured approach work best?

Watson works well when customer interactions follow set patterns. If 70% of your calls involve account lookups, policy explanations, or status updates that align with existing knowledge bases, Watson's structured approach handles them efficiently. The system routes questions through predetermined decision trees, pulls answers from approved content libraries, and maintains the audit trails compliance teams require.
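Whether your own call mix crosses the 70% mark can be estimated from tagged call logs. This is a minimal sketch: the category labels, the `structured_share` function, and the sample counts are hypothetical and stand in for whatever tagging your call center already uses.

```python
from collections import Counter

# Hypothetical tags for the structured call types named above.
STRUCTURED_CATEGORIES = {"account_lookup", "policy_explanation", "status_update"}


def structured_share(call_categories: list[str]) -> float:
    """Fraction of calls whose tag falls in a structured category."""
    if not call_categories:
        return 0.0
    counts = Counter(call_categories)
    structured = sum(counts[category] for category in STRUCTURED_CATEGORIES)
    return structured / len(call_categories)


# Made-up sample of 100 tagged calls.
calls = (
    ["account_lookup"] * 40
    + ["policy_explanation"] * 20
    + ["status_update"] * 15
    + ["billing_dispute"] * 25
)
share = structured_share(calls)
print(share, share >= 0.70)
```

A share well above the threshold points toward structure-first tooling, while a large remainder of untagged or mixed calls suggests the conversational side deserves more weight.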

Why do financial services companies benefit from Watson's rigidity?

Financial services companies that process loan applications or insurance claims benefit from this strict structure. Every interaction must be documented and explained. Watson's architecture treats compliance as a built-in feature rather than an obstacle. The same inflexibility that frustrates customers with unusual requests protects you when regulators ask how your AI reached specific conclusions.

When should you choose ChatGPT for customer interactions?

ChatGPT works well when customers' needs don't fit standard templates. A shipping delay connected to a payment issue related to an address change from three weeks ago, with topic jumps, interruptions, and increasing emotions, requires understanding context across shifts, recognizing frustration without explicit keywords, and generating responses that fit the situation rather than scripted ones.

How does conversational flexibility reduce agent workload?

Retail and e-commerce operations face this constantly. According to Gartner's 2023 Customer Service and Support Leader Poll, 58% of service interactions involve complex, multi-issue questions requiring understanding across multiple systems.

ChatGPT's conversational flexibility reduces the need for agent intervention by recovering from misunderstandings and adapting when customers change direction. Platforms like Bland apply this adaptability to voice interactions, handling interruptions and topic changes naturally while maintaining context, thereby reducing transfers by addressing complex queries without rigid scripting.

When does structure optimization matter more than conversation quality?

Watson makes sense when your biggest cost driver is routing inefficiency, not call duration. If customers reach the wrong department 40% of the time, Watson's skills-based routing and intent classification reduce misdirected calls. If agents waste time searching disconnected systems for information, Watson's knowledge integration surfaces answers faster. The technology optimizes workflow structure, ensuring queries reach the right resource with proper context.

Which organizations benefit most from Watson's routing capabilities?

This matters most in large companies with specialized departments and complex internal processes. Healthcare systems that route patients among billing, scheduling, and clinical support need Watson's ability to classify intent accurately and route decisively. Getting people to the right place quickly reduces handle time more than making the AI sound friendlier. You're solving a logistics problem, not a communication problem.

How does ChatGPT handle the middle ground between automation and human intervention?

ChatGPT reduces escalations by handling the messy middle ground between simple automation and full agent intervention. Password resets and balance inquiries don't require it. Fraud disputes and technical troubleshooting demand human judgment instead.

But the 40% of calls that fall between these extremes, where customers need help slightly outside the script but the issue isn't complex enough to justify a 15-minute agent call, are where ChatGPT creates capacity.

What makes workload reduction different from faster processing?

The system solves problems requiring explanation, even when the knowledge base lacks a perfect match. It keeps customers happy by handling small frustrations that would have prompted Watson to transfer the call.

Workload reduction comes from handling unclear situations, not from processing straightforward questions faster. The real choice is whether your call center needs structure optimization or conversation intelligence, assuming these general-purpose tools meet the requirements of modern voice interactions.

Related Reading

- Intercom Alternatives

- Zendesk Chat Vs Intercom

- IBM Watson Competitors

- Yellow.ai Competitors

- Kore.ai Competitors

- LivePerson Alternatives

- Intercom Vs Zopim

- Help Scout Vs Intercom

If IBM Watson and ChatGPT Are Not Enough, Here Is What Modern Call Centers Are Moving Toward

Watson and ChatGPT weren't built to replace calls. One improves workflows behind the scenes; the other generates text responses in support channels. Call centers need AI that answers calls, understands requests in real time, and resolves them without scripts or handoffs. This requires voice-first architecture, not text systems retrofitted for audio.

🎯 Key Point: Modern voice AI platforms process speech as it happens, recognize interruptions without breaking flow, and adjust tone based on conversation dynamics. Conversational AI systems deploy agents that answer instantly, manage complex multi-turn dialogues, and scale without adding headcount or extending wait times. Our platform solves a different problem entirely: replacing the need for humans on every inbound call while maintaining the quality of live interaction.

"Call centers need AI that picks up the phone, understands requests in real time, and resolves them without scripts or handoffs."

💡 Tip: Test this in under five minutes. Book a demo to hear how these systems handle your real call scenarios. Compare that directly against Watson's rigid routing or ChatGPT's text-based responses. The gap becomes obvious the moment you hear it handle what neither legacy platform was designed to do.

⚠️ Warning: Legacy platforms like Watson and ChatGPT create bottlenecks when forced into voice-first environments they weren't designed for.